1 Definitions

| Term | Definition |
|---|---|
| NCT-Key | The set of Neural Network parameter indices of the randomly selected locations. |
| Original Fingerprint | The sequence of values obtained using the NCT tracking procedure on the unmodified parameters. |

2 Description of the tracking procedure

The No-Constraint Traceability (NCT) method maps selected positions of the parameters of a specific NN into a secret data structure (the key). In fingerprinting, the fingerprint is made of the parameters pointed at by the secret data structure. In watermarking, the parameters pointed at by the secret data structure are watermarked.

More formally, an ordered subset of neural network parameters is selected through a prescribed selection mechanism. Let I denote the ordered set of selected parameter indices. A mapping function f transforms I into a secret data structure, the NCT-Key, defined as K = f(I), which provides an obfuscated representation of the selected parameter positions.

In fingerprinting mode, the NCT-Key K is used, together with the inverse mapping of f, to reconstruct the index sequence I and to extract from the model the corresponding ordered parameter values. The resulting sequence constitutes the fingerprint and reflects the unmodified state of those parameters.

In watermarking mode, a watermarking function is applied to the parameter tuple indexed by I, producing a modified parameter vector. The NCT-Key is then used to extract the corresponding modified values, yielding the watermarked parameters.

The same NCT-Key structure is used in both modes, ensuring a unified and consistent traceability framework.
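The two modes can be illustrated with a minimal Python sketch. It is not the reference implementation: the XOR mask used for the mapping f, the seed-based selection, the toy parameter list and every function name are assumptions made for illustration only. The watermarking function is assumed to be a plain substitution of the selected values.

```python
import random

def make_indices(num_params, n, seed):
    """Select n distinct parameter positions at random (the ordered set I)."""
    rng = random.Random(seed)
    return rng.sample(range(num_params), n)

def f(indices, secret):
    """Hypothetical mapping f: obfuscate the indices with an XOR mask."""
    return [i ^ secret for i in indices]

def f_inverse(key, secret):
    """Inverse mapping: recover the ordered index sequence I from the key K."""
    return [k ^ secret for k in key]

def extract(params, key, secret):
    """Fingerprinting mode: read the values at the positions given by the key."""
    return [params[i] for i in f_inverse(key, secret)]

def watermark(params, key, secret, mark):
    """Watermarking mode (assumed substitution): write the mark at the keyed positions."""
    marked = list(params)
    for i, m in zip(f_inverse(key, secret), mark):
        marked[i] = m
    return marked

rng = random.Random(0)
params = [rng.gauss(0.0, 0.05) for _ in range(10_000)]  # flattened toy model
SECRET = 0x5A5A                                         # hypothetical secret
I = make_indices(len(params), n=512, seed=1)
K = f(I, SECRET)                                        # NCT-Key, K = f(I)

original_fingerprint = extract(params, K, SECRET)
mark = [rng.gauss(0.0, 0.05) for _ in range(len(I))]
marked_params = watermark(params, K, SECRET, mark)
extracted_mark = extract(marked_params, K, SECRET)
```

In this sketch the same key K serves both modes: `extract` returns the fingerprint on the unmodified parameters and the inserted mark on the watermarked ones.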

3 NCT workflow

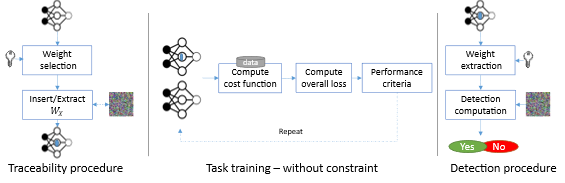

The NCT workflow is illustrated in Figure 3.

The traceability procedure can be performed at any point during the training of the NN, including its initialization.

The Original Traceability Data can be computed at any time during model training from the model parameters (white-box method) and the NCT-Key. Verification can be done at any stage of the workflow by extracting the current Traceability Data and computing a correlation between the Original Traceability Data and the current Traceability Data.

Figure 3. Workflow of the No-Constraint Traceability technology.
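The verification step of the workflow can be sketched as follows. This is a simplified illustration that assumes a Spearman rank correlation without tie handling and a hypothetical decision threshold of 0.2; the data, the threshold and the function names are not taken from the NCT specification.

```python
import math
import random

def ranks(values):
    """Rank of each value (0 = smallest); ties are not averaged."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, idx in enumerate(order):
        result[idx] = rank
    return result

def pearson(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

def verify(original_data, current_data, threshold):
    """Return the correlation and whether it exceeds the (assumed) threshold."""
    corr = spearman(original_data, current_data)
    return corr, corr > threshold

rng = random.Random(42)
original_data = [rng.gauss(0.0, 0.05) for _ in range(512)]
drifted = [v + rng.gauss(0.0, 0.005) for v in original_data]  # same model, slightly modified
unrelated = [rng.gauss(0.0, 0.05) for _ in range(512)]        # a different model

THRESHOLD = 0.2  # illustrative value only
corr_same, detected_same = verify(original_data, drifted, THRESHOLD)
corr_other, detected_other = verify(original_data, unrelated, THRESHOLD)
```

The slightly modified data should remain strongly correlated with the Original Traceability Data, whereas data extracted from an unrelated model should not.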

4 Experimental Conditions

For performance evaluation, the models, the datasets and the application domains mentioned in subsection 6.1 and the three evaluation types presented in subsection 6.2 are used.

The following parameters are specific to the NCT method:

- a is the epoch of the training at which the watermark is inserted,

- N is the number of parameters that are included in the Mark.

5 Evaluation of Imperceptibility impact

This section is relevant for active traceability technologies (watermarking), as the passive traceability technologies do not impact Imperceptibility.

Table 2 and Table 3 report the imperceptibility of NCT applied to five watermarked NNs.

The impact of different values of a and N is evaluated for the image classification task (Table 2). Table 3 then evaluates the Imperceptibility impact for a=0 and N=256 for the up-sampling, image generation and semantic image segmentation tasks.

The experimental results are obtained using the experimental conditions of section 6. Each row provides the Impact of the Tracking procedure on the performance of the Neural Network and the Pearson correlation (corr) for a given (NN model, a and N) configuration. Impact is defined as:

$$\text{Impact} = \frac{\left|\text{Performance}_{uwm} - \text{Performance}_{wm}\right|}{\text{Performance}_{uwm}} \qquad (1)$$

where Performance is one of Top-1 Accuracy, PSNR, SSIM, or mIoU (depending on the task), as presented in subsection 6.1. Impact is multiplied by 100 to be read as a percentage. The indices uwm and wm stand for the unwatermarked and the watermarked models, respectively.
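As a worked illustration of the Impact definition, the short sketch below computes the percentage for a pair of accuracy values. The function name and the two accuracy values are hypothetical and are not measurements from this evaluation.

```python
def impact_percent(perf_uwm, perf_wm):
    """Relative performance change between unwatermarked and watermarked models, in percent."""
    if perf_uwm == 0:
        raise ValueError("the unwatermarked performance must be non-zero")
    return 100.0 * abs(perf_uwm - perf_wm) / perf_uwm

# Hypothetical Top-1 accuracies: 0.80 before and 0.76 after watermarking.
example_impact = impact_percent(0.80, 0.76)
print(f"Impact = {example_impact:.1f} %")
```

Because of the absolute value, an improvement and a degradation of the same size yield the same Impact.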

Table 2 presents the impact of the inserted watermark on the VGG16 and ResNet8 models. For all given configurations, the watermark is successfully retrieved at the 5% significance level based on the Spearman correlation.

Table 2. Imperceptibility evaluation for image classification task.

| Model | a | N | Impact on Top-1 accuracy (%) |
|---|---|---|---|
| VGG16 | 0 | 64 | 0 |
| VGG16 | 0 | 512 | 6 |
| VGG16 | 0 | 4096 | 4 |
| VGG16 | 0 | 16144 | 14 |
| VGG16 | 5 | 64 | 1 |
| VGG16 | 5 | 512 | 5 |
| VGG16 | 5 | 4096 | 0 |
| VGG16 | 5 | 16144 | 5 |
| VGG16 | 50 | 64 | 5 |
| VGG16 | 50 | 512 | 3 |
| VGG16 | 50 | 4096 | 5 |
| VGG16 | 50 | 16144 | 2 |
| VGG16 | 90 | 64 | 3 |
| VGG16 | 90 | 512 | 1 |
| VGG16 | 90 | 4096 | 3 |
| VGG16 | 90 | 16144 | 1 |
| ResNet8 | 0 | 64 | 0 |
| ResNet8 | 0 | 512 | 6 |
| ResNet8 | 0 | 4096 | 3 |
| ResNet8 | 0 | 16144 | 1 |
| ResNet8 | 5 | 64 | 11 |
| ResNet8 | 5 | 512 | 6 |
| ResNet8 | 5 | 4096 | 8 |
| ResNet8 | 5 | 16144 | 3 |
| ResNet8 | 50 | 64 | 11 |
| ResNet8 | 50 | 512 | 8 |
| ResNet8 | 50 | 4096 | 5 |
| ResNet8 | 50 | 16144 | 26 |
| ResNet8 | 90 | 64 | 13 |
| ResNet8 | 90 | 512 | 2 |
| ResNet8 | 90 | 4096 | 30 |
| ResNet8 | 90 | 16144 | 175 |

Table 3 presents the impact of the inserted watermark on DeepLabV3, RDN and pix2pix models. For this table, the NCT parameters are fixed to a=0 and N=512 and the watermark is successfully retrieved at 5% significance level based on the Spearman correlation.

Table 3. Imperceptibility evaluation for three tasks (Semantic Segmentation, Up-Sampling, Generative)

| Task | Model | Impact (%) |
|---|---|---|
| Semantic segmentation | DeepLabV3 | mIoU = 8.7 |
| Up-Sampling | RDN | PSNR = 0, SSIM = 0 |
| City scene generation | pix2pix | PSNR = 3.2, SSIM = 3.4 |

6 Evaluation of Robustness impact

This subsection provides the results of NCT Robustness against Gaussian noise addition, fine-tuning, pruning, quantization, and Watermark Overwriting. For these experiments, N is fixed to 512.

Table 4 and Table 5 provide the robustness evaluation against the Gaussian noise addition modification for the above-mentioned NN models. Each row gives, for a given attack, the computed correlation (corr) and the relative error of the modified model compared to the unmodified model, computed in a similar way as in equation 1. The modification adds zero-mean Gaussian noise to all layers, with the ratio S ∈ {.001, .005, .01, .05, .1, .5, 1} defined as in the Modification table of [2].

The values in Table 4 and Table 5 show that the watermark is successfully detected at the 5% significance level based on the Spearman correlation.
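The Gaussian noise modification can be sketched as below. The sketch assumes that S is the ratio between the standard deviation of the added noise and the standard deviation of the layer parameters; the exact definition is the one given in the Modification table of [2], so this interpretation and the function name are assumptions.

```python
import random
import statistics

def add_gaussian_noise(layer, s, rng):
    """Add zero-mean Gaussian noise whose standard deviation is s times that of the layer."""
    sigma = s * statistics.pstdev(layer)
    return [w + rng.gauss(0.0, sigma) for w in layer]

rng = random.Random(7)
layer = [rng.gauss(0.0, 0.05) for _ in range(5000)]  # toy layer, flattened
noisy = add_gaussian_noise(layer, s=0.1, rng=rng)

# Check the ratio that was actually applied.
noise = [b - a for a, b in zip(layer, noisy)]
measured_ratio = statistics.pstdev(noise) / statistics.pstdev(layer)
```

In an evaluation such as the one reported in Table 4 and Table 5, the function would be applied to every layer before the Traceability Data are extracted and correlated.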

Table 4. Robustness of NCT against Gaussian noise addition for the VGG16 model.

| S | a=0 corr | a=0 error | a=5 corr | a=5 error | a=50 corr | a=50 error | a=90 corr | a=90 error |
|---|---|---|---|---|---|---|---|---|
| .001 | 0.999 | 0.1 | 0.999 | 1 | 0.999 | 0.003 | 0.999 | 0.8 |
| .005 | 0.999 | 0.8 | 0.999 | 1 | 0.999 | 0.005 | 0.999 | 0 |
| .01 | 0.999 | 0.7 | 0.999 | 0.7 | 0.999 | 0.009 | 0.999 | 0.6 |
| .05 | 0.999 | 0.7 | 0.999 | 1.2 | 0.999 | 0.029 | 0.999 | 0.9 |
| .1 | 0.999 | 0.9 | 0.999 | 1.5 | 0.999 | 0.029 | 0.999 | 2.3 |
| .5 | 0.999 | 85.3 | 0.999 | 100 | 0.999 | 0.744 | 0.999 | 81.2 |
| 1 | 0.997 | 513 | 0.996 | 530 | 0.998 | 6.571 | 0.998 | 600 |

Table 5. Robustness of NCT against Gaussian noise addition for the ResNet8 model.

| S | a=0 corr | a=0 error | a=5 corr | a=5 error | a=50 corr | a=50 error | a=90 corr | a=90 error |
|---|---|---|---|---|---|---|---|---|
| .001 | 0.712 | 0 | 0.821 | 0.1 | 0.982 | 0 | 0.999 | 0.1 |
| .005 | 0.712 | 1.3 | 0.821 | 0 | 0.982 | 0 | 0.999 | 0.2 |
| .01 | 0.712 | 0.6 | 0.820 | 0.3 | 0.982 | 0 | 0.999 | 0.8 |
| .05 | 0.710 | 7.1 | 0.824 | 2 | 0.982 | 1.7 | 0.999 | 1 |
| .1 | 0.711 | 10 | 0.819 | 7.5 | 0.981 | 11.4 | 0.998 | 8.5 |
| .5 | 0.681 | 263 | 0.759 | 247 | 0.949 | 47.5 | 0.979 | 204 |
| 1 | 0.625 | 364 | 0.610 | 402 | 0.871 | 63.5 | 0.887 | 355 |

Table 6 and Table 7 provide the robustness evaluation against the fine-tuning modification for the above-mentioned NN models. Each row gives, for a given attack, the computed correlation (corr) and the relative error compared to the unmodified model. The modification resumes the training for E ∈ [1,10] additional epochs.

The values in Table 6 and Table 7 show that the watermark is successfully detected at the 5% significance level based on the Spearman correlation.

Table 6. Robustness of NCT against fine-tuning for the VGG16 model.

| E | a=0 corr | a=0 error | a=5 corr | a=5 error | a=50 corr | a=50 error | a=90 corr | a=90 error |
|---|---|---|---|---|---|---|---|---|
| 1 | 0.998 | 0 | 0.999 | 0 | 0.999 | 0 | 0.999 | 0 |
| 3 | 0.998 | 0 | 0.999 | 0 | 0.999 | 0 | 0.999 | 0 |
| 5 | 0.998 | 0 | 0.999 | 0 | 0.999 | 0 | 0.999 | 0 |
| 7 | 0.998 | 0 | 0.999 | 0 | 0.999 | 0 | 0.999 | 0.1 |
| 10 | 0.998 | 0 | 0.999 | 0.1 | 0.999 | 0 | 0.999 | 0.1 |

Table 7. Robustness of NCT against fine-tuning for the ResNet8 model.

| E | a=0 corr | a=0 error | a=5 corr | a=5 error | a=50 corr | a=50 error | a=90 corr | a=90 error |
|---|---|---|---|---|---|---|---|---|
| 1 | 0.713 | 0 | 0.821 | 0 | 0.983 | 0 | 0.999 | 0 |
| 3 | 0.713 | 0 | 0.821 | 0 | 0.982 | 0 | 0.999 | 0 |
| 5 | 0.713 | 0 | 0.821 | 0 | 0.982 | 0 | 0.999 | 0 |
| 7 | 0.713 | 0 | 0.821 | 0.1 | 0.982 | 0 | 0.999 | 0 |
| 10 | 0.712 | 0 | 0.821 | 0.1 | 0.982 | 0 | 0.999 | 0.1 |

Table 8 and Table 9 provide the robustness evaluation against the quantization modification for the above-mentioned NN models. Each row gives, for a given attack, the computed correlation (corr) and the relative error compared to the unmodified model. The modification compresses the model by reducing the number of bits B ∈ [2,16] of the floating-point representation of the parameters.

The values in Table 8 and Table 9 show that the watermark is successfully detected at the 5% significance level based on the Spearman correlation.
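A simple way to experiment with bit-depth reduction is a uniform quantizer, sketched below. This is a stand-in chosen for illustration: it maps each layer onto 2^B evenly spaced levels between its minimum and maximum, which is not necessarily the quantization scheme used to produce the reported results.

```python
import random

def quantize(layer, bits):
    """Uniformly quantize a layer onto 2**bits levels between its min and max."""
    lo, hi = min(layer), max(layer)
    if hi == lo:
        return list(layer)          # constant layer: nothing to quantize
    step = (hi - lo) / (2 ** bits - 1)
    return [lo + round((w - lo) / step) * step for w in layer]

rng = random.Random(11)
layer = [rng.gauss(0.0, 0.05) for _ in range(2000)]  # toy layer, flattened

quantized_2 = quantize(layer, bits=2)    # at most 4 distinct values remain
quantized_16 = quantize(layer, bits=16)  # nearly lossless on this toy layer
```

With B = 2 only four distinct values survive per layer, so many parameter values collapse onto the same level and rank ties appear in the extracted data, which is one plausible reason for the lower correlations reported at low bit depths.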

Table 8. Robustness of NCT against quantization for the VGG16 model.

| B | a=0 corr | a=0 error | a=5 corr | a=5 error | a=50 corr | a=50 error | a=90 corr | a=90 error |
|---|---|---|---|---|---|---|---|---|
| 16 | 0.998 | 0 | 0.999 | 0 | 0.999 | 2 | 0.999 | 0 |
| 8 | 0.998 | 0 | 0.999 | 1 | 0.999 | 1 | 0.999 | 0 |
| 6 | 0.998 | 1 | 0.999 | 1 | 0.999 | 1 | 0.999 | 1 |
| 4 | 0.993 | 841 | 0.993 | 734 | 0.994 | 814 | 0.99 | 765 |
| 2 | 0.883 | 841 | 0.880 | 734 | 0.882 | 814 | 0.882 | 765 |

Table 9. Robustness of NCT against quantization for the ResNet8 model.

| B | a=0 corr | a=0 error | a=5 corr | a=5 error | a=50 corr | a=50 error | a=90 corr | a=90 error |
|---|---|---|---|---|---|---|---|---|
| 16 | 0.748 | 0 | 0.716 | 0 | 0.992 | 0 | 0.999 | 0 |
| 8 | 0.748 | 0 | 0.716 | 1 | 0.992 | 0 | 0.999 | 0 |
| 6 | 0.747 | 2 | 0.718 | 1 | 0.993 | 0 | 0.998 | 2 |
| 4 | 0.744 | 34 | 0.709 | 23 | 0.987 | 8 | 0.993 | 41 |
| 2 | 0.644 | 341 | 0.643 | 317 | 0.863 | 161 | 0.876 | 281 |

Table 10 and Table 11 provide the robustness results against the pruning modification. Each row gives, for a given attack, the computed correlation (corr) and the relative error compared to the unmodified model. The modification sets to zero a percentage P ∈ [10,90] of the parameters having the smallest absolute values, as described in the Modification table of [2].

The values in Table 10 and Table 11 show that the watermark is successfully detected at the 5% significance level based on the Spearman correlation.
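Magnitude pruning as described above can be sketched in a few lines. The sketch prunes a single flattened layer; whether the percentage P is applied per layer or over the whole model is not stated here, so the per-layer choice and the function name are assumptions.

```python
import random

def magnitude_prune(layer, percent):
    """Set to zero the given percentage of parameters with the smallest absolute values."""
    k = int(len(layer) * percent / 100)
    order = sorted(range(len(layer)), key=lambda i: abs(layer[i]))
    pruned = list(layer)
    for i in order[:k]:
        pruned[i] = 0.0
    return pruned

rng = random.Random(3)
layer = [rng.gauss(0.0, 0.05) for _ in range(1000)]  # toy layer, flattened
pruned = magnitude_prune(layer, percent=50)
```

Marked parameters with large absolute values are left untouched by this modification, while those with small absolute values are replaced by zero.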

Table 10. Robustness of NCT against magnitude pruning for the VGG16 model.

| P | a=0 corr | a=0 error | a=5 corr | a=5 error | a=50 corr | a=50 error | a=90 corr | a=90 error |
|---|---|---|---|---|---|---|---|---|
| 10 | 0.998 | 0 | 0.996 | 0 | 0.999 | 1 | 0.999 | 1 |
| 20 | 0.998 | 0 | 0.999 | 0 | 0.999 | 1 | 0.999 | 1 |
| 50 | 0.998 | 12 | 0.999 | 9 | 0.999 | 11 | 0.999 | 9 |
| 80 | 0.998 | 586 | 0.999 | 350 | 0.999 | 525 | 0.999 | 288 |
| 90 | 0.998 | 841 | 0.999 | 730 | 0.999 | 814 | 0.999 | 764 |

Table 11. Robustness of NCT against magnitude pruning for the ResNet8 model.

| P | a=0 corr | a=0 error | a=5 corr | a=5 error | a=50 corr | a=50 error | a=90 corr | a=90 error |
|---|---|---|---|---|---|---|---|---|
| 10 | 0.748 | 0 | 0.716 | 1 | 0.992 | 0 | 0.999 | 0 |
| 20 | 0.748 | 4 | 0.716 | 2 | 0.990 | 5 | 0.998 | 2 |
| 50 | 0.750 | 47 | 0.714 | 100 | 0.985 | 125 | 0.993 | 94 |
| 80 | 0.732 | 272 | 0.681 | 315 | 0.964 | 313 | 0.978 | 266 |
| 90 | 0.732 | 327 | 0.654 | 337 | 0.940 | 346 | 0.964 | 294 |

The last robustness study focuses on the watermark overwriting modification. Five marks of the same length N, denoted 1st mark, 2nd mark, 3rd mark, 4th mark, and 5th mark, are successively inserted at epoch 0. Each of these marks can be associated with one of the five Actors: Architect, Trainer, Tracker, Distributor, and Generic user. Under this setup, the last four marks can be considered overwriting attacks on the 1st mark.

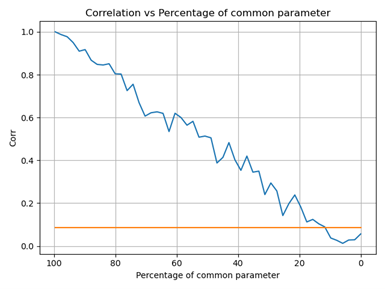

By design, NCT allows multiple watermarks to be inserted. Figure 4 shows the impact of inserting another watermark at the same epoch, as a function of the number of parameters shared among the watermarks.

Figure 4. Correlation of the inserted mark against the percentage of parameters replaced by another mark. The line y=0.018 corresponds to the detection threshold at the 5% significance level based on the Spearman correlation.
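The overwriting setup can be reproduced on a toy model with the sketch below. It assumes that each mark uses its own independently drawn key and that insertion is a plain substitution; the model size, the seeds and the function name are hypothetical.

```python
import random

def insert_mark(params, n, seed):
    """Draw a key of n positions and substitute a random mark in place (assumed scheme)."""
    rng = random.Random(seed)
    key = rng.sample(range(len(params)), n)
    mark = [rng.gauss(0.0, 0.05) for _ in range(n)]
    for i, m in zip(key, mark):
        params[i] = m
    return key, mark

init = random.Random(0)
params = [init.gauss(0.0, 0.05) for _ in range(20_000)]  # flattened toy model

# Five marks inserted one after the other, each with its own key.
marks = [insert_mark(params, n=512, seed=s) for s in range(1, 6)]

first_key, first_mark = marks[0]
later_positions = set()
for key, _ in marks[1:]:
    later_positions.update(key)

# Share of the 1st mark whose positions were reused by a later mark.
replaced = [i in later_positions for i in first_key]
replaced_percent = 100.0 * sum(replaced) / len(first_key)
extracted = [params[i] for i in first_key]
```

Positions of the 1st mark that are not reused by a later key still hold their original mark values, so the correlation of the 1st mark is expected to decrease with `replaced_percent`.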

7 Evaluation of Computational cost

The NCT method does not impact the memory footprint.

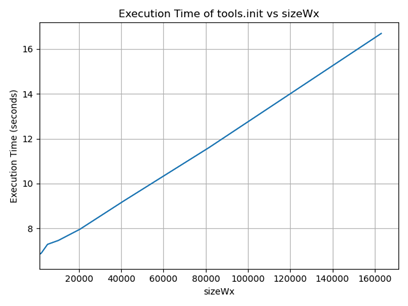

For the active technique, the insertion phase consists of randomly selecting a set of positions within a matrix and modifying the corresponding entries. The insertion is not computationally expensive because each insertion involves only substitution operations, as illustrated in Figure 5.

The detection phase involves extracting the values located at the same selected matrix positions and computing a correlation measure between the extracted values and the reference traceability data. The overall detection process has a time complexity of O(n log n), where n is the size of the NCT-Key.

Figure 5. Execution time of the insertion procedure as a function of the number of watermarked parameters N.
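A rough way to observe the cost of insertion is to time the substitution loop for several values of N, as in the sketch below. The model size of one million parameters, the values of N and the function name are illustrative assumptions, and the measured times depend on the machine.

```python
import random
import time

def time_insertion(num_params, n, seed=0):
    """Time the substitution of n marked values into a flattened parameter list."""
    rng = random.Random(seed)
    params = [0.0] * num_params
    positions = rng.sample(range(num_params), n)
    mark = [rng.random() for _ in range(n)]
    start = time.perf_counter()
    for i, m in zip(positions, mark):   # one substitution per marked parameter
        params[i] = m
    elapsed = time.perf_counter() - start
    return elapsed, params, positions, mark

timings = {}
for n in (64, 512, 4096, 16144):
    elapsed, params, positions, mark = time_insertion(1_000_000, n)
    timings[n] = elapsed
    print(f"N={n:6d}  insertion time = {elapsed * 1e6:.1f} microseconds")
```

The loop performs one substitution per marked parameter, so its cost grows linearly with N. Detection additionally needs a sort when a rank correlation is used, which is consistent with the O(n log n) bound stated above.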