| 1. Function | 2. Reference Model | 3. Input/Output Data |

| 4. SubAIMs | 5. JSON Metadata | 6. Profiles |

| 7. Reference Software | 8. Conformance Testing | 9. Performance Assessment |

1. Function

The User State Description (PGM-USD) AIM derives a description of the User’s observable state by interpreting enriched audio‑visual scene information and cross‑modal correspondence evidence.

USD operates on enhanced scene descriptors and alignment evidence produced by Space and User Description and applies evidence‑based reasoning under directive control to construct a User Entity State suitable for downstream reasoning, control, and personalization.

2. Reference Model

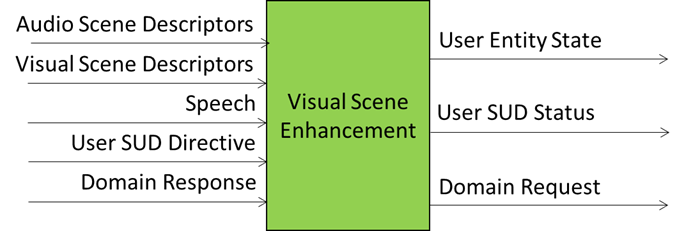

Figure 1 gives the Reference Model of User State Description (PGM-USD) AIM.

Figure 1 – Reference Model of User State Description (PGM-USD) AIM.

The User State Description reference model consists of an evidence‑driven interpretative pipeline operating on enriched perceptual inputs.

At a conceptual level, USD performs the following functions:

- Multimodal Evidence Integration: Integration of enhanced audio descriptors, enhanced visual descriptors, and audio‑visual scene geometry to establish user‑centric evidence.

- Linguistic and Paralinguistic Analysis: Interpretation of textual input (e.g. ASR output) and associated audio evidence to extract communicative cues relevant to user state.

- Behavioural and Expressive Analysis: Interpretation of visual and audio evidence to derive observable behavioural and expressive indicators.

- Entity State Construction: Evidence‑based construction of a User Entity State under the constraints imposed by directives and policies.

- Output Packaging and Provenance: Packaging of the constructed User Entity State together with status and provenance metadata.

The reference model explicitly separates evidence extraction, interpretation, and state construction, ensuring traceability, auditability, and modularity.
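The separation of evidence extraction, interpretation, and state construction can be illustrated with a minimal Python sketch. It is not the normative design: the `Evidence` and `UserEntityState` classes, their fields, the function names, and the `min_confidence` threshold (standing in for a directive constraint) are all assumptions introduced here for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Evidence:
    """One piece of extracted evidence; all field names are hypothetical."""
    modality: str      # e.g. "audio", "visual", "text"
    cue: str           # e.g. "raised_voice"
    confidence: float  # assumed to lie in 0.0 .. 1.0


@dataclass
class UserEntityState:
    """Toy stand-in for the User Entity State; not the normative schema."""
    indicators: dict = field(default_factory=dict)
    provenance: list = field(default_factory=list)


def extract_evidence(descriptors: dict) -> list:
    """Stage 1: turn descriptors into evidence without interpreting them."""
    return [
        Evidence(modality, cue, conf)
        for modality, cues in descriptors.items()
        for cue, conf in cues.items()
    ]


def interpret(evidence: list, min_confidence: float) -> list:
    """Stage 2: keep evidence that passes a hypothetical directive threshold."""
    return [e for e in evidence if e.confidence >= min_confidence]


def construct_state(interpreted: list) -> UserEntityState:
    """Stage 3: build the state and record the evidence behind each indicator."""
    state = UserEntityState()
    for e in interpreted:
        state.indicators[e.cue] = e.confidence
        state.provenance.append(f"{e.modality}:{e.cue}")
    return state


def describe_user_state(descriptors: dict, min_confidence: float = 0.5) -> UserEntityState:
    """Chain the three stages; each stage can be tested or replaced on its own."""
    return construct_state(interpret(extract_evidence(descriptors), min_confidence))
```

Because each stage only consumes the output of the previous one, the provenance list can be traced back to individual pieces of evidence, which is the kind of traceability the reference model aims for.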

3. Input/Output Data

Table 1 – Input and Output Data of User State Description (PGM-USD) AIM

| Input Data | Description |
|---|---|
| Enhanced Audio Scene Descriptors | Audio Scene Descriptors augmented with derived and semantic properties by Audio Scene Enhancement. |
| Enhanced Visual Scene Descriptors | Visual Scene Descriptors augmented with derived and semantic properties by Visual Scene Enhancement. |
| Speech | Speech component of Enhanced Audio Scene Descriptors. |
| User SUD Directive | Control directives specifying scope, depth, or policy constraints for user state interpretation. |
| User Domain Request | Domain‑specific knowledge supporting user state interpretation and constraint enforcement. |
| Output Data | Description |
| User Entity State | Structured description of the User’s observable state derived from multimodal evidence. |
| User SUD Status | Status information describing the execution and outcome of User State Description processing. |
| User Domain Response | Response to domain‑specific knowledge request. |
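As an informal illustration, the data items of Table 1 could be grouped into two containers. This is only a sketch: the class names, the snake_case field names, and the `unset_fields` helper are assumptions made here, and the payloads are typed as `Any` because the actual data formats are defined by the respective specifications, not by this example.

```python
from dataclasses import dataclass, fields
from typing import Any, Optional


@dataclass
class UsdInputs:
    """Input Data rows of Table 1 (field names are hypothetical)."""
    enhanced_audio_scene_descriptors: Optional[Any] = None
    enhanced_visual_scene_descriptors: Optional[Any] = None
    speech: Optional[Any] = None
    user_sud_directive: Optional[Any] = None
    user_domain_request: Optional[Any] = None


@dataclass
class UsdOutputs:
    """Output Data rows of Table 1 (field names are hypothetical)."""
    user_entity_state: Optional[Any] = None
    user_sud_status: Optional[Any] = None
    user_domain_response: Optional[Any] = None


def unset_fields(record) -> list:
    """Return the names of fields still set to None, in declaration order."""
    return [f.name for f in fields(record) if getattr(record, f.name) is None]
```

A caller could use `unset_fields(UsdInputs(speech=...))` to see which inputs have not been supplied yet. Whether any input is mandatory is not stated in Table 1, so the helper only reports and does not enforce.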

4. SubAIMs (Informative)

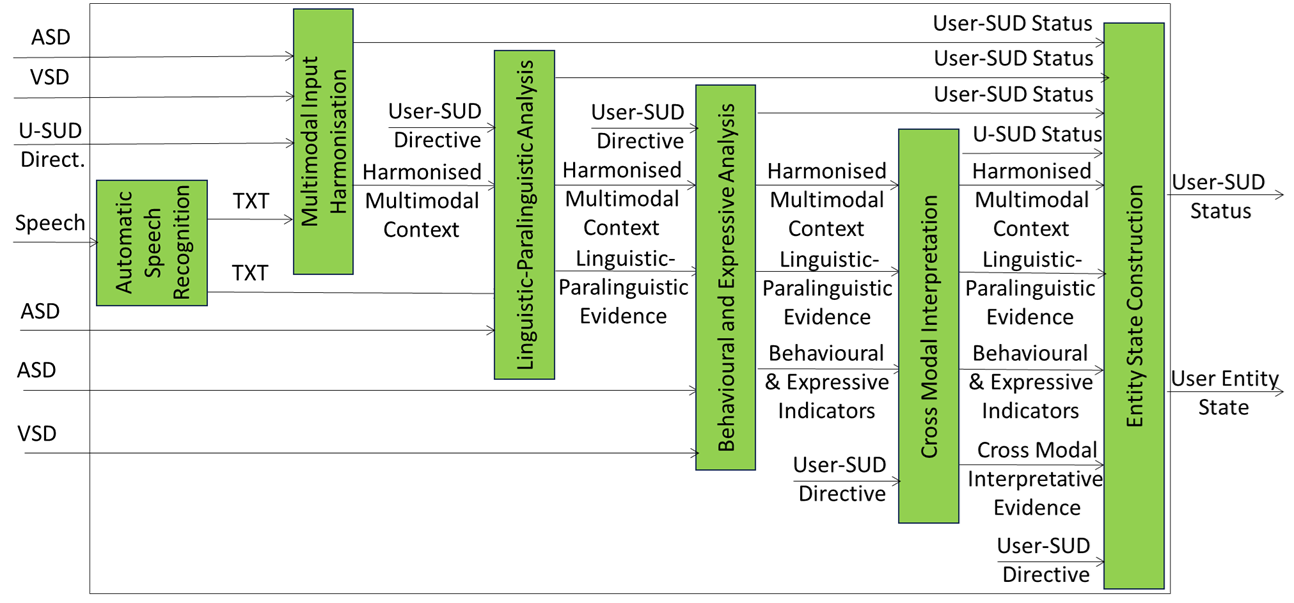

A User State Description (PGM-USD) AIM implementation may adopt the architecture of Figure 2.

Figure 2 – Reference Model of User State Description (PGM-USD) Composite AIM

A User State Description (PGM-USD) AIM adopting the architecture of Figure 2 uses the Input and Output Data of Table 2.

Table 2 – Input and Output Data of the User State Description (PGM-USD) Composite AIM’s SubAIMs.

| SubAIM Specification | Purpose | Input Data | Output Data |
|---|---|---|---|
| Automatic Speech Recognition | Converts speech input into textual representation suitable for downstream multimodal processing. | Speech | TXT |
| Multimodal Input Harmonisation | Aligns audio, visual, and textual inputs into a harmonised multimodal context without semantic interpretation. | ASD, VSD, TXT, User‑SUD Directive | Harmonised Multimodal Context |
| Linguistic–Paralinguistic Analysis | Extracts linguistic and paralinguistic evidence from harmonised multimodal context. | Harmonised Multimodal Context, User‑SUD Directive, Domain RS | Linguistic‑Paralinguistic Evidence |
| Behavioural and Expressive Analysis | Derives behavioural and expressive indicators of the User from multimodal evidence. | Harmonised Multimodal Context, User‑SUD Directive, Domain RS | Behavioural & Expressive Indicators |
| Cross‑Modal Interpretation | Integrates linguistic and behavioural evidence into cross‑modal interpretative evidence under directives and domain constraints. | Linguistic‑Paralinguistic Evidence, Behavioural & Expressive Indicators, User‑SUD Directive, Domain RS | Cross‑Modal Interpretative Evidence |
| Entity State Construction | Constructs the User Entity State from cross‑modal interpretative evidence. | Cross‑Modal Interpretative Evidence, User‑SUD Directive | User Entity State, User‑SUD Status |
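The data flow implied by Table 2 can be checked mechanically. The sketch below, offered as an illustration and not as part of the specification, records each SubAIM's inputs and outputs as listed in the table and derives a valid execution order with Python's standard `graphlib.TopologicalSorter` (Python 3.9 or later). The `SUBAIMS` dictionary and the `execution_order` function are names introduced here; data names use plain ASCII hyphens for convenience.

```python
from graphlib import TopologicalSorter

# SubAIM name -> (input data, output data), transcribed from Table 2.
SUBAIMS = {
    "Automatic Speech Recognition": (
        {"Speech"}, {"TXT"}),
    "Multimodal Input Harmonisation": (
        {"ASD", "VSD", "TXT", "User-SUD Directive"},
        {"Harmonised Multimodal Context"}),
    "Linguistic-Paralinguistic Analysis": (
        {"Harmonised Multimodal Context", "User-SUD Directive", "Domain RS"},
        {"Linguistic-Paralinguistic Evidence"}),
    "Behavioural and Expressive Analysis": (
        {"Harmonised Multimodal Context", "User-SUD Directive", "Domain RS"},
        {"Behavioural & Expressive Indicators"}),
    "Cross-Modal Interpretation": (
        {"Linguistic-Paralinguistic Evidence",
         "Behavioural & Expressive Indicators",
         "User-SUD Directive", "Domain RS"},
        {"Cross-Modal Interpretative Evidence"}),
    "Entity State Construction": (
        {"Cross-Modal Interpretative Evidence", "User-SUD Directive"},
        {"User Entity State", "User-SUD Status"}),
}


def execution_order(subaims: dict) -> list:
    """Order SubAIMs so that each runs after the producers of its inputs."""
    # Map every output data item to the SubAIM that produces it.
    producer = {out: name for name, (_, outs) in subaims.items() for out in outs}
    # A SubAIM depends on the producers of its inputs; inputs with no
    # producer (e.g. Speech, ASD, directives) come from outside the AIM.
    graph = {
        name: {producer[i] for i in ins if i in producer}
        for name, (ins, _) in subaims.items()
    }
    return list(TopologicalSorter(graph).static_order())
```

The two analysis SubAIMs depend only on the Harmonised Multimodal Context, so their relative order is not fixed and an implementation could run them in parallel.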

5. JSON Metadata

https://schemas.mpai.community/PGM1/V1.0/AIMs/UserStateDescription.json

6. Profiles

7. Reference Software

8. Conformance Testing

9. Performance Assessment