The full range of MPAI activities, results, and plans presented

MPAI publishes Metaverse Model – Functionality Profiles

MPAI approves Context-based Audio Enhancement V2

Meetings in the coming March-April meeting cycle

The full range of MPAI activities, results, and plans presented

MPAI presented the range of activities it conducts, the results obtained, and its plans at an event held on the 31st of March (see the PowerPoint presentations and the video recordings). At this event, which lasted more than ten hours, 16 speakers presented how practical standards can be developed that apply AI to a vast range of applications.

MPAI publishes Metaverse Model – Functionality Profiles

The metaverse is still not a well-defined notion, but it is clear that it will enable new and important forms of communication affecting industry and consumers. Standards are needed to enable interoperability between different metaverse instances, and the MPAI-MMM project is MPAI's response to that need.

Read the Technical Report as a pdf file or as html pages, see a concise description of the Technical Report as a PowerPoint file, or watch the video of the 7 April presentation (YouTube or non-YouTube).

Achieving metaverse interoperability is difficult for several reasons:

- There is no common understanding of what a “metaverse” is or should be in detail.

- There are many potential metaverse use cases.

- There are already successful independent metaverse implementations.

- Some important technologies enabling more advanced and unforeseen forms of metaverse may be uncovered in the next several years.

MPAI has developed a metaverse standard roadmap whose first milestone is based on the idea of collecting the functionalities that potential metaverse users expect the metaverse to provide, instead of trying to define what the metaverse is. See Technical Report – MPAI Metaverse Model – Functionalities.

Potential metaverse users with different needs are likely to require different technologies. Therefore, if every Metaverse Instance had to be interoperable with every other Metaverse Instance, implementers would be forced to take on board technologies that they do not need and that are potentially costly.

MPAI assumes that metaverse standardisation should be based on profiles, an approach that has been used to deal with similar situations. This will enable metaverse implementers to select the profile providing the functionalities they need.

So far, the notion of profile has been applied to technologies, but some key metaverse technologies are not yet available and at this time it is not clear which technologies, whether existing or not, will eventually be selected. Therefore, the second milestone of the MPAI roadmap targets Functionality Profiles, i.e., profiles that are defined by the functionalities they offer, not by the technologies implementing them.
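As a minimal sketch of the idea, a Functionality Profile can be modelled as a named set of functionalities, and an implementer picks the smallest profile that covers what they need. The profile names and functionality labels below are hypothetical illustrations, not taken from the Technical Report.

```python
from typing import Dict, FrozenSet, Iterable, Optional

# Hypothetical profiles: names and functionality labels are illustrative
# assumptions only; they do not come from the MPAI Technical Report.
PROFILES: Dict[str, FrozenSet[str]] = {
    "basic": frozenset({"register", "locate_avatar"}),
    "commerce": frozenset({"register", "locate_avatar", "transact"}),
    "social": frozenset({"register", "locate_avatar", "animate_avatar", "speak"}),
}


def select_profile(needed: Iterable[str]) -> Optional[str]:
    """Return the smallest profile whose functionalities cover `needed`.

    Returns None when no single profile offers every needed functionality.
    """
    needed_set = frozenset(needed)
    # Keep only profiles that are supersets of the needed functionalities.
    candidates = [
        (len(funcs), name)
        for name, funcs in PROFILES.items()
        if needed_set <= funcs
    ]
    # Smallest profile first; ties are broken alphabetically by name.
    return min(candidates)[1] if candidates else None


if __name__ == "__main__":
    print(select_profile({"register", "animate_avatar"}))
```

The profile is chosen by the functionalities it offers, without reference to the technologies that would implement them, which is the distinction the second milestone draws.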

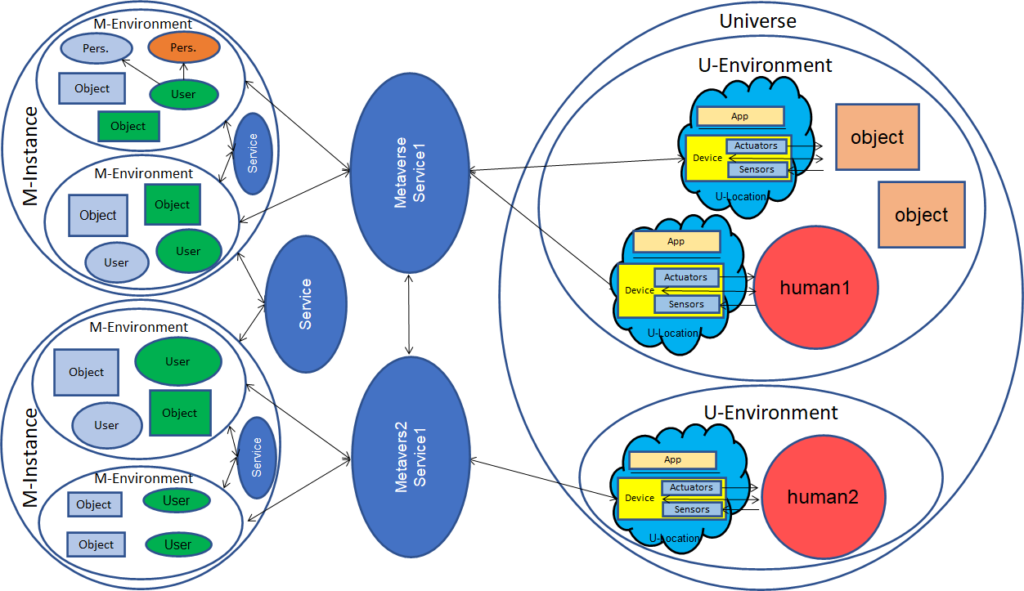

Figure 1 depicts the basic elements of the metaverse instances considered by the draft Technical Report – MPAI Metaverse Model – Functionality Profiles approved for publication. The document is not final because Community Comments will be collected until the 17th of April. Figure 1 illustrates the fact that Metaverse Servers and Devices enable the connection between real-world humans and objects and Metaverse Environment Users and Objects. Users can show up as Personae that are replicas of humans or autonomous agents. If interoperability and business considerations permit, a human may join as a User in a Metaverse Instance other than the one they are registered with.

Figure 1 – A Metaverse scenario of MPAI-MMM Functionality Profiles

The Technical Report defines the infrastructure that enables the different elements of a metaverse instance (Users, Devices, and Services) to request that other elements of the same or a different metaverse instance execute actions, such as locating and animating an avatar. The infrastructure rests on 3 main entities: Actions, i.e., functions performed on Items; Items, i.e., entities including objects, models, assets, etc.; and Data Types used by Actions and Items.

The document examines 7 use cases to test the ability of the infrastructure to support their implementation and identifies 4 functionality profiles.
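The relationship between the three entities can be sketched as follows: one element sends a request asking another element to execute an Action on an Item, with parameters expressed in agreed Data Types. All class, field, and action names here are hypothetical and chosen for illustration; the Technical Report defines its own.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Item:
    """An entity acted upon (object, model, asset, ...). Hypothetical layout."""
    item_id: str
    data: Dict[str, object] = field(default_factory=dict)


@dataclass
class ActionRequest:
    """A request from one element asking another to execute an Action."""
    source: str  # requesting element, e.g. a User or Device
    target: str  # element asked to execute the Action, e.g. a Service
    action: str  # name of the Action to perform
    item: Item   # the Item the Action is performed on
    params: Dict[str, object] = field(default_factory=dict)


Handler = Callable[[Item, Dict[str, object]], Item]


def locate(item: Item, params: Dict[str, object]) -> Item:
    """Illustrative Action: place an Item at a position."""
    item.data["position"] = params["position"]
    return item


# Registry mapping Action names to handlers (assumed, for illustration).
HANDLERS: Dict[str, Handler] = {"locate": locate}


def execute(request: ActionRequest) -> Item:
    """Execute the requested Action, or fail if it is not supported."""
    handler = HANDLERS.get(request.action)
    if handler is None:
        raise ValueError(f"unsupported action: {request.action}")
    return handler(request.item, request.params)


if __name__ == "__main__":
    avatar = Item("avatar-1")
    req = ActionRequest("user-a", "service-b", "locate", avatar,
                        {"position": (1.0, 2.0, 0.0)})
    print(execute(req).data)
```

In this sketch a target that does not support a requested Action rejects it explicitly, which is one way an instance implementing a smaller profile could respond to a request from an instance implementing a larger one.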

The document is publicly available. Anybody can send comments to the MPAI Secretariat by the 17th of April 2023.

Support media: pdf file, Powerpoint file, video presentation YouTube – non-YouTube (2023/04/07).

MPAI approves Context-based Audio Enhancement V2

Version 1 of Context-based Audio Enhancement was first approved in December 2021. Like other MPAI standards, it is organised in use cases, which share the common characteristic of using context information to improve the user experience of audio-related applications, e.g., entertainment, teleconferencing, and restoration, in a variety of contexts such as in the home, in the car, on-the-go, and in the studio. The supported use cases are Emotion Enhanced Speech, Audio Recording Preservation, Speech Restoration System, and Enhanced Audioconference Experience.

Version 1 enhances the audioconference experience by making available the description of the audio scene, which may include several persons in an audioconference room. Version 2 extends the audio scene description to other use cases, in particular the outdoor context of a group of users verbally interacting with an autonomous vehicle.

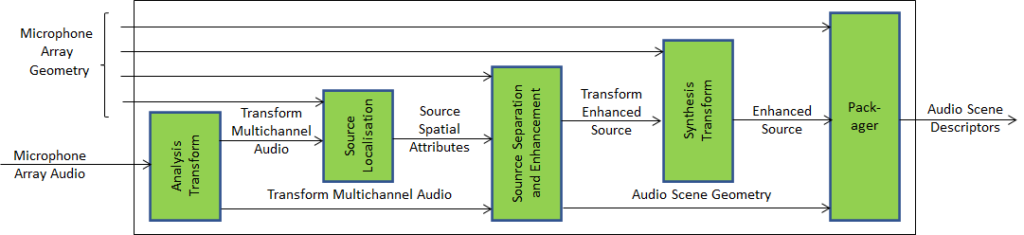

Version 2 introduces a so-called Composite AI Module that receives the audio signals from a microphone array, together with the geometry of the array, and produces Audio Scene Descriptors.

Figure 2 – Audio Scene Description Composite AIM

The current version of the standard provides the individual audio sources with their directions.
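To make the shape of such an output concrete, here is a minimal sketch of a container holding the microphone array geometry and a list of audio sources with their directions. The field names, the azimuth/elevation convention, and the units are assumptions for illustration; the standard specifies its own Audio Scene Descriptors format.

```python
import math
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class AudioSource:
    """One separated audio source and its direction (assumed convention)."""
    source_id: str
    azimuth_deg: float    # angle in the horizontal plane, 0 = x axis
    elevation_deg: float  # angle above the horizontal plane

    def unit_vector(self) -> Vec3:
        """Convert the direction to a Cartesian unit vector."""
        az = math.radians(self.azimuth_deg)
        el = math.radians(self.elevation_deg)
        return (
            math.cos(el) * math.cos(az),
            math.cos(el) * math.sin(az),
            math.sin(el),
        )


@dataclass
class AudioSceneDescriptors:
    """Hypothetical container: array geometry plus the detected sources."""
    mic_positions_m: List[Vec3]  # microphone positions in metres
    sources: List[AudioSource] = field(default_factory=list)


if __name__ == "__main__":
    scene = AudioSceneDescriptors(
        mic_positions_m=[(0.0, 0.0, 0.0), (0.1, 0.0, 0.0)],
        sources=[AudioSource("speaker-1", azimuth_deg=90.0, elevation_deg=0.0)],
    )
    for src in scene.sources:
        print(src.source_id, src.unit_vector())
```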

Meetings in the coming March-April meeting cycle

| Group name | 20-24 Mar | 27-31 Mar | 03-07 Apr | 10-14 Apr | 17-21 Apr | Time (UTC) |
| --- | --- | --- | --- | --- | --- | --- |
| AI Framework | | 27 | 3 | 10 | 17 | 15 |
| AI-based End-to-End Video Coding | | 29 | | 12 | | 14 |
| AI-Enhanced Video Coding | | | 5 | | 19 | 14 |
| Artificial Intelligence for Health Data | | | 7 | | | 14 |
| Avatar Representation and Animation | | 30 | 6 | 13 | | 13:30 |
| Communication | | 30 | | 13 | | 15 |
| Connected Autonomous Vehicles | | | | | 19 | 13 |
| | | 29 | 5 | 12 | | 15 |
| Context-based Audio Enhancement | | 28 | 4 | | 18 | 16 |
| Governance of MPAI Ecosystem | | | | 11 | | 17 |
| Industry and Standards | | | 7 | | | 16 |
| MPAI Metaverse Model | 24 | | 7 | 14 | 21 | 15 |
| Multimodal Conversation | | 28 | 4 | 11 | 18 | 14 |
| Neural Network Watermarking | | 28 | 4 | 11 | 18 | 15 |
| Server-based Predictive Multiplayer Gaming | | 30 | 6 | 13 | | 14:30 |
| XR Venues | | 28 | 4 | 11 | 18 | 18 |
| General Assembly (MPAI-31) | | | | | 19 | 15 |

Non-MPAI members may join the meetings given in italics in the table above. If interested, please contact the MPAI Secretariat.