Highlights

- A new look at the MPAI “Assets”

- A look at the coming MPAI-HMC new standard

- Meetings in the coming January-February meeting cycle

A new look at the MPAI “Assets”

What are the assets of the MPAI standards development organisation? Nothing physical, of course, only intellectual: specifically, Technical Specifications and related documents. Currently, MPAI has 9 Technical Specifications addressing execution of AI applications, audio, autonomous vehicles, finance, governance, multimodal conversation, objects and scenes, and avatars. In less than a month, it will add one on human-machine communication, bringing the total MPAI Assets to ten standards.

Is that the whole story?

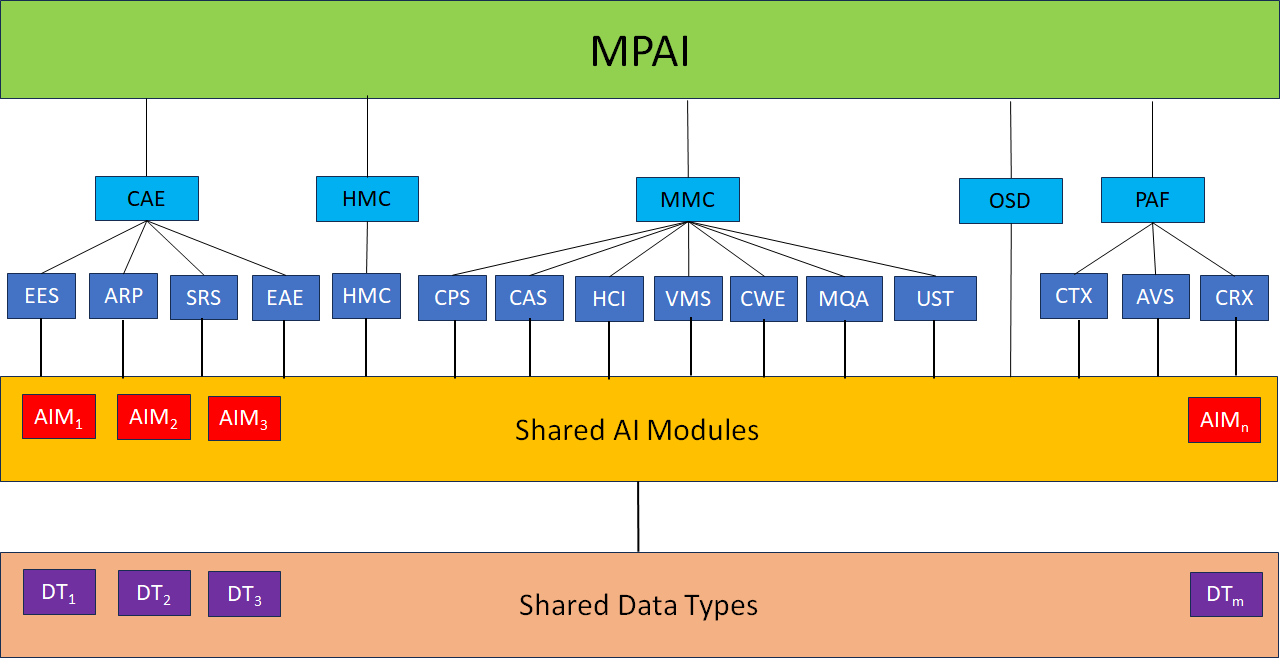

Until recently, the answer would have been yes. That changed when the last General Assembly (MPAI-40) approved one new standard – Object and Scene Description (MPAI-OSD) – and two extensions to existing standards – Multimodal Conversation (MPAI-MMC) and Portable Avatar Format (MPAI-PAF). They are all based on the notion of AI Workflow (AIW) composed of interconnected AI Modules (AIM) executed in the AI Framework (AIF) as specified by the MPAI-AIF standard.

What is the advantage in doing so? Unlike in the standards developed so far, there is now only one AI Module chapter and one Data Type chapter, both referenced by the three standards.

The same approach has been applied to Human and Machine Communication (MPAI-HMC), a draft standard that has been published with a request for Community Comments. Interestingly, this new standard does not define any new Data Type, defines only one new AI Module, and specifies one Composite AIM, i.e., an AIM that includes interconnected AIMs. The new combination of AIMs specified by MPAI-HMC offers new ways for humans and machines to interact with other humans and machines.

This is represented in Figure 1.

Figure 1 – The new organization of MPAI-AIF-based standards

The five turquoise blocks represent the standards. The blue blocks below them represent the use cases. The rectangle below includes all AI Modules, and the one at the bottom includes the Data Types.

These four standards are not the only ones to which this approach can be applied. Compression and Understanding of Financial Data is another and, among the standards under development, likely candidates are Extended Reality Venues and Connected Autonomous Vehicle – Data.

Component-based software engineering is a software engineering style that aims to build software out of modular components. MPAI is applying this notion to the world of standards.
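For readers who think in code, the idea of shared AI Modules combined into workflows can be pictured with a minimal Python sketch. Everything in it is an assumption made for illustration: the class names `AIM` and `CompositeAIM`, the `CATALOGUE` dictionary, the module names and the two "standards" are hypothetical and are not taken from any MPAI specification. The sketch also chains modules in a simple sequence, whereas a real AI Workflow may interconnect its AIMs in a more general topology.

```python
from typing import Any, Callable, Dict, List

Data = Dict[str, Any]


class AIM:
    """Hypothetical stand-in for an AI Module: a named data-to-data function."""

    def __init__(self, name: str, func: Callable[[Data], Data]) -> None:
        self.name = name
        self._func = func

    def run(self, data: Data) -> Data:
        return self._func(data)


class CompositeAIM(AIM):
    """An AIM made of other AIMs; here they are simply run one after another."""

    def __init__(self, name: str, parts: List[AIM]) -> None:
        super().__init__(name, self._run_parts)
        self.parts = parts

    def _run_parts(self, data: Data) -> Data:
        for part in self.parts:
            data = part.run(data)
        return data


# One shared catalogue: each module is defined once (illustrative names only).
CATALOGUE: Dict[str, AIM] = {
    "Normalise": AIM("Normalise", lambda d: {**d, "text": d["text"].strip().lower()}),
    "Tokenise": AIM("Tokenise", lambda d: {**d, "tokens": d["text"].split()}),
    "Count": AIM("Count", lambda d: {**d, "count": len(d["tokens"])}),
}

# Two hypothetical standards that reference catalogue entries by name
# instead of redefining them.
STANDARD_A = ["Normalise", "Tokenise"]
STANDARD_B = ["Normalise", "Tokenise", "Count"]


def build_workflow(name: str, module_names: List[str]) -> CompositeAIM:
    """Assemble a workflow from modules looked up in the shared catalogue."""
    return CompositeAIM(name, [CATALOGUE[n] for n in module_names])


workflow_b = build_workflow("WorkflowB", STANDARD_B)
result = workflow_b.run({"text": "  Hello MPAI World "})
print(result["tokens"], result["count"])
```

Both hypothetical standards reuse the very same `Normalise` and `Tokenise` objects, which mirrors the point made above: a module is specified once and referenced wherever it is needed.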

See the links below and enjoy:

MPAI-HMC: https://mpai.community/standards/mpai-hmc/mpai-hmc-specification/

MPAI-MMC: https://mpai.community/standards/mpai-mmc/mpai-mmc-specification/

MPAI-OSD: https://mpai.community/standards/mpai-osd/mpai-osd-specification/

MPAI-PAF: https://mpai.community/standards/mpai-paf/mpai-paf-specification/

Is this the end of the story? No. As reported above, MPAI-HMC does not require any new Data Type and only one new AI Module. Still, some AI Modules benefit from the use of additional input and output data while retaining their basic functionality. One way to address this is to introduce the notion of AIM Profile.

This is a new area of work. Stay tuned for more information!

A look at the coming MPAI-HMC new standard

At its 40th General Assembly (MPAI-40), MPAI approved one new standard, three extension standards and a draft standard posted for Community Comments – Human and Machine Communication (MPAI-HMC). MPAI-HMC does not specify new technologies but leverages those from existing standards: Context-based Audio Enhancement (MPAI-CAE), Multimodal Conversation (MPAI-MMC), the newly approved Object and Scene Description (MPAI-OSD), and Portable Avatar Format (MPAI-PAF).

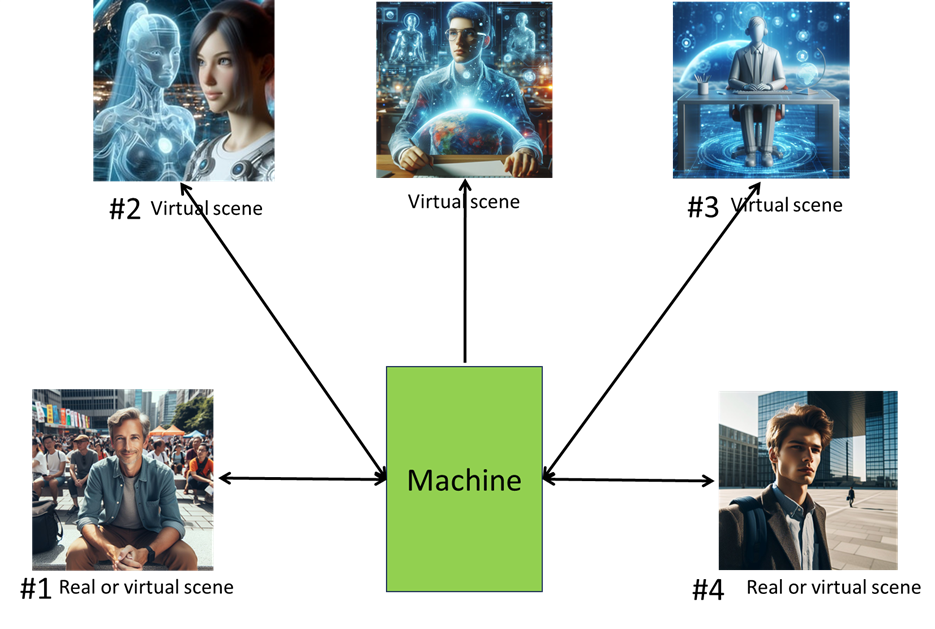

If not new technologies, what does MPAI-HMC specify then? To answer this question let’s consider Figure 1.

Figure 1: Communications supported by MPAI-HMC

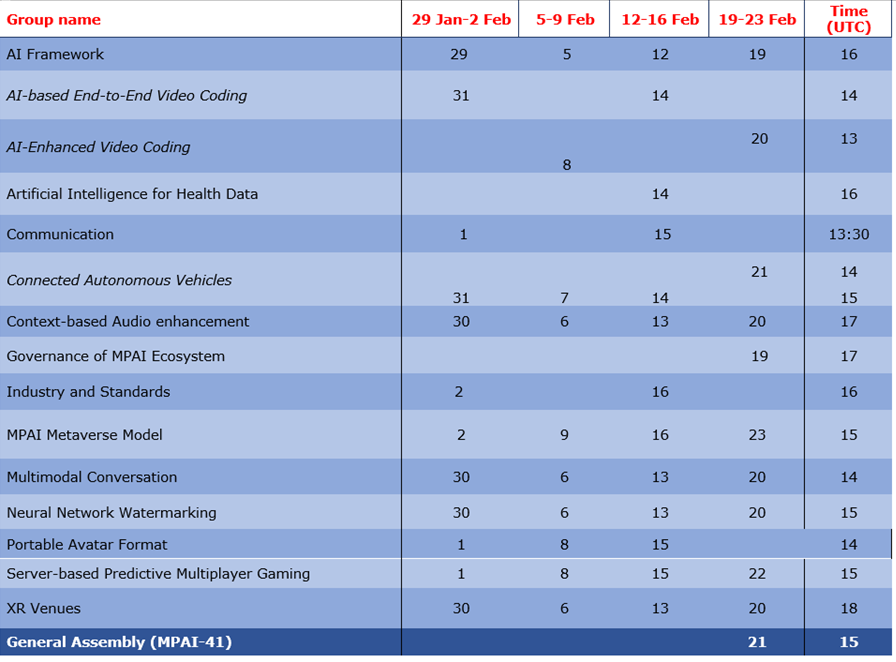

Meetings in the coming January-February meeting cycle

Meetings indicated in normal font are open to MPAI members only. Legal entities and representatives of academic departments supporting the MPAI mission and able to contribute to the development of standards for the efficient use of data can become MPAI members.

Meetings in italic are open to non-members. If you wish to attend, send an email to secretariat@mpai.community.

This newsletter serves the purpose of keeping the expanding and diverse MPAI community connected.

We are keen to hear from you, so don’t hesitate to give us your feedback.