1 Definitions

| Term | Definition |
|---|---|
| RTW-Key | The secret matrix used to project the parameters of a randomly selected layer. |
| Bit Error Rate (BER) | The number of bit errors divided by the total number of inserted bits. |

2 Description of the watermarking procedure

This subsection describes the Regularization Term Watermarking (RTW) procedure. A detailed description is provided in [8].

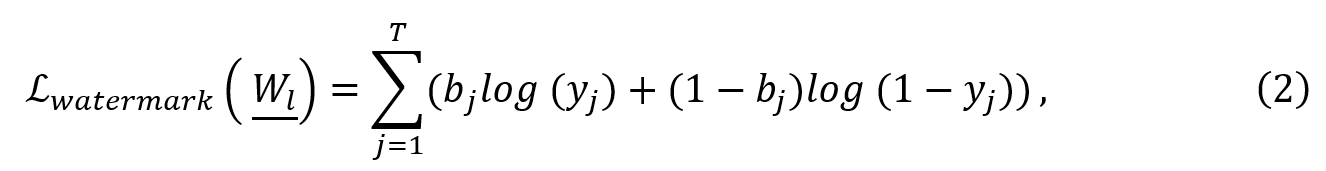

The RTW-Key is a random matrix $X \in \mathbb{R}^{M \times T}$, where $M$ is the dimension of the flattened layer $W_l$ (defined hereafter) and $T$ is the number of watermark bits. $X$ can be initialized with samples from a binary distribution (either {0, 1} or {-1, 1}) or from a normal distribution. The mark is inserted by adding a regularization term to the original cost function; this term minimizes the distance between the watermark and the sigmoid of the projection of a flattened version $W_l$ of the layer weights onto $X$.

Here, $W_l \in \mathbb{R}^{M}$ is obtained by averaging the parameters of the $l$-th layer along their first dimension. The regularization term is weighted by an adjustable parameter h.
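The insertion step can be sketched in Python with NumPy. This is a minimal sketch under stated assumptions, not the reference RTW implementation: the function names are hypothetical, the normal key is assumed to be N(0, 1), the averaging is applied along axis 0 of the array as given (the layout of real layers depends on the framework), and binary cross-entropy (BCE) is assumed as the distance between the watermark $b \in \{0,1\}^T$ and $\sigma(W_l^\top X)$. Under these assumptions the regularized objective reads $E(W) = E_0(W) + h \cdot \mathrm{BCE}\big(b, \sigma(W_l^\top X)\big)$.

```python
import numpy as np


def make_rtw_key(m, t, kind="normal", seed=0):
    """Draw a hypothetical RTW-Key X of shape (M, T).

    kind: "binary01" -> {0, 1}, "binary_pm1" -> {-1, 1},
          "normal" -> standard normal N(0, 1) (assumed parameters).
    """
    rng = np.random.default_rng(seed)
    if kind == "binary01":
        return rng.integers(0, 2, size=(m, t)).astype(np.float64)
    if kind == "binary_pm1":
        return rng.choice([-1.0, 1.0], size=(m, t))
    if kind == "normal":
        return rng.standard_normal((m, t))
    raise ValueError(f"unknown key kind: {kind}")


def flatten_layer(weights):
    """Average the layer parameters along axis 0, then flatten to a vector W_l of length M."""
    return np.asarray(weights, dtype=np.float64).mean(axis=0).reshape(-1)


def rtw_regularizer(weights, key, bits, h=0.01, eps=1e-12):
    """Return h * BCE(b, sigmoid(W_l^T X)); BCE is an assumed choice of distance."""
    w_l = flatten_layer(weights)                 # shape (M,)
    y = 1.0 / (1.0 + np.exp(-(w_l @ key)))       # sigmoid of the projection, shape (T,)
    y = np.clip(y, eps, 1.0 - eps)               # avoid log(0)
    b = np.asarray(bits, dtype=np.float64)       # target watermark bits in {0, 1}
    bce = -np.sum(b * np.log(y) + (1.0 - b) * np.log(1.0 - y))
    return h * bce
```

In an actual training loop, the same expression would have to be written with the framework's differentiable tensors so that its gradient reaches the layer weights; the NumPy version above only evaluates the term, for example as `task_loss + rtw_regularizer(layer_weights, X, b, h=0.01)`.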

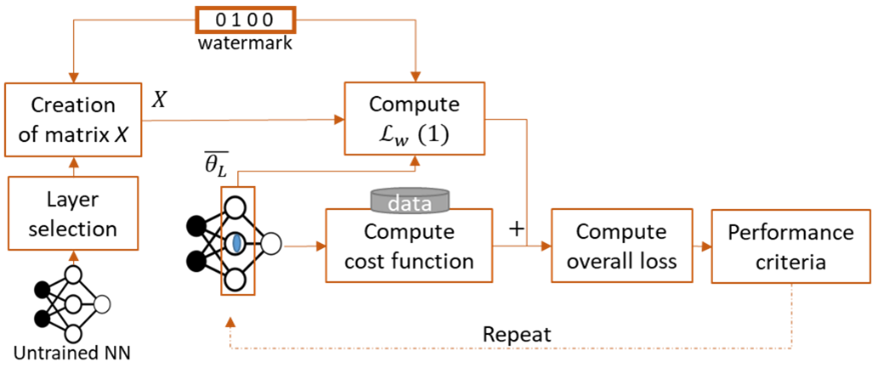

To detect the watermark, the flattened watermarked layer is projected onto the RTW-Key, the obtained values are binarized (through sigmoid and rounding operations), and the BER with respect to the inserted watermark is computed. The workflow is illustrated in Figure 6.

Figure 6. Illustration of the watermarking steps for RTW
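The detection step can be sketched with NumPy as follows. This is a minimal sketch assuming the layer has already been flattened to $W_l \in \mathbb{R}^M$ in the same way as during insertion; `extract_bits` and `bit_error_rate` are hypothetical helper names.

```python
import numpy as np


def extract_bits(w_l, key):
    """Project the flattened layer onto the RTW-Key, apply a sigmoid, and round."""
    projection = np.asarray(w_l, dtype=np.float64) @ np.asarray(key, dtype=np.float64)
    soft = 1.0 / (1.0 + np.exp(-projection))   # sigmoid, values in (0, 1)
    return np.rint(soft).astype(int)           # rounding -> bits in {0, 1}


def bit_error_rate(extracted, inserted):
    """Number of bit errors divided by the total number of inserted bits."""
    extracted = np.asarray(extracted)
    inserted = np.asarray(inserted)
    return float(np.count_nonzero(extracted != inserted)) / inserted.size
```

Since $\sigma(z) \ge 0.5$ exactly when $z \ge 0$, rounding the sigmoid is equivalent to thresholding the projection at zero (NumPy's `np.rint` maps the tie 0.5 to 0).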

3 Experimental Conditions

The performance evaluation uses the models, datasets, and application domains described in subsection 6.1, and the three evaluation types presented in subsection 6.2.

Unless stated otherwise, the h parameter is set to 0.01, as in [7].

4 Evaluation of Imperceptibility impact

Table 12 reports the imperceptibility of RTW applied to 5 watermarked NNs.

The impact of different values of h is evaluated for the image classification task, using the experimental conditions of section 6. Each row reports, for a given configuration, the impact of the tracking procedure on the performance of the neural network and the Bit Error Rate (BER) of the extracted mark. Impact is defined as:

$$\text{Impact} = \frac{\left|\text{Performance}_{uwm} - \text{Performance}_{wm}\right|}{\text{Performance}_{uwm}} \tag{1}$$

where Performance is one of Top-1 accuracy, PSNR, SSIM, or mIoU (depending on the task), as presented in subsection 6.1. Impact is multiplied by 100 to be read as a percentage. The subscripts uwm and wm denote the unwatermarked and watermarked models, respectively.
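Equation 1, scaled to a percentage, can be computed as follows. The function name is hypothetical, and the numbers in the usage note are illustrative only, not results from Table 12.

```python
def impact_percent(perf_uwm, perf_wm):
    """Impact (%) = 100 * |Performance_uwm - Performance_wm| / Performance_uwm."""
    if perf_uwm == 0:
        raise ValueError("performance of the unwatermarked model must be non-zero")
    return 100.0 * abs(perf_uwm - perf_wm) / perf_uwm
```

For example, a Top-1 accuracy going from 0.80 (unwatermarked) to 0.76 (watermarked) gives `impact_percent(0.80, 0.76)` of approximately 5.0. Because of the absolute value, an improvement of the same size would yield the same impact.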

Table 12 presents the impact of the inserted watermark on the VGG16 and ResNet8 models. For all configurations, the watermark is successfully retrieved at the 5% significance level based on the Spearman correlation.

Table 12. Imperceptibility evaluation for the image classification task.

| Model | h | Impact on Top-1 accuracy (%) | BER of extracted mark |
|---|---|---|---|
| VGG16 | 0.001 | 5 | 0.14 |
| | 0.01 | 2 | 0 |
| | 0.1 | 5 | 0 |
| | 1 | 11 | 0 |
| ResNet8 | 0.001 | 7 | 0.25 |
| | 0.01 | 5 | 0 |
| | 0.1 | 6 | 0 |
| | 1 | 7 | 0 |

5 Evaluation of Robustness impact

This subsection provides the results of the RTW robustness evaluation against Gaussian noise addition, fine-tuning, pruning, quantization, and watermark overwriting. For these experiments, h is fixed to 0.01.

Table 13 provides the robustness evaluation against Gaussian noise addition for the above-mentioned NN models. For each attack, the table reports, for both models, the computed BER and the relative error with respect to the unmodified model, computed in a similar way as in equation 1. The modification adds zero-mean Gaussian noise to all layers, with a noise ratio S ∈ {0.001, 0.005, 0.01, 0.05, 0.1, 0.5, 1} as defined in the Modification table of [2].
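The noise-addition attack can be sketched as below. This is an illustrative sketch only: the exact role of the ratio S is defined in the Modification table of [2], which is not reproduced here, so the code assumes that the noise standard deviation of each layer is S times the standard deviation of that layer's own parameters. The function name `add_gaussian_noise` is hypothetical.

```python
import numpy as np


def add_gaussian_noise(layers, s, seed=0):
    """Add zero-mean Gaussian noise to every layer.

    Assumption: noise std = s * std of the layer's parameters
    (the definition in [2] may differ).
    """
    rng = np.random.default_rng(seed)
    noisy = []
    for w in layers:
        w = np.asarray(w, dtype=np.float64)
        sigma = s * w.std()                      # per-layer noise scale (assumed)
        noisy.append(w + rng.normal(0.0, sigma, size=w.shape))
    return noisy
```

With this assumption, a constant layer (zero standard deviation) is left unchanged, and the perturbation grows linearly with S.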

The values in Table 13 show that the watermark is successfully retrieved (BER = 0) for all values of S.

Table 13. Robustness of RTW against Gaussian noise addition for the VGG16 and ResNet8 models.

| S | VGG16 BER | VGG16 relative error | ResNet8 BER | ResNet8 relative error |
|---|---|---|---|---|
| 0.001 | 0 | 0 | 0 | 0 |
| 0.005 | 0 | 0 | 0 | 0.3 |
| 0.01 | 0 | 0 | 0 | 0.1 |
| 0.05 | 0 | 0.1 | 0 | 3 |
| 0.1 | 0 | 1.7 | 0 | 19 |
| 0.5 | 0 | 67 | 0 | 240 |
| 1 | 0 | 407 | 0 | 354 |

Table 14 provides the robustness evaluation against fine-tuning for the above-mentioned NN models. For each attack, the table reports, for both models, the computed BER and the relative error with respect to the unmodified model. The modification resumes training for E ∈ [1, 10] additional epochs.

The values in Table 14 show that the watermark is successfully retrieved (BER = 0) for all values of E.

Table 14. Robustness of RTW against fine-tuning for the VGG16 and ResNet8 models.

| E | VGG16 BER | VGG16 relative error | ResNet8 BER | ResNet8 relative error |
|---|---|---|---|---|
| 1 | 0 | 0 | 0 | 0 |
| 3 | 0 | 0 | 0 | 0 |
| 5 | 0 | 0 | 0 | 0 |
| 7 | 0 | 0 | 0 | 0 |
| 10 | 0 | 0 | 0 | 0 |

Table 15 provides the robustness evaluation against quantization for the above-mentioned NN models. For each attack, the table reports, for both models, the computed BER and the relative error with respect to the unmodified model. The modification compresses the model by reducing the number of bits B ∈ [2, 16] used to represent the floating-point parameters.
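A quantization attack of this kind can be sketched as below. The sketch assumes per-tensor uniform min-max quantization to $2^B$ levels followed by dequantization; the scheme actually used in the experiments (and specified in [2]) may differ, and `quantize_uniform` is a hypothetical name.

```python
import numpy as np


def quantize_uniform(w, bits):
    """Uniformly quantize a tensor to 2**bits levels over its [min, max] range, then dequantize."""
    w = np.asarray(w, dtype=np.float64)
    lo, hi = w.min(), w.max()
    if hi == lo:                       # constant tensor: nothing to quantize
        return w.copy()
    levels = 2 ** bits - 1             # number of steps between lo and hi
    step = (hi - lo) / levels
    q = np.rint((w - lo) / step)       # integer codes in [0, levels]
    return lo + q * step               # back to floating point
```

The maximum rounding error per parameter is half a step, i.e. $(\max - \min) / (2(2^B - 1))$, which is why the error grows sharply at B = 4 and B = 2.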

The values in Table 15 show that the watermark is successfully retrieved (BER = 0) for all values of B.

Table 15. Robustness of RTW against quantization for the VGG16 and ResNet8 models.

| B | VGG16 BER | VGG16 relative error | ResNet8 BER | ResNet8 relative error |
|---|---|---|---|---|
| 16 | 0 | 0.4 | 0 | 0 |
| 8 | 0 | 0.7 | 0 | 0 |
| 6 | 0 | 1.3 | 0 | 1.7 |
| 4 | 0 | 9.4 | 0 | 13 |
| 2 | 0 | 765 | 0 | 345 |

Table 16 provides the robustness evaluation against magnitude pruning for the above-mentioned NN models. For each attack, the table reports, for both models, the computed BER and the relative error with respect to the unmodified model. The modification sets to zero a percentage P ∈ [10, 90] of the parameters with the smallest absolute values, as described in the Modification table of [2].
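Magnitude pruning as described above can be sketched per tensor as follows. Whether the pruning in the experiments is applied per layer or globally across the model is not stated here, so per-tensor pruning is an assumption; `magnitude_prune` is a hypothetical name.

```python
import numpy as np


def magnitude_prune(w, p):
    """Set to zero the p percent of entries of w with the smallest absolute values."""
    w = np.asarray(w, dtype=np.float64)
    k = int(round(w.size * p / 100.0))    # number of entries to zero
    pruned = w.copy().reshape(-1)         # flat working copy; w is left untouched
    if k > 0:
        idx = np.argsort(np.abs(pruned), kind="stable")[:k]
        pruned[idx] = 0.0
    return pruned.reshape(w.shape)
```

For instance, pruning 50% of a 2×3 tensor zeroes the three entries of smallest magnitude and keeps the shape unchanged.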

The values in Table 16 show that the watermark is successfully retrieved (BER = 0) for all values of P.

Table 16. Robustness of RTW against magnitude pruning for the VGG16 and ResNet8 models.

| P | VGG16 BER | VGG16 relative error | ResNet8 BER | ResNet8 relative error |
|---|---|---|---|---|
| 10 | 0 | 0 | 0 | 0 |
| 20 | 0 | 0.5 | 0 | 3.1 |
| 50 | 0 | 10.9 | 0 | 73 |
| 80 | 0 | 476 | 0 | 335 |
| 90 | 0 | 763 | 0 | 372 |

6 Evaluation of Computational cost

For the injection phase, the RTW method does not impact the memory footprint. However, the insertion is performed during the training of the model:

- On average, the mark is inserted after 500 batch iterations.

- On average, the training time increases by 16.67%.

The detection phase consists of projecting the flattened parameters of the watermarked layer onto the RTW-Key and rounding the resulting values after applying the sigmoid.