1 Introduction

Technical Specification: Pursuing Goals in metaverse (MPAI-PGM) – Autonomous User Architecture (PGM-AUA) specifies the architecture, functions, and interfaces by which an Autonomous User (A-User) operating in a metaverse instance (M-Instance) interacts with another User in the same or another M-Instance. An A-User is a type of Process specified by Technical Specification: MPAI Metaverse Model (MPAI-MMM) – Technologies (MMM‑TEC). It operates under the responsibility of a human and acts as an operational mediator between that human and the M‑Instance, with considerable or complete autonomy. The other User may also be an A-User, or it may be under the direct control of a human, in which case it is called a Human-User (H-User).

The A-User performs the following typical functions:

- Captures text and audio-visual information originated by, or surrounding, the User it interacts with.

- Extracts the User’s Entity State, i.e., a snapshot of the User’s cognitive, emotional, and interactional state.

- Produces an appropriate multimodal response, rendered as a Speaking Avatar.

- Performs Actions as specified by the MMM-TEC standard.

The A‑User exhibits a degree of autonomy determined by the extent of human intervention.

Internally, an A‑User:

- Perceives multimodal signals produced by:

  - The responsible human in the universe, and

  - A User in the M‑Instance, which may be:

    - Another A‑User, or

    - An H‑User, i.e., a Process directly operated by a human.

- Interprets the human’s or User’s Goal through knowledge‑oriented prompting and multimodal clarification.

- Validates the interpreted Intention against applicable M‑Instance Rules and the A‑User’s Rights.

- Constructs and executes a plan determining the Actions or Process Actions to be performed in the M‑Instance.

- Monitors logical, contextual, or governance conflicts and either resolves them or escalates them to the responsible human when they cannot be resolved autonomously.

Externally, an A‑User performs Actions and Process Actions in an M‑Instance as specified by MMM‑TEC.

2 The A‑User Architecture Reference Model

The A-User:

- Operates as an instruction‑driven system composed of a plurality of interacting sub‑processes, orchestrated by a central controller.

- Implements its sub-processes as AI Modules (AIM) organised in an AI Workflow (AIW) executed in an AI Framework (AIF) according to Technical Specification: AI Framework (MPAI-AIF) V3.0.

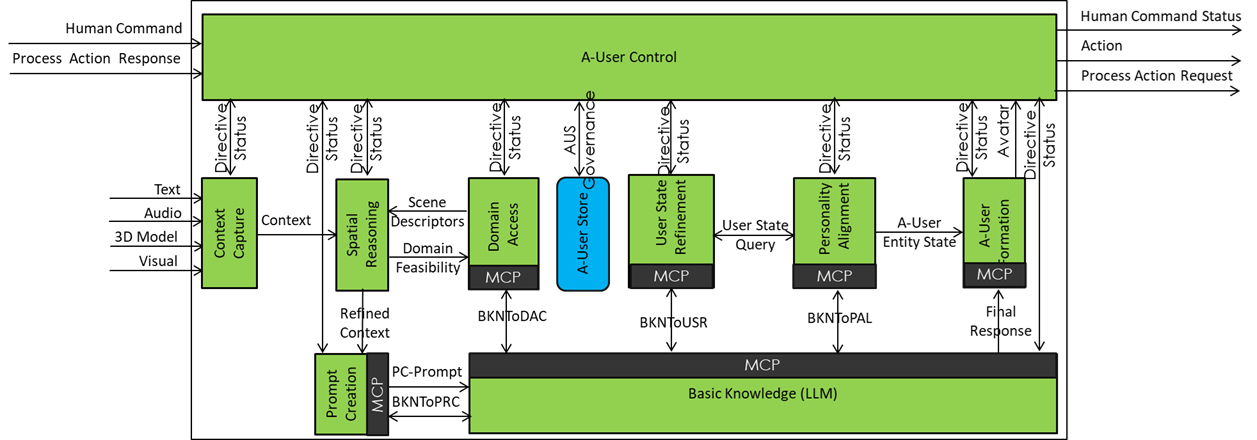

Figure 1 provides the Reference Model of the A-User Architecture.

Figure 1 – Autonomous User Architecture

3 Types of AI Modules

The A-User’s AIMs belong to the following classes:

(1) Control AIMs

- A-User Control (AUC)

  - Governs the runtime operation of the A‑User.

  - Issues Directive messages to AIMs.

  - Receives Status Messages from AIMs.

(2) Perception AIMs

- Context Capture (CXC)

  - Captures media data (Text, Audio, Visual, and 3D Model) from an M-Location.

  - Produces snapshots of the Audio and Visual Scene Descriptors (ASD0 and VSD0).

  - Extracts the initial User Entity State (UES0) from ASD0 and VSD0.

  - Multiplexes ASD0, VSD0, and UES0 into Context sent to Audio Spatial Reasoning (ASR) and Visual Spatial Reasoning (VSR).

- Spatial and User Reasoning (SUR)

  - Enhances the Audio Scene Descriptors of the scene, producing ASD1.

  - Performs segmentation and speech recognition.

  - Enhances the Visual Scene Descriptors of the scene, producing VSD1.

  - Performs object, region, and motion analysis to extract spatial and geometric relationships.

  - Enhances the User Entity State, producing UES1.

  - Gets Domain information from DAC.

(3) Interpretation AIMs

- Prompt Creation (PRC)

  - Computes the Raw Goal Expression (RGE) from UES0 and the Context Perceptual Semantics (CPS) from ASD1 and VSD1, and assembles them into the PRC Prompt.

  - Provides the PRC Prompt as an interpreted input to Basic Knowledge (BKN).

- Domain Access (DAC)

  - Provides Domain semantics to ASR and VSR for grounding, validation, and feasibility assessment.

- Basic Knowledge (BKN)

  - Performs semantic interpretation and reasoning by engaging PRC, DAC, User State Refinement (USR), and Personality Alignment (PAL) through MCP‑based semantic interactions under AUC governance.

  - Provides an interpreted, execution‑ready outcome (Final Response) to A‑User Formation (AUF).

(4) Semantic Provider AIMs

- Prompt Creation (PRC)

  - Initiates and participates in MCP‑based semantic interactions when instructed by AUC.

  - Provides assembled and aligned semantic artefacts (e.g. PRC Prompt components) to BKN for reasoning.

- Domain Access (DAC)

  - Supplies domain constraints, object classes, relations, and affordances.

- User State Refinement (USR)

  - Constructs an authoritative but ephemeral User Entity State by integrating evidence from the A-User Store (AUS) and M-Instance services.

  - Supplies the User Entity State to BKN for reasoning and to PAL for aligning the A-User's Personality with the User's.

- Personality Alignment (PAL)

  - Constructs an ephemeral A‑User Entity State by aligning the A-User's Personality with the User Entity State and the current context.

  - Provides the A‑User Entity State to BKN and A‑User Formation (AUF) to govern behaviour and expression.

(5) Execution AIMs

- A‑User Formation (AUF)

  - Realises communicative intent and the multimodal output of the A‑User's Persona in accordance with the A‑User Entity State.

  - Produces speech, gesture, facial expression, gaze, and body motion as required by the Final Response.

  - Executes behaviour under AUC governance without performing semantic interpretation or reasoning.

4 Interaction Paradigms between AIMs

The A‑User Architecture AIMs exchange semantic information using two interaction paradigms. These differ in determinism, statefulness, and the need for clarification or iterative refinement. The selection of an interaction paradigm is constrained by the execution mode (reactive or deliberative) under which the A‑User is operating, as defined in this chapter.

4.1 Directed Semantic Query Interaction

Directed Semantic Query Interaction (DSQI) is a lightweight, deterministic interaction paradigm used for single‑shot semantic grounding performed by an AIM by issuing a structured, typed request and receiving a structured, typed response. Each interaction is self‑contained and stateless, with no memory of previous exchanges and no clarification loops involving follow‑up questions or iterative refinement of meaning. DSQI is used when:

- The requesting AIM needs semantic grounding or validation.

- Ambiguity resolution is limited and local.

- The result does not require conversational refinement.

Typical examples include:

- ASR querying DAC for sound‑source classification or model selection,

- VSR querying DAC for object category validation or expected behaviour,

- Perception AIMs retrieving domain ontology constraints to guide or validate perceptual interpretation.
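The stateless, single-shot character of DSQI can be sketched as a pure function over typed, immutable messages. This is a minimal sketch under stated assumptions: `DomainQuery`, `DomainAnswer`, `dac_directed_query`, and the toy `ONTOLOGY` are hypothetical names standing in for DAC's Directed Semantic Query Interface, which this specification does not define at code level.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class DomainQuery:
    """Hypothetical typed DSQI request (not defined by this specification)."""
    requester: str  # requesting AIM, e.g. "VSR"
    category: str   # kind of grounding requested, e.g. "object_category"
    term: str       # perceptual label to ground, e.g. "mug"


@dataclass(frozen=True)
class DomainAnswer:
    """Hypothetical typed DSQI response."""
    term: str
    domain_class: Optional[str]
    confidence: float


# Toy ontology standing in for DAC's Domain knowledge (illustrative values only).
ONTOLOGY: Dict[str, Tuple[str, float]] = {
    "mug": ("KitchenContainer", 0.92),
    "kettle": ("KitchenAppliance", 0.88),
}


def dac_directed_query(query: DomainQuery) -> DomainAnswer:
    """Answer one self-contained query: no session, no memory, no follow-up turns."""
    domain_class, confidence = ONTOLOGY.get(query.term, (None, 0.0))
    return DomainAnswer(query.term, domain_class, confidence)


answer = dac_directed_query(DomainQuery("VSR", "object_category", "mug"))
```

Because the function reads nothing beyond its argument and the fixed ontology, repeating the same query always yields the same answer, which is the determinism DSQI relies on.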

4.2 Dialogic Semantic Interaction (MCP)

Dialogic Semantic Interaction (DSI) uses the Multi Call Protocol (MCP) to support semantic exchanges that require clarification, negotiation, or incremental refinement across multiple turns. DSI supports session‑level continuity, whereby intermediate results, assumptions, and bindings are preserved and reused across successive exchanges within the same reasoning session. DSI is used when:

- Semantics must be negotiated rather than retrieved.

- Interpretations depend on context evolution.

- Multiple semantic providers must be consulted through multi‑turn semantic exchanges.

Typical examples include BKN querying:

- PRC for aligned perceptual and User‑related semantics.

- DAC for Domain semantics.

- USR and PAL for User Entity State and A‑User Entity State information.
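Session-level continuity in DSI can be illustrated with a small session object that preserves bindings across turns, so that a later provider can reuse what an earlier one resolved. This is a sketch, assuming hypothetical names (`DialogicSession`, `exchange`, toy `prc_provider`/`dac_provider`). It does not reproduce the MCP message format.

```python
from typing import Any, Callable, Dict, List, Tuple

# A semantic provider receives the question plus the bindings accumulated so far.
Provider = Callable[[str, Dict[str, Any]], Dict[str, Any]]


class DialogicSession:
    """Hypothetical DSI session: results of earlier turns stay bound for later turns."""

    def __init__(self, session_id: str) -> None:
        self.session_id = session_id
        self.bindings: Dict[str, Any] = {}
        self.history: List[Tuple[str, str, Dict[str, Any]]] = []

    def exchange(self, aim: str, question: str, provider: Provider) -> Dict[str, Any]:
        # Give the provider a copy so it cannot mutate session state directly.
        answer = provider(question, dict(self.bindings))
        self.bindings.update(answer)  # preserve intermediate results for reuse
        self.history.append((aim, question, answer))
        return answer


def prc_provider(question: str, bindings: Dict[str, Any]) -> Dict[str, Any]:
    # Toy reference resolution: "that one" is bound to a perceived object.
    return {"referent": "mug_01"} if question == "resolve: that one" else {}


def dac_provider(question: str, bindings: Dict[str, Any]) -> Dict[str, Any]:
    # Reuses the referent bound in an earlier turn with PRC.
    if question == "affordances" and bindings.get("referent") == "mug_01":
        return {"affordances": ["grasp", "fill"]}
    return {}


session = DialogicSession("session-001")
session.exchange("PRC", "resolve: that one", prc_provider)
result = session.exchange("DAC", "affordances", dac_provider)
```

The DAC turn succeeds only because the PRC turn's binding survived in the session, which is the property that distinguishes DSI from DSQI.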

4.3 Reactive vs Deliberative Execution

To ensure high responsiveness while preserving semantic richness, the A‑User Architecture distinguishes between reactive execution and deliberative execution. The execution modes determine whether semantic interactions are limited to previously produced artefacts or may involve dialogic semantic interactions requiring session‑level continuity.

Reactive execution supports low‑latency, time‑critical A‑User behaviour. It enables immediate A‑User responses based exclusively on previously produced semantic artefacts, including ASD1 and VSD1 (perceptual descriptors), the User Entity State (UES), and the derived A‑User Entity State (AES). Reactive execution:

- Operates without initiating MCP interactions.

- Relies on cached or last‑known semantic artefacts.

- Enables rapid conversational turn‑taking, micro‑behaviours (e.g. gaze, posture, acknowledgements), and provisional responses.

- Is governed by AUC and executed by AUF without blocking on semantic clarification.

Reactive execution is the default mode for time‑critical A‑User behaviour.

Deliberative execution supports semantic interpretation, integration, and refinement when reasoning is required:

- Uses MCP‑based dialogic semantic interactions.

- Involves multi‑step reasoning and clarification across PRC, DAC, USR, and PAL, orchestrated by BKN.

- May incur additional latency because semantic interpretation requires multiple dependent reasoning steps whose intermediate results must be accumulated and reused within the same reasoning session.

- Produces refined goals, updates the User Entity State and the derived A‑User Entity State, and constructs plans.

The ASD1 and VSD1 perceptual descriptors are not modified during deliberative execution.

Deliberative execution is initiated by AUC through the issuance of Instructions and proceeds independently of the real‑time performance of M-Instance Actions, which remains supported by reactive execution.
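The split between the two execution modes can be sketched as follows: reactive behaviour is computed immediately from cached artefacts, while events needing interpretation are queued for deliberation, which may update UES and AES but carries ASD1 and VSD1 over unchanged. This is a minimal sketch; `ArtefactCache`, `reactive_step`, `deliberative_step`, `handle_event`, and the `needs_interpretation` flag are hypothetical and not part of this specification.

```python
from dataclasses import dataclass, field, replace
from typing import Dict, List


@dataclass(frozen=True)
class ArtefactCache:
    """Last-known semantic artefacts available to reactive execution."""
    asd1: Dict[str, str] = field(default_factory=dict)
    vsd1: Dict[str, str] = field(default_factory=dict)
    ues: Dict[str, str] = field(default_factory=dict)
    aes: Dict[str, str] = field(default_factory=dict)


def reactive_step(cache: ArtefactCache) -> str:
    """Choose a micro-behaviour from cached artefacts only (no MCP, no blocking)."""
    if cache.ues.get("interactional") == "speaking":
        return "gaze_at_user"
    return "acknowledge"


def deliberative_step(cache: ArtefactCache, refined_ues: Dict[str, str],
                      refined_aes: Dict[str, str]) -> ArtefactCache:
    """Deliberation may update UES and AES; ASD1 and VSD1 are carried over unchanged."""
    return replace(cache, ues=refined_ues, aes=refined_aes)


def handle_event(event: Dict[str, str], cache: ArtefactCache,
                 deliberation_queue: List[Dict[str, str]]) -> str:
    """Hypothetical AUC policy: always respond reactively; queue deliberation if needed."""
    if event.get("needs_interpretation") == "yes":
        deliberation_queue.append(event)  # handled later through MCP-based DSI
    return reactive_step(cache)           # immediate, time-critical behaviour
```

Returning the reactive behaviour regardless of whether deliberation was queued mirrors the requirement that real-time Actions never wait on semantic clarification.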

5 A‑User Control (PGM-AUC)

The function of AUC is to obtain from the A-User's AIMs a Persona that shows an appropriate Entity State and utters Speech congruent with the interaction the A-User is having with the User, in accordance with human authorisation, M‑Instance Rules, and the A‑User's Rights. To achieve this, AUC orchestrates the runtime operation of the A‑User by issuing to each AIM a Directive that conveys the component of an Instruction relevant to that AIM, and by monitoring execution through the Status messages it receives.

The A‑User perceives and semantically interprets the interactions between its responsible human and itself, while AUC mediates the resulting interpreted outcomes for execution, oversight, and orchestration, independently of the user interface employed by the human.

Human-AUC interactions may include:

- Initiation or termination of A‑User activity.

- Authorisation or denial of specific actions.

- Escalation handling when the A-User cannot resolve conflicts.

- Adjustment of autonomy level or execution constraints.

AUC controls the A‑User lifecycle by means of eight Instruction Types:

- Perception and Environment Capture (PEC): To configure the A-User's perceptual system for sensing the human in the universe, the User in the M-Instance, or the M-Location of interest.

- Goal Acquisition (GLA): To capture, segment, and validate multimodal expressions of the human in the universe and/or the User in the M-Instance to produce a Raw Goal Expression (RGE).

- Prompting and Knowledge Queries (PKQ): To assemble and align the perceptual descriptors (ASD1, VSD1) and the User Entity State into Context Perceptual Semantics, and to perform prompting and clarification that semantically ground all processing steps after the Raw Goal Expression has been established.

- Goal and Intent Interpretation (GII): To normalise, ground, and interpret the human's or User's Goal using Domain information and User information.

- Policy, Rights, and Feasibility (PRF): To validate the interpreted Goal against:

  - Governance (M-Instance Rules and A-User's Rights),

  - User Entity State constraints, and

  - Domain feasibility conditions.

- Plan Construction and Execution (PCE): To construct an action plan and orchestrate its execution.

- Conflict Management and Escalation (CME): To detect inconsistencies or conflicts and either resolve them or escalate them as appropriate.

- Avatar Formation and Rendering (AFR): To produce speech and avatar‑based multimodal output.

Table 1 identifies the AIMs that receive a Directive when AUC issues an Instruction. An X indicates that the AIM in that row receives a Directive for the Instruction in that column.

Table 1 – Instruction and affected AIMs

| AIM | PEC | GLA | PKQ | GII | PRF | PCE | CME | AFR |
|-----|-----|-----|-----|-----|-----|-----|-----|-----|
| CXC | X | – | – | – | – | – | – | – |
| ASR | – | X | – | – | X | X | – | – |
| VSR | – | X | – | – | X | X | – | – |
| PRC | – | – | X | – | – | – | – |  |
| BKN | – | – | – | X | X | X | X | – |
| DAC | – | X | X | X | X | – | – | – |
| USR | – | – | X | X | X | X | X | – |
| PAL | – | – | X | X | X | X | – | X |
| AUF | – | – | – | – | – | X | X | X |

Note 1: Participation of ASR and VSR in PRF and PCE reflects spatial and environmental feasibility assessment and monitoring, not semantic reasoning.

Note 2: Directive-Status interactions between AUC and AIMs do not use MCP, which is reserved exclusively for semantic interactions.
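Table 1 can be read column by column as a mapping from Instruction Type to the AIMs that receive a Directive. The sketch below encodes that mapping and fans an Instruction out into per-AIM Directives, tracking completion through Status messages. The `Directive` and `Status` fields, the per-AIM `payloads` dictionary, and the `state` values are hypothetical. Consistent with Note 2, these are plain messages, not MCP exchanges.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List

# Instruction Types and the abbreviations used as Table 1 column headings.
INSTRUCTION_TYPES: Dict[str, str] = {
    "PEC": "Perception and Environment Capture",
    "GLA": "Goal Acquisition",
    "PKQ": "Prompting and Knowledge Queries",
    "GII": "Goal and Intent Interpretation",
    "PRF": "Policy, Rights, and Feasibility",
    "PCE": "Plan Construction and Execution",
    "CME": "Conflict Management and Escalation",
    "AFR": "Avatar Formation and Rendering",
}

# Table 1 read column by column: Instruction -> AIMs that receive a Directive.
DIRECTIVE_RECIPIENTS: Dict[str, FrozenSet[str]] = {
    "PEC": frozenset({"CXC"}),
    "GLA": frozenset({"ASR", "VSR", "DAC"}),
    "PKQ": frozenset({"PRC", "DAC", "USR", "PAL"}),
    "GII": frozenset({"BKN", "DAC", "USR", "PAL"}),
    "PRF": frozenset({"ASR", "VSR", "BKN", "DAC", "USR", "PAL"}),
    "PCE": frozenset({"ASR", "VSR", "BKN", "USR", "PAL", "AUF"}),
    "CME": frozenset({"BKN", "USR", "AUF"}),
    "AFR": frozenset({"PAL", "AUF"}),
}


@dataclass(frozen=True)
class Directive:
    """Hypothetical Directive message from AUC to one AIM."""
    instruction: str
    target_aim: str
    payload: str  # the component of the Instruction relevant to this AIM


@dataclass(frozen=True)
class Status:
    """Hypothetical Status message from an AIM to AUC."""
    source_aim: str
    instruction: str
    state: str  # e.g. "accepted", "completed", "failed"


def issue_instruction(instruction: str, payloads: Dict[str, str]) -> List[Directive]:
    """Fan one Instruction out into one Directive per affected AIM."""
    if instruction not in DIRECTIVE_RECIPIENTS:
        raise ValueError(f"unknown Instruction Type: {instruction}")
    return [Directive(instruction, aim, payloads.get(aim, ""))
            for aim in sorted(DIRECTIVE_RECIPIENTS[instruction])]


def pending_aims(directives: List[Directive], statuses: List[Status]) -> List[str]:
    """AIMs that have not yet reported completion of their Directive."""
    done = {s.source_aim for s in statuses if s.state == "completed"}
    return [d.target_aim for d in directives if d.target_aim not in done]


cme = issue_instruction("CME", {"BKN": "re-check plan"})
```

Sorting the recipients only makes the output order deterministic; the specification does not prescribe an order in which Directives are issued.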

6 Context Capture (CXC)

The function of CXC is to capture and extract the context through a series of operations:

- Capture media data (Text, Audio, Visual, 3D Model) produced by the human in the universe or by the User in the M‑Instance.

- Produce initial Audio Scene Descriptors (ASD0) and Visual Scene Descriptors (VSD0) representing the captured M‑Location prior to reasoning and Domain enhancement.

- Extract the initial User Entity State (UES0) from ASD0 and VSD0.

- Produce Context by combining Scene Descriptors and User Entity State.

- Pass Context on to Audio Spatial Reasoning (ASR), Visual Spatial Reasoning (VSR), and Prompt Creation (PRC).
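The last three CXC steps can be sketched as building one immutable Context from the scene descriptors and the extracted UES0, then handing the same Context to each downstream AIM. This is a sketch under assumptions: media capture and descriptor production are not shown, descriptors are reduced to flat string dictionaries, and `Context`, `extract_ues0`, `capture_context`, and `dispatch` are hypothetical names with a toy extraction heuristic.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Context:
    """Hypothetical multiplex of the CXC outputs."""
    asd0: Dict[str, str]
    vsd0: Dict[str, str]
    ues0: Dict[str, str]


def extract_ues0(asd0: Dict[str, str], vsd0: Dict[str, str]) -> Dict[str, str]:
    """Toy extraction of initial User Entity State cues from the scene descriptors."""
    ues0: Dict[str, str] = {}
    if asd0.get("user_01") == "speech":
        ues0["interactional"] = "speaking"
    if vsd0.get("user_01_face") == "smiling":
        ues0["emotional"] = "positive"
    return ues0


def capture_context(asd0: Dict[str, str], vsd0: Dict[str, str]) -> Context:
    """Combine Scene Descriptors and the extracted UES0 into one Context."""
    return Context(asd0=asd0, vsd0=vsd0, ues0=extract_ues0(asd0, vsd0))


def dispatch(context: Context,
             recipients: Iterable[str] = ("ASR", "VSR", "PRC")) -> Dict[str, Context]:
    """Pass the same Context to each downstream AIM named in this section."""
    return {aim: context for aim in recipients}


ctx = capture_context({"user_01": "speech"}, {"user_01_face": "smiling"})
```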

7 Spatial and User Reasoning (SUR)

The function of SUR is to:

- Receive:

  - Context from Context Capture (CXC).

  - The Spatial and User Directive from A-User Control (AUC).

- Feed the received Context into the processing of:

  - The Audio Scene Descriptors and the audio component (speech and other User‑generated sounds) of the User Entity State, and

  - The Visual Scene Descriptors and the visual component of the User Entity State.

- Refine:

  - The Audio Scene Descriptors and the audio‑related aspects of the User Entity State by querying Domain Access (DAC) to obtain domain semantics relevant to:

    - The spatial environment (e.g. office, workshop, kitchen).

    - Speech‑related User behaviour (e.g. conversational norms, interaction roles).

  - The Visual Scene Descriptors and the visual‑related aspects of the User Entity State by querying Domain Access (DAC) to obtain domain semantics relevant to:

    - The spatial interpretation of objects and environments.

    - Visual User behaviour (e.g. gesture meaning, culturally dependent movements).

- Produce a Refined Context including:

  - ASD1, an enhanced and semantically grounded version of ASD0.

  - VSD1, an enhanced and semantically grounded version of VSD0.

  - UES1, an enhanced and semantically grounded version of UES0.

- Send a Spatial and User Status, reporting the outcome and scope of reasoning, to A‑User Control.

- Output the Refined Context as a semantically grounded and user‑aware situational representation that coherently interprets and aligns:

  - Spatial audio and visual information,

  - Audio‑ and visual‑derived components of the User Entity State, and

  - Domain‑specific semantics.
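The refinement step can be sketched as grounding each descriptor label through a DAC lookup, producing new ASD1, VSD1, and UES1 values without mutating ASD0, VSD0, or UES0. This is a minimal sketch. The lookup signature, the toy `DOMAIN` table, the gesture rule, and the `grounded_items` Status field are assumptions made for illustration.

```python
from typing import Callable, Dict, Tuple

# A DAC lookup returns Domain semantics for a label (hypothetical signature).
DomainLookup = Callable[[str], Dict[str, str]]
Grounded = Dict[str, Dict[str, str]]

# Toy Domain knowledge standing in for DAC (illustrative values only).
DOMAIN: Dict[str, Dict[str, str]] = {
    "kettle_hiss": {"source_class": "KitchenAppliance"},
    "mug": {"object_class": "KitchenContainer"},
    "thumbs_up": {"meaning": "approval"},
}


def dac_lookup(label: str) -> Dict[str, str]:
    return DOMAIN.get(label, {})


def ground(descriptors: Dict[str, str], lookup: DomainLookup) -> Grounded:
    """Return a grounded copy (ASD0 -> ASD1, VSD0 -> VSD1); the input is not mutated."""
    return {key: {"label": label, **lookup(label)} for key, label in descriptors.items()}


def spatial_user_reasoning(
    asd0: Dict[str, str], vsd0: Dict[str, str], ues0: Dict[str, str],
    lookup: DomainLookup,
) -> Tuple[Tuple[Grounded, Grounded, Dict[str, str]], Dict[str, int]]:
    asd1 = ground(asd0, lookup)
    vsd1 = ground(vsd0, lookup)
    ues1 = dict(ues0)
    # Hypothetical rule: interpret the User's gesture through Domain semantics.
    gesture = vsd0.get("user_gesture")
    if gesture is not None:
        ues1["gesture_meaning"] = lookup(gesture).get("meaning", "unknown")
    status = {"grounded_items": len(asd1) + len(vsd1)}  # Spatial and User Status
    return (asd1, vsd1, ues1), status


asd0 = {"s1": "kettle_hiss"}
vsd0 = {"o1": "mug", "user_gesture": "thumbs_up"}
(asd1, vsd1, ues1), status = spatial_user_reasoning(
    asd0, vsd0, {"interactional": "speaking"}, dac_lookup)
```

Passing the lookup as a parameter keeps the reasoning code independent of how DAC is reached, for example through its Directed Semantic Query Interface.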

8 Prompt Creation (PRC)

The function of PRC is to assemble, bind, and expose M-Location and User‑related semantics to BKN by structuring ASD1, VSD1, and UES0 into a prompt suitable for reasoning. PRC acts as the authoritative access point for assembled M-Location and User semantics, without performing perception or domain reasoning itself. PRC:

- Produces the Raw Goal Expression by assembling perception and interaction outputs from ASR, VSR, and CXC, expressing the User’s current objective in a structured, pre‑semantic form.

- Produces the Context Perceptual Semantics by assembling and binding M-Location and User information derived from ASD1 and VSD1.

- Constructs the PRC Prompt, including:

  - The Raw Goal Expression.

  - The Context Perceptual Semantics.

  - References to relevant M-Location and User descriptors stored in the AUS.

  - A Session ID enabling BKN to retrieve Context snapshots, User Entity State and A-User Entity State records, and Interaction History.

- Is activated by any of the following:

  - A Prompt Creation request issued by AUC to support reasoning or decision making.

  - A request from BKN to initialise or update a reasoning session.

  - A clarification or follow‑up request from BKN requiring additional M-Location or User-related semantics.

  - A change in available perception outputs that requires the perceptual context to be re‑assembled.

- Initiates and mediates MCP sessions as required by BKN. MCP enables structured, typed semantic queries and responses, with session continuity for multi‑stage clarification exchanges.

- Provides additional perceptual and user‑related semantics to BKN during clarification loops.

- Responds to BKN queries by refining and binding multimodal information and resolving references using existing descriptors.

PRC acts as an Interpretation AIM and a Perceptual Semantic Provider AIM. It is the sole AIM responsible for assembling and binding M-Location and User semantics into a spatially and referentially coherent context for subsequent reasoning by BKN.
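The PRC Prompt components listed above can be sketched as one immutable record. The Context Perceptual Semantics are built by binding audio and visual descriptors that share an entity identifier. This is a sketch only: descriptors are simplified to flat string dictionaries, the field names are hypothetical, and the `aus://` reference scheme is an invented addressing convention, not one defined by AUS.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class PRCPrompt:
    """Hypothetical layout of the PRC Prompt components."""
    raw_goal_expression: Dict[str, str]
    context_perceptual_semantics: Dict[str, Dict[str, str]]
    aus_references: List[str]
    session_id: str


def raw_goal_expression(utterance: str, ues0: Dict[str, str]) -> Dict[str, str]:
    """Pre-semantic record of what the User expressed; nothing is interpreted here."""
    return {"utterance": utterance, "user_state": ues0.get("interactional", "unknown")}


def context_perceptual_semantics(asd1: Dict[str, str],
                                 vsd1: Dict[str, str]) -> Dict[str, Dict[str, str]]:
    """Bind audio and visual descriptors that refer to the same entity identifier."""
    cps: Dict[str, Dict[str, str]] = {}
    for entity in sorted(set(asd1) | set(vsd1)):
        cps[entity] = {}
        if entity in asd1:
            cps[entity]["audio"] = asd1[entity]
        if entity in vsd1:
            cps[entity]["visual"] = vsd1[entity]
    return cps


def create_prompt(utterance: str, ues0: Dict[str, str], asd1: Dict[str, str],
                  vsd1: Dict[str, str], session_id: str) -> PRCPrompt:
    # "aus://" references are an invented addressing scheme for illustration.
    refs = [f"aus://{session_id}/{name}" for name in ("ASD1", "VSD1", "UES0")]
    return PRCPrompt(raw_goal_expression(utterance, ues0),
                     context_perceptual_semantics(asd1, vsd1), refs, session_id)


prompt = create_prompt("pass me that one", {"interactional": "speaking"},
                       {"user_01": "speech_source"},
                       {"user_01": "standing", "mug_01": "on_table"}, "session-001")
```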

9 Basic Knowledge (BKN)

During a Goal and Intent Interpretation Instruction, BKN executes a multi‑loop semantic integration pipeline:

- Receives the PRC Prompt from PRC, including the Raw Goal Expression and the Context Perceptual Semantics.

- Orchestrates semantic interpretation of the Raw Goal Expression within a spatially coherent environment and User context.

- Integrates semantics from multiple sources through the following loops:

Loop 1 – Spatial and User Semantic Grounding (PRC)

BKN queries PRC via MCP to obtain the assembled and aligned M-Location and User‑related semantics relevant to the current reasoning task: the Raw Goal Expression and the Context Perceptual Semantics. BKN also retrieves supporting artefacts from AUS, including M-Location snapshots, User Entity State snapshots, A‑User Entity State records, and Interaction History.

Loop 1 names the Context Perceptual Semantics explicitly, keeps PRC as the semantic authority for M-Location and User semantics, and keeps AUS as storage only, without any interpretive role.

Loop 2 – Domain Semantics (DAC)

BKN queries DAC via MCP to obtain domain grounding required to interpret goals, entities, and relations.

Loop 3 – User Semantics (USR)

BKN queries USR via MCP for session‑level user semantic cues. If authorised by AUC, USR integrates AUS records and User information provided by the hosting M‑Instance (such as Rights or declared preferences) to derive the best available User Entity State.

Loop 4 – Personality Alignment (PAL)

BKN queries PAL via MCP to align reasoning with the active Personality. PAL may directly consult USR to obtain User‑related semantics required for Personality alignment.

Loop 5 – Personality Semantics (PAL)

BKN queries PAL for the A‑User Entity State semantic constraints. Persistent updates to User Entity States or A-User Entity States are stored in AUS under AUC governance.
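The five loops can be sketched as an ordered sequence of provider calls that accumulate semantics in one reasoning-session state, so that later loops (e.g. Personality alignment) can use what earlier loops (e.g. User semantics) produced. This is a simplified sketch, assuming each loop is collapsed to a single call, whereas in the specification a loop may involve several MCP turns. `run_bkn`, the state keys, and the toy providers are hypothetical.

```python
from typing import Any, Callable, Dict, List, Tuple

# A provider AIM receives the accumulated session state and returns new semantics.
Provider = Callable[[Dict[str, Any]], Dict[str, Any]]

# The five loops in the order given above: (loop name, queried AIM).
LOOPS: List[Tuple[str, str]] = [
    ("Spatial and User Semantic Grounding", "PRC"),
    ("Domain Semantics", "DAC"),
    ("User Semantics", "USR"),
    ("Personality Alignment", "PAL"),
    ("Personality Semantics", "PAL"),
]


def run_bkn(prompt: Dict[str, Any],
            providers: Dict[str, Provider]) -> Tuple[Dict[str, Any], List[str]]:
    """Run each loop once, accumulating semantics in a single session state."""
    state: Dict[str, Any] = {"prompt": prompt}
    trace: List[str] = []
    for loop_name, aim in LOOPS:
        state.update(providers[aim](dict(state)))
        trace.append(f"{loop_name} ({aim})")
    return state, trace


def pal(state: Dict[str, Any]) -> Dict[str, Any]:
    # Toy alignment: use a warm tone when USR reported a relaxed User.
    tone = "warm" if state.get("ues", {}).get("mood") == "relaxed" else "neutral"
    return {"aes": {"tone": tone}}


providers: Dict[str, Provider] = {
    "PRC": lambda s: {"cps": {"mug_01": {"visual": "on_table"}}},
    "DAC": lambda s: {"affordances": {"mug_01": ["grasp", "fill"]}},
    "USR": lambda s: {"ues": {"mood": "relaxed"}},
    "PAL": pal,
}
state, trace = run_bkn({"utterance": "pass me that one"}, providers)
```

The ordering matters: because USR runs before PAL, the toy PAL provider sees the User state and selects a warm tone.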

10 Domain Access (DAC)

DAC provides authoritative domain‑level semantics to both capture‑level semantic grounding and deliberative reasoning. These two uses are logically separated: capture‑level domain access is situational and non‑goal‑directed, while reasoning‑level domain access is goal‑directed and deliberative.

DAC supplies object classes, relations, affordances, constraints, and behavioural expectations derived from Domain knowledge, without performing perception or reasoning itself.

- Provides Domain Semantics to ASR, VSR, PRC, USR, PAL, and BKN, including object and event classes, relations, affordances, scene constraints, and expected behaviours.

- Supports domain grounding of perceptual and linguistic elements, enabling consistent interpretation of entities, actions, and relations detected or inferred by other AIMs.

- Exposes two interaction interfaces, corresponding to the two interaction paradigms:

  - A Directed Semantic Query Interface, used by spatial AIMs (e.g. ASR, VSR) for single‑shot semantic grounding and validation without session continuity.

  - A Dialogic Semantic Interface based on MCP, used by reasoning and interpretation AIMs (e.g. BKN, PRC, USR, PAL) for multi‑turn semantic clarification and integration.

- Returns structured, typed domain knowledge, including confidence and constraint information, suitable for direct consumption by perception pipelines or for semantic reasoning via MCP.

- Does not maintain perception state, user state, or session state beyond that required for MCP interactions, and does not modify perceptual or user descriptors produced by other AIMs.

11 User State Refinement (USR)

USR acts as the semantic authority for User modelling, transforming distributed internal and external User‑related evidence into a coherent, queryable user semantic representation for reasoning and Personality alignment. It derives and provides authoritative, session‑level User semantics by consolidating user‑related evidence originating from both internal AIMs and M-Instance services.

- Consumes User‑related evidence from AUS, including:

  - User Entity State snapshots produced by CXC.

  - User‑related interpretations generated by perception and interpretation AIMs (e.g. ASR, VSR, PRC).

  - Interaction history and previously authorised User‑related records.

- Draws additional User‑related information from authorised M-Instance services, when available and permitted by AUC, such as:

  - Identity and role information from Interpret or Identity services.

  - Persistent and ephemeral User profile attributes managed by the M-Instance.

- Refines the User Entity State by integrating behavioural cues, interaction patterns, session‑level preferences, historical fragments, and User information from the M-Instance, producing an authoritative but ephemeral User Entity State valid for the current reasoning context.

- Integrates profile fragments from AUS and, when authorised by AUC, from M‑Instance services, without recording inferred User Entity State unless explicitly permitted.

- Provides User Entity State information to BKN that is relevant to interpreting the current Raw Goal Expression being processed in the ongoing Goal and Intent Interpretation Instruction.

- Provides User Entity State to PAL upon request, enabling Personality alignment and derivation of the A‑User Entity State.

- Does not modify User Entity State snapshots produced by CXC and does not autonomously keep user semantic interpretations; persistence of User‑related data is governed by AUC and managed through AUS.
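USR's consolidation and its authorisation constraints can be sketched as a function that merges AUS evidence into an ephemeral User Entity State. It adds M-Instance information only when AUC permits it and proposes records for AUS only when persistence is authorised, so USR itself retains nothing. The precedence rules (later AUS fragments supersede earlier ones; M-Instance data overrides AUS evidence) and all names are assumptions made for illustration.

```python
from typing import Dict, List, Tuple


def refine_user_state(aus_evidence: List[Dict[str, str]],
                      m_instance_info: Dict[str, str],
                      m_instance_access_authorised: bool,
                      persistence_authorised: bool
                      ) -> Tuple[Dict[str, str], List[Dict[str, str]]]:
    """Consolidate User evidence into an ephemeral UES; also return any records
    AUC has authorised for storage in AUS (USR itself keeps nothing)."""
    ues: Dict[str, str] = {}
    for fragment in aus_evidence:  # assumption: later fragments supersede earlier ones
        ues.update(fragment)
    if m_instance_access_authorised:
        ues.update(m_instance_info)  # assumption: M-Instance data takes precedence
    to_store = [dict(ues)] if persistence_authorised else []
    return ues, to_store


evidence = [{"interactional": "speaking"},
            {"interactional": "listening", "emotional": "calm"}]
ues, to_store = refine_user_state(evidence, {"role": "customer"}, False, False)
```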

12 Personality Alignment (PAL)

PAL acts as the semantic authority for Personality alignment, ensuring that reasoning and responses are consistent with the active Personality while remaining grounded in authoritative User semantics. It derives and provides the A-User Entity State by aligning User semantics with the A-User's Personality and with communicative and expressive constraints. It constructs the A‑User Entity State as an authoritative but ephemeral Personality‑aligned semantic state, without performing perception or direct User modelling.

- Consumes User semantics from USR, including the authoritative, session‑level User Entity State required to perform Personality alignment.

- Draws Personality‑related evidence from AUS, including previously authorised A‑User Entity State fragments, Personality preferences, and interaction‑derived Personality adaptations.

- May draw additional Personality‑related information from M-Instance services, when permitted by AUC, such as externally defined Personality profiles, role‑ or context‑specific Personality constraints from the M-Instance, and policy‑driven expressive or behavioural guidelines.

- Constructs and refines the A‑User Entity State by integrating Personality traits, communicative modulation rules, expressive and behavioural constraints, and User‑specific adaptations derived from USR.

The resulting A-User Entity State is session‑scoped and context‑dependent.
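The derivation of a session-scoped A-User Entity State can be sketched as layering three inputs: the A-User's baseline Personality, a User-dependent modulation rule, and externally imposed constraints. The specific rule (calm register for a stressed User), the precedence of constraints over everything else, and the trait names are hypothetical and serve only to show the layering.

```python
from typing import Dict


def align_personality(ues: Dict[str, str], personality: Dict[str, str],
                      constraints: Dict[str, str]) -> Dict[str, str]:
    """Derive a session-scoped A-User Entity State; inputs are left unchanged."""
    aes = dict(personality)  # start from the A-User's baseline Personality traits
    # Hypothetical modulation rule: adopt a calm register when the User seems stressed.
    if ues.get("emotional") == "stressed":
        aes["tone"] = "calm"
        aes["speech_rate"] = "slow"
    # Assumption: M-Instance or policy constraints override everything else.
    aes.update(constraints)
    return aes


baseline = {"tone": "cheerful", "speech_rate": "fast"}
aes = align_personality({"emotional": "stressed"}, baseline, {"gesture_amplitude": "small"})
```

Because the function builds a fresh dictionary on each call, the resulting state stays ephemeral unless AUC authorises storing a fragment of it in AUS.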

13 A‑User Formation (AUF)

AUF realises the externally perceptible behaviour of the A‑User in the M-Instance. It transforms reasoning outcomes from BKN and Personality‑aligned plans into synchronised multimodal avatar behaviour, without performing semantic reasoning or User modelling.

- Consumes execution plans and communicative intent produced by BKN, including linguistic, expressive, and behavioural directives aligned with the current A‑User Entity State.

- Transforms communicative intent produced by BKN into natural language text, consistent with A-User Entity State, communicative constraints, and contextual requirements.

- Synthesises speech, including prosody and timing aligned with linguistic content and expressive intent.

- Renders facial expressions, eye gaze, and head pose consistent with communicative and emotional modulation.

- Produces gestures and full‑body avatar motion, including posture and movement, aligned with speech and interaction context.

- Synchronises multimodal outputs across text, speech, facial animation, gaze, and body motion to ensure coherent and natural avatar behaviour.

- Executes the final plan steps as observable Persona Actions within the M-Instance.

- Emits execution status to AUC, reporting progress, completion, or execution anomalies related to avatar behaviour.

14 A‑User Store (AUS)

AUS acts as a governed evidence and artefact store of the A‑User Architecture. It records and exposes authorised artefacts related to perception, User evidence, Personality adaptations, and Interaction History, under the control of AUC. AUS is not an AIM but a functionality of the AI Framework.

14.1 Governance

AUC is the sole authority governing access to AUS. It authorises:

- Which AIMs may write to AUS,

- Which categories of information may be recorded,

- Which persistence level (temporary, session‑bound, or longer‑term) to apply, and

- Which AIMs may read specific stored artefacts,

and enforces these policies.

14.2 Information Stored in AUS

AUS may store, subject to AUC authorisation:

- Context snapshots produced by CXC.

- Spatial descriptors produced by perception AIMs.

- User‑related evidence and authorised fragments derived from interactions.

- Personality‑related fragments and authorised adaptations.

- Interaction History and session metadata.

- Trace metadata associated with stored artefacts.

14.3 AIM Write Access

The following AIMs may write to AUS under explicit AUC authorisation:

- CXC writes context snapshots (VSD0, ASD0, UES0).

- ASR/VSR write spatial descriptors (ASD1, VSD1) and associated metadata.

- PRC may record prompt‑related artefacts, references, and session metadata when required.

- USR may record authorised User‑related evidence fragments or updates but does not store the authoritative User Entity State unless explicitly authorised by AUC.

- PAL may record authorised A-User Entity State fragments but does not autonomously record Personality‑derived semantics.

- BKN may record authorised reasoning outcomes, annotations, or decisions as structured, machine‑readable artefacts when required for orchestration or audit.

14.4 AIM Read Access

The following AIMs may read from AUS, subject to AUC policy:

- PRC reads spatial descriptors, context snapshots, User‑related evidence, and Interaction History to assemble perceptual semantics.

- USR reads User‑related evidence, Interaction History, and authorised profile fragments to construct the authoritative but ephemeral User Entity State.

- PAL reads authorised User semantics and Personality‑related fragments to construct the A‑User Entity State.

- BKN reads authorised artefacts to support reasoning, traceability, and semantic integration.

- AUC reads all artefacts for orchestration, governance, and lifecycle management.
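The governance rules in 14.1 to 14.4 can be sketched as a store that checks an AUC-defined policy on every read and write, records the writing AIM as trace metadata, and applies persistence levels at session end. This is a minimal sketch, not an AI Framework API. The category identifiers, `AUStore`, and the policy tables are hypothetical, and granting BKN read access to every category is an assumption standing in for "authorised artefacts".

```python
from enum import Enum
from typing import Any, Dict, FrozenSet, List, Tuple


class Persistence(Enum):
    TEMPORARY = "temporary"
    SESSION = "session-bound"
    LONG_TERM = "longer-term"


# Illustrative category names; the specification does not define identifiers for them.
CATEGORIES: FrozenSet[str] = frozenset({
    "context_snapshot", "spatial_descriptor", "prompt_artefact", "session_metadata",
    "user_evidence", "aes_fragment", "reasoning_outcome", "interaction_history",
})

# Write rights following 14.3 and read rights following 14.4 (AUC may narrow them).
WRITE_POLICY: Dict[str, FrozenSet[str]] = {
    "CXC": frozenset({"context_snapshot"}),
    "ASR": frozenset({"spatial_descriptor"}),
    "VSR": frozenset({"spatial_descriptor"}),
    "PRC": frozenset({"prompt_artefact", "session_metadata"}),
    "USR": frozenset({"user_evidence"}),
    "PAL": frozenset({"aes_fragment"}),
    "BKN": frozenset({"reasoning_outcome"}),
}
READ_POLICY: Dict[str, FrozenSet[str]] = {
    "PRC": frozenset({"spatial_descriptor", "context_snapshot",
                      "user_evidence", "interaction_history"}),
    "USR": frozenset({"user_evidence", "interaction_history"}),
    "PAL": frozenset({"user_evidence", "aes_fragment"}),
    "BKN": CATEGORIES,  # assumption: all categories, subject to AUC authorisation
    "AUC": CATEGORIES,
}


class AUStore:
    """Hypothetical AUS that checks AUC policy on every access."""

    def __init__(self) -> None:
        # Each record: (category, value, persistence, writing AIM as trace metadata).
        self._records: List[Tuple[str, Any, Persistence, str]] = []

    def write(self, aim: str, category: str, value: Any, persistence: Persistence) -> None:
        if category not in WRITE_POLICY.get(aim, frozenset()):
            raise PermissionError(f"{aim} is not authorised to write {category}")
        self._records.append((category, value, persistence, aim))

    def read(self, aim: str, category: str) -> List[Any]:
        if category not in READ_POLICY.get(aim, frozenset()):
            raise PermissionError(f"{aim} is not authorised to read {category}")
        return [value for cat, value, _, _ in self._records if cat == category]

    def end_session(self) -> None:
        """Discard temporary and session-bound records; keep longer-term ones."""
        self._records = [r for r in self._records if r[2] is Persistence.LONG_TERM]


store = AUStore()
store.write("CXC", "context_snapshot", {"ues0": {"interactional": "speaking"}}, Persistence.SESSION)
store.write("BKN", "reasoning_outcome", {"decision": "hand over mug"}, Persistence.LONG_TERM)
```

Keeping the policy tables outside the store reflects that AUC, not AUS, is the authority. The store only enforces the policies it is given.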