1. Functions

The A‑User Control (AUC) AIM:

- Serves as the central coordinator for Action execution, AIM orchestration, and system traceability.

- Governs the lifecycle of the A-User.

- Orchestrates the A-User's interaction with:

  - The human User.

  - The M-Instance.

  - The M-Instance’s Processes and Items with which the A-User interacts.

- Sends Directive messages to AIMs to implement Instructions within the Rights the A‑User holds and the Rules applicable to the M‑Location.

- Tracks execution of Directives using Status messages received from A-User AIMs.

The resulting control flow ensures that the A-User operates predictably, transparently, and in alignment with human Commands and any A-User Instructions, thereby supporting lifecycle integrity and enabling trust through auditable orchestration.
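
The Directive and Status exchange listed above can be pictured as a small bookkeeping loop. The following is a minimal sketch, not part of the specification: `DirectiveTracker`, `Directive`, `State`, the integer identifiers, and the `succeeded` flag are hypothetical names chosen for illustration, and the specification's own data formats take precedence.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    """Hypothetical lifecycle states of a Directive (assumption, not from the spec)."""
    PENDING = "pending"
    DONE = "done"
    FAILED = "failed"


@dataclass
class Directive:
    directive_id: int   # hypothetical identifier used to match a Status to its Directive
    target_aim: str     # AIM acronym as used in Table 1, e.g. "CXC"
    instruction: str    # Instruction acronym, e.g. "PEC"


@dataclass
class DirectiveTracker:
    states: dict[int, State] = field(default_factory=dict)
    audit_log: list[tuple] = field(default_factory=list)
    _next_id: int = 0

    def send(self, target_aim: str, instruction: str) -> Directive:
        """Record an outgoing Directive and mark it as pending."""
        directive = Directive(self._next_id, target_aim, instruction)
        self._next_id += 1
        self.states[directive.directive_id] = State.PENDING
        self.audit_log.append(("directive", directive.directive_id, target_aim))
        return directive

    def on_status(self, directive_id: int, succeeded: bool) -> None:
        """Update the tracked state when a Status message arrives."""
        if directive_id not in self.states:
            raise KeyError(f"Status for unknown Directive {directive_id}")
        new_state = State.DONE if succeeded else State.FAILED
        self.states[directive_id] = new_state
        self.audit_log.append(("status", directive_id, new_state.value))

    def pending(self) -> list[int]:
        """Return the ids of Directives that have not yet reported a Status."""
        return [i for i, s in self.states.items() if s is State.PENDING]
```

Keeping every sent Directive and received Status in an append-only log is one simple way to obtain the auditable trace the text calls for; a real implementation may record considerably more.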

2. Reference Model

A-User Control:

- Triggers the Context Capture AIM to perceive the current scene, composed of a User in an M-Location.

- Understands the scene by sending Directives to Context Enhancement and Domain Access.

- Prompts Prompt Creation and the Basic Knowledge.

- Controls the queries made by the Basic Knowledge to Prompt Creation, Domain Access, and User State Refinement.

- Triggers Basic Knowledge into requesting A-User Entity State (Personality Alignment).

- Issues AUF Directives to the A‑User Formation AIM to produce the speaking Avatar (Persona), which will subsequently be instantiated by the A-User Control in the M-Instance.

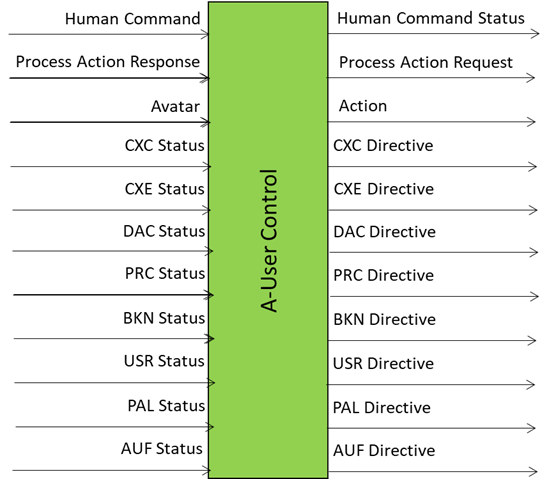

Figure 1 gives the Reference Model of A-User Control (PGM-AUC), including its input/output data.

Figure 1 – Reference Model of A-User Control (PGM-AUC) AIM

The A-User Control AIM exercises its activity by implementing one of eight Instructions:

- Perception and Environment Capture (PEC): Configure perceptual subsystems to sense the responsible human in the Universe, the User in the M‑Instance, or the contents of the relevant M‑Location.

- Goal and Language Acquisition (GLA): Capture and segment multi-modal expressions of the responsible human and/or the User. This corresponds to giving identity and spatial relationship to the objects in the scene and to understanding the User.

- Prompting and Knowledge Query (PKQ): Enable structured contextual representation and semantic grounding of perceptual and state information. The A-User is now in a position to make sense of what it perceives and interprets.

- Goal and Intent Interpretation (GII): Based upon the results of the previous Instruction, trigger deliberative processing to determine the appropriate communicative behaviour of the A‑User. The A-User Control is now able to decide the stance it should take.

- Policy, Rights, and Feasibility (PRF): Validate intended behaviour with respect to governance Rules, User Entity State constraints, human commands, and domain feasibility conditions. The A-User Control knows that the A-User actions must comply with a variety of constraints.

- Plan Construction and Execution (PCE): Orchestrate execution of the behaviour, including speech and actions, based on Statuses reported by the AIMs. The A-User Control is now able to execute the actions after taking constraints into account.

- Conflict Management and Escalation (CME): Detect unresolved inconsistencies or conflicts and escalate to the responsible human when required. Various impediments may be encountered, and modifications to the action may be decided as a result.

- Avatar Formation and Rendering (AFR): Enable synthesis and rendering of the speaking avatar. The A-User’s Avatar is formed and can be rendered.
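
The eight Instructions and their acronyms can be captured in a single enumeration, which makes it easy to validate an acronym before acting on it. This is an illustrative sketch only: the `Instruction` class and the `parse_instruction` helper are hypothetical names, and only the acronyms and full names are taken from the list above.

```python
from enum import Enum


class Instruction(Enum):
    """The eight Instructions, keyed by the acronyms used in the text."""
    PEC = "Perception and Environment Capture"
    GLA = "Goal and Language Acquisition"
    PKQ = "Prompting and Knowledge Query"
    GII = "Goal and Intent Interpretation"
    PRF = "Policy, Rights, and Feasibility"
    PCE = "Plan Construction and Execution"
    CME = "Conflict Management and Escalation"
    AFR = "Avatar Formation and Rendering"


def parse_instruction(code: str) -> Instruction:
    """Look up an Instruction by acronym (hypothetical helper, case-insensitive)."""
    try:
        return Instruction[code.strip().upper()]
    except KeyError:
        raise ValueError(f"unknown Instruction code: {code!r}") from None
```

For example, `parse_instruction("pce")` returns `Instruction.PCE`, while an acronym outside the list raises `ValueError`.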

3. Input/Output Data

The A‑User Control (PGM-AUC) AIM exchanges data types with specific purposes with the other A-User AIMs. For example, Audio Reasoning Directive is sent to Audio Spatial Reasoning and Formation Status is received from A‑User Formation.

Table 1 gives Input and Output Data of A-User Control (PGM-AUC) AIM. See below for Mapping to Unified Schema.

Table 1 – Input and Output Data of A-User Control (PGM-AUC) AIM

| Input | Description |
| --- | --- |
| Human Command | From a human in the real world. |
| Process Action Response | From a Process that has received a Process Action Request. |
| CXC Status | Scene-level context and User presence. |
| CXE Status | Context Enhancement spatial feasibility, occlusion, reachability flags, etc. |
| PRC Status | Prompt readiness, alignment status, semantic goal framing, etc. |
| BKN Trace | Enriched response metadata and traceability, etc. |
| DAC Status | Execution feasibility and constraint validation, etc. |
| USR Status | Current engagement, affective tone, override flags. |
| PAL Status | Expressive alignment, persona framing, modulation constraints, etc. |
| AUF Status | Avatar formation success, avatar state, expressive output status. |

| Output | Description |
| --- | --- |
| Action | Performed by A-User on the M-Instance. |
| Process Action Request | Request made by A-User to an M-Instance Process. |
| CXC Directive | Instructions for perceptual acquisition. |
| CXE Directive | Context Enhancement-related actions and sequences. |
| PRC Directive | Prompt generation or refinement. |
| BKN Directive | Request for knowledge retrieval or response shaping. |
| DAC Directive | Request for domain execution. |
| USR Directive | Request to modulate User State based on interaction feedback. |
| PAL Directive | Request for expressive modulation or Personality reconfiguration. |
| AUF Directive | Request for avatar formation, spatial output, expressive delivery, etc. |
| Human Command Status | A-User Control response to Human Command. |
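
Table 1 pairs each Directive sent to an AIM with the data that AIM reports back. A lookup table is one way to hold that pairing; the sketch below is hypothetical (the `Channel` type, the `AIM_CHANNELS` dictionary, and the `reply_for` helper are not defined by the specification) and uses only the data names listed in Table 1. Note that the BKN entry reports a Trace rather than a Status.

```python
from typing import NamedTuple


class Channel(NamedTuple):
    directive: str  # output of A-User Control, as named in Table 1
    reply: str      # corresponding input of A-User Control, as named in Table 1


# Hypothetical lookup keyed by the AIM acronyms that appear in Table 1.
AIM_CHANNELS = {
    "CXC": Channel("CXC Directive", "CXC Status"),
    "CXE": Channel("CXE Directive", "CXE Status"),
    "PRC": Channel("PRC Directive", "PRC Status"),
    "BKN": Channel("BKN Directive", "BKN Trace"),  # Trace, not Status
    "DAC": Channel("DAC Directive", "DAC Status"),
    "USR": Channel("USR Directive", "USR Status"),
    "PAL": Channel("PAL Directive", "PAL Status"),
    "AUF": Channel("AUF Directive", "AUF Status"),
}


def reply_for(directive_name: str) -> str:
    """Return the Table 1 input expected in response to a given Directive."""
    for channel in AIM_CHANNELS.values():
        if channel.directive == directive_name:
            return channel.reply
    raise ValueError(f"no such Directive in Table 1: {directive_name!r}")
```

Human Command, Process Action Response, Action, Process Action Request, and Human Command Status are left out of the lookup because they are exchanged with the human or the M-Instance rather than with another A-User AIM.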

4. SubAIMs

No SubAIMs.

5. JSON Metadata

https://schemas.mpai.community/PGM1/V1.0/data/AUserControl.json

6. Profiles

No Profiles.