MPAI applies AI to Server-based Predictive Multiplayer Gaming

Geneva, Switzerland – 22nd January 2025. MPAI – Moving Picture, Audio and Data Coding by Artificial Intelligence – the international, non-profit, unaffiliated organisation developing AI-based data coding standards – has concluded its 52nd General Assembly (MPAI-52) approving publication of Technical Report: Server-based Predictive Multiplayer Gaming (MPAI-SPG) – Mitigation of Data Loss effects (SPG-MDL) V1.0.

Technical Report: Server-based Predictive Multiplayer Gaming (MPAI-SPG) – Mitigation of Data Loss effects (SPG-MDL) V1.0 addresses the effect of controller data latency in online multiplayer gaming. When controller data from a player does not reach the server on time, the server is unable to update and distribute a correct game state. The Technical Report provides guidelines on the design and use of Neural Networks that produce reliable and accurate predictions making up for the absence of players’ control data in multiplayer gaming contexts based on authoritative servers. An example Reference Software allows experimenters to test the suggested guidelines in a practical case.

MPAI will give an online presentation of the main results of the SPG-MDL V1.0 Technical Report on 12 February 2025 at 15:00 UTC. Register at https://encr.pw/CzO72 to attend.

See also the MPAI presentation to LA SIGGRAPH by Leonardo Chiariglione, Marina Bosi (Six Degrees of Freedom Audio), Andrea Bottino (MPAI Metaverse Model), Mark Seligman (Multimodal Conversation), and Ed Lantz (XR Venues).

MPAI is continuing its work plan that involves the following activities:

- AI Framework (MPAI-AIF): building a community of MPAI-AIF-based implementers.

- AI for Health (MPAI-AIH): developing the specification of a system that enables clients to improve models processing health data and uses federated learning to share the training.

- Context-based Audio Enhancement (CAE-DC): developing the Audio Six Degrees of Freedom (CAE-6DF) standard.

- Connected Autonomous Vehicle (MPAI-CAV): updating the MPAI-CAV Architecture part and developing the new MPAI-CAV Technologies (CAV-TEC) part of the standard.

- Compression and Understanding of Industrial Data (MPAI-CUI): waiting for responses on 11 February.

- End-to-End Video Coding (MPAI-EEV): exploring the potential of video coding using AI-based End-to-End Video coding.

- AI-Enhanced Video Coding (MPAI-EVC): waiting for responses to the Call for Technologies for video up-sampling filter on 11 February.

- Governance of the MPAI Ecosystem (MPAI-GME): working on version 2.0 of the Specification.

- Human and Machine Communication (MPAI-HMC): developing reference software and performance assessment.

- Multimodal Conversation (MPAI-MMC): developing technologies for more Natural-Language-based user interfaces capable of handling more complex questions.

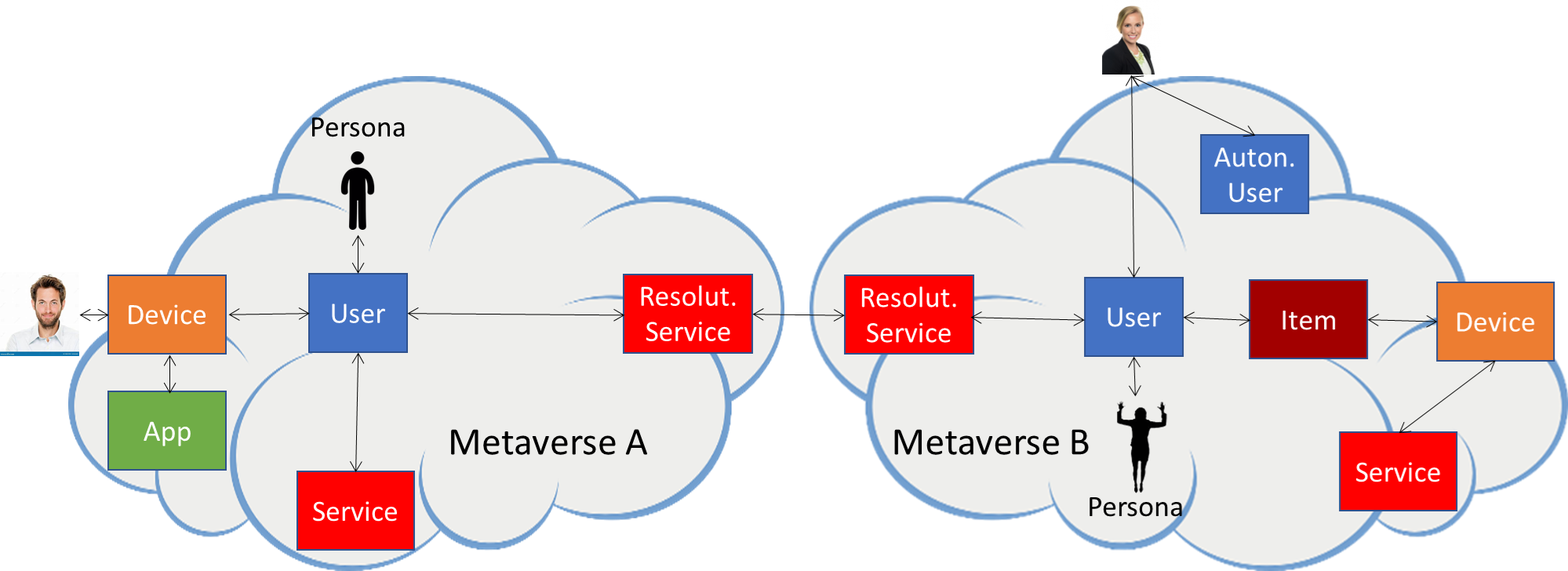

- MPAI Metaverse Model (MPAI-MMM): extending the MPAI-MMM specs to support more applications.

- Neural Network Watermarking (MPAI-NNW): studying the use of fingerprinting as a technology for neural network traceability.

- Object and Scene Description (MPAI-OSD): studying applications requiring more space-time handling.

- Portable Avatar Format (MPAI-PAF): studying more applications using digital humans needing new technologies.

- AI Module Profiles (MPAI-PRF): specifying which features AI Workflow or more AI Modules need to support.

- Server-based Predictive Multiplayer Gaming (MPAI-SPG): developing a technical report on the mitigation of data loss effects.

- Data Types, Formats, and Attributes (MPAI-TFA): extending the standard to data types used by MPAI standards (e.g., automotive and health).

- XR Venues (MPAI-XRV): developing the standard for improved development and execution of Live Theatrical Performances and studying the prospects of Collaborative Immersive Laboratories.

Legal entities and representatives of academic departments supporting the MPAI mission and able to contribute to the development of standards for the efficient use of data can become MPAI members.

Please visit the MPAI website, contact the MPAI secretariat for specific information, subscribe to the MPAI Newsletter and follow MPAI on social media: LinkedIn, Twitter, Facebook, Instagram, and YouTube.