MPAI Presents the Call for Technologies on “Connected Autonomous Vehicle”

The Call for Technologies on “Connected Autonomous Vehicle”

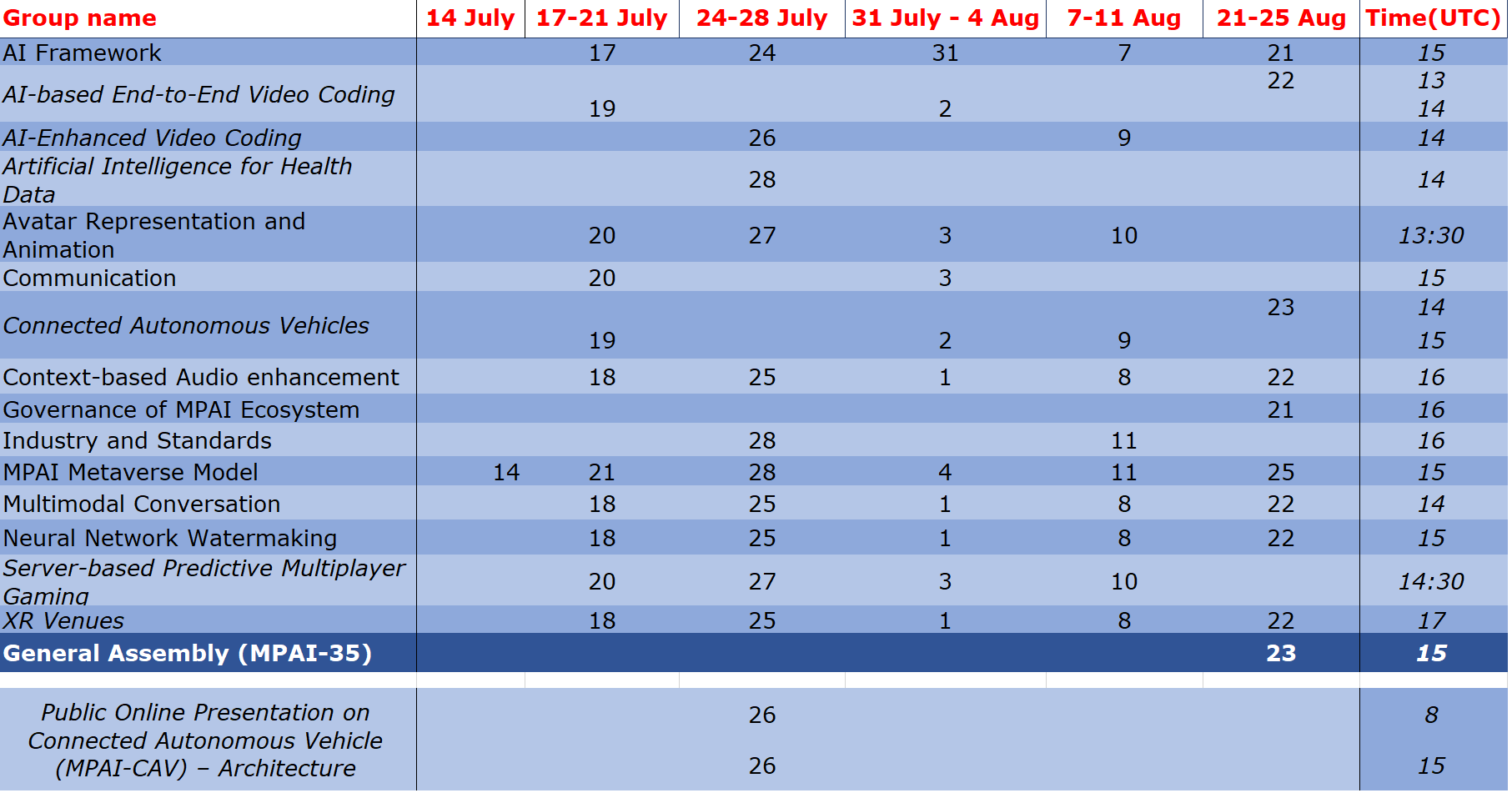

Meetings in the coming July-August meeting cycle


At its 34th General Assembly, MPAI issued a Call for Technologies for one of its earliest projects, “Connected Autonomous Vehicle (MPAI-CAV)”. The goal of the project is to promote the development of the CAV industry by specifying the functions and interfaces of components that can be easily integrated into larger systems. The responses to the Call will be used to finalise the baseline MPAI-CAV specification developed so far, which is already quite mature.

As the roughly 30 months taken to reach the Call for Technologies stage demonstrate, MPAI-CAV is a challenging project. MPAI therefore intends to develop it as a series of standards, each adding more detail to strengthen the interoperability of CAV components.

The first standard targeted by the Call, MPAI-CAV – Architecture, partitions a CAV into subsystems and subsystems into components. Both are identified by their functions and interfaces, i.e., the data exchanged between MPAI-CAV subsystems and components.

Register now for the online presentations of the Call for Technologies of the MPAI Connected Autonomous Vehicle – Architecture project, held on 2023/07/26 at 8 UTC (https://l1nq.com/HXDow) and 15 UTC (https://l1nq.com/fAL4J).

Figure 1 – A Connected Autonomous Vehicle (Freepik Image)

The Call for Technologies of “Connected Autonomous Vehicles”

What is an MPAI Connected Autonomous Vehicle (CAV)? MPAI defines a CAV as a system that:

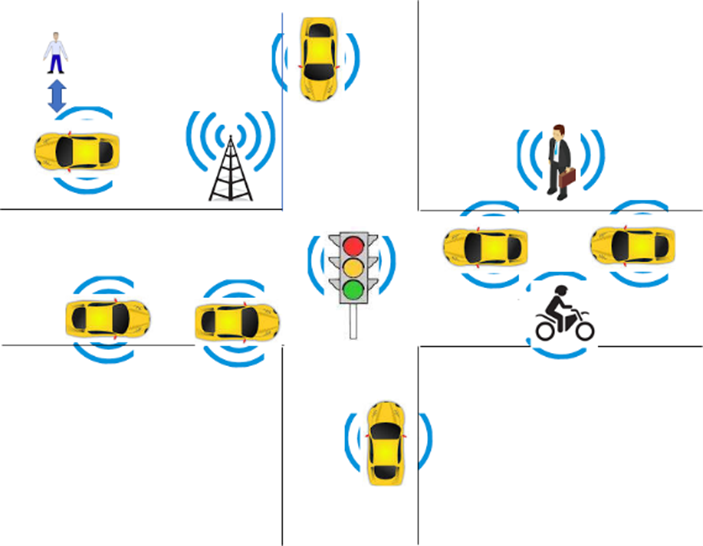

1. Moves in an environment like the one depicted in Figure 2

2. Has the capability to autonomously reach a target destination by:

2.1 Understanding human utterances, e.g., the human’s request to be taken to a certain location.

2.2 Planning a Route.

2.3 Sensing the external Environment and building Representations of it.

2.4 Exchanging such Representations and other Data with other CAVs and CAV-aware entities, such as Roadside Units and Traffic Lights.

2.5 Making decisions about how to execute the Route.

2.6 Acting on the CAV motion actuation to implement the decisions.

Figure 2 – An environment of CAV operation

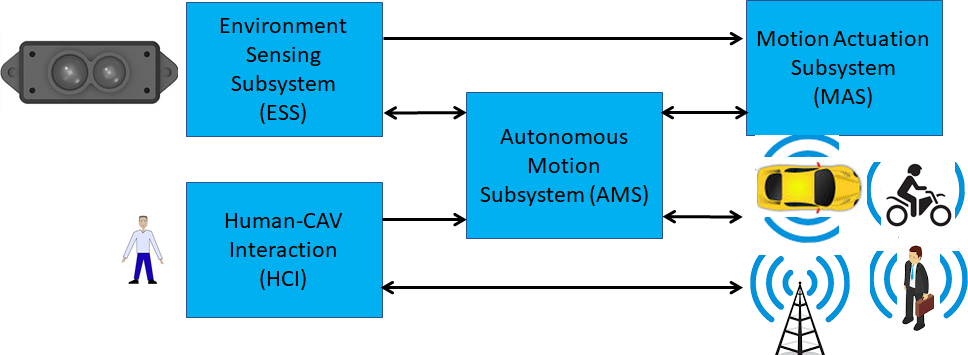

MPAI has found that a CAV can be effectively partitioned into four subsystems as depicted in Figure 3:

1. Human-CAV Interaction (HCI).

2. Environment Sensing Subsystem (ESS).

3. Autonomous Motion Subsystem (AMS).

4. Motion Actuation Subsystem (MAS).

Figure 3 – The CAV subsystems

MPAI intends to develop a series of CAV standards. The first is Connected Autonomous Vehicle – Architecture, built on the partitioning of Figure 3.

A disclaimer: MPAI does not intend to include the mechanical parts of a CAV in the planned Technical Specification. MPAI only intends to refer to the interfaces of the Motion Actuation Subsystem with such mechanical parts.

A high-level description of the functions of the four subsystems follows.

Human-CAV Interaction (HCI)

1. Recognises the humans having rights to the CAV.

2. Receives instructions about the target destination and passes them to the AMS.

3. Interacts with humans by assuming the shape of an avatar.

4. Activates other Subsystems as required by humans.

5. Provides the Full Environment Representation received from the AMS for passengers to use.

Environment Sensing Subsystem (ESS)

1. Acquires and processes information from the Environment.

2. Produces the Basic Environment Representation.

3. Sends the Basic Environment Representation to the AMS.

Autonomous Motion Subsystem (AMS)

1. Computes the Route to destination based on information received from the HCI.

2. Receives the Basic Environment Representation of the ESS and of other CAVs in range.

3. Creates the Full Environment Representation.

4. Issues commands to the MAS to drive the CAV to the intended destination.

Motion Actuation Subsystem (MAS)

1. Sends its Spatial Attitude and other Environment information to the ESS.

2. Receives motion commands and actuates them in the Environment.

3. Sends feedback to the AMS.
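The data flow between the ESS, AMS and MAS described above can be sketched in a few lines of Python. This is only an illustration under strong assumptions: the class names, method names, the 2D point used as a stand-in for Spatial Attitude, and the toy "one unit step unless blocked" planner are all hypothetical and are not taken from the MPAI-CAV specification.

```python
from dataclasses import dataclass

Point = tuple[float, float]  # hypothetical stand-in for a position / Spatial Attitude


@dataclass
class BasicEnvironmentRepresentation:
    source: str             # which CAV produced this representation
    obstacles: list[Point]  # obstacle positions in a shared frame


@dataclass
class FullEnvironmentRepresentation:
    sources: list[str]
    obstacles: list[Point]


class MotionActuationSubsystem:
    def __init__(self) -> None:
        self.position: Point = (0.0, 0.0)

    def spatial_attitude(self) -> Point:
        # MAS function 1: report the Spatial Attitude to the ESS.
        return self.position

    def actuate(self, command: Point) -> Point:
        # MAS functions 2 and 3: execute the command, return feedback for the AMS.
        self.position = (self.position[0] + command[0], self.position[1] + command[1])
        return self.position


class EnvironmentSensingSubsystem:
    def sense(self, attitude: Point, relative_obstacles: list[Point]) -> BasicEnvironmentRepresentation:
        # ESS functions 1-3: turn vehicle-relative detections into a shared-frame representation.
        absolute = [(attitude[0] + dx, attitude[1] + dy) for dx, dy in relative_obstacles]
        return BasicEnvironmentRepresentation("ego", absolute)


class AutonomousMotionSubsystem:
    def __init__(self, destination: Point) -> None:
        self.destination = destination  # would be received from the HCI

    def fuse(self, ego: BasicEnvironmentRepresentation,
             others: list[BasicEnvironmentRepresentation]) -> FullEnvironmentRepresentation:
        # AMS functions 2 and 3: merge the ego view with views from CAVs in range.
        reps = [ego, *others]
        obstacles = sorted({o for rep in reps for o in rep.obstacles})
        return FullEnvironmentRepresentation([rep.source for rep in reps], obstacles)

    def command(self, position: Point, env: FullEnvironmentRepresentation) -> Point:
        # AMS function 4 (toy planner): step at most one unit per axis, stop if blocked.
        def step(delta: float) -> float:
            return max(-1.0, min(1.0, delta))

        move = (step(self.destination[0] - position[0]), step(self.destination[1] - position[1]))
        target = (position[0] + move[0], position[1] + move[1])
        return (0.0, 0.0) if target in env.obstacles else move


def run_cycle(mas, ess, ams, relative_obstacles, remote_reps) -> Point:
    """One sense -> fuse -> command -> actuate cycle; returns the MAS feedback."""
    ego = ess.sense(mas.spatial_attitude(), relative_obstacles)
    full = ams.fuse(ego, remote_reps)
    return mas.actuate(ams.command(mas.spatial_attitude(), full))


mas = MotionActuationSubsystem()
ess = EnvironmentSensingSubsystem()
ams = AutonomousMotionSubsystem(destination=(2.0, 0.0))
remote = BasicEnvironmentRepresentation("cav-2", [(2.0, 0.0)])  # seen only by another CAV

print(run_cycle(mas, ess, ams, [], [remote]))  # (1.0, 0.0)
print(run_cycle(mas, ess, ams, [], [remote]))  # (1.0, 0.0): the next cell is blocked
```

In the second cycle the ego CAV stops only because the obstacle reported by the other CAV is present in the fused representation, which is the point of exchanging Basic Environment Representations between CAVs in range.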

The MPAI-CAV – Architecture standard assumes that each of the four CAV Subsystems is an implementation of Version 2 of the MPAI AI Framework (MPAI-AIF) standard. MPAI-AIF specifies an environment where AI Workflows (AIW) composed of AI Modules (AIM) are securely executed.

MPAI intends to specify the following elements for each subsystem in the MPAI-CAV – Architecture standard:

1. The Functions of the Subsystem.

2. The input/output data of the Subsystem.

3. The topology of the Components (AI Modules) of the Subsystem.

4. For each AI Module of the Subsystem:

4.1 The Functions.

4.2 The input/output data.
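To make the list above concrete, the elements specified per subsystem can be pictured as a small data structure. The sketch below is an assumption-laden illustration, not MPAI's format: the class names, the AI Module names ("Scene Processing", "Representation Fusion"), the data names "Sensor Data", "Scene Descriptors" and "Offline Map", and the `unresolved_inputs` consistency check are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AIModuleSpec:
    name: str
    function: str
    inputs: set[str]
    outputs: set[str]


@dataclass
class SubsystemSpec:
    name: str
    functions: list[str]
    inputs: set[str]
    outputs: set[str]
    modules: list[AIModuleSpec] = field(default_factory=list)

    def unresolved_inputs(self) -> dict[str, set[str]]:
        """Topology check: module inputs supplied neither by the subsystem's
        own inputs nor by the outputs of another module."""
        missing: dict[str, set[str]] = {}
        for module in self.modules:
            available = set(self.inputs)
            for other in self.modules:
                if other is not module:
                    available |= other.outputs
            gap = module.inputs - available
            if gap:
                missing[module.name] = gap
        return missing


# Hypothetical ESS description; only the subsystem functions come from the text.
ess = SubsystemSpec(
    name="Environment Sensing Subsystem",
    functions=[
        "Acquires and processes information from the Environment",
        "Produces the Basic Environment Representation",
        "Sends the Basic Environment Representation to the AMS",
    ],
    inputs={"Sensor Data", "Spatial Attitude"},
    outputs={"Basic Environment Representation"},
    modules=[
        AIModuleSpec("Scene Processing", "Describes the sensed scene",
                     {"Sensor Data"}, {"Scene Descriptors"}),
        AIModuleSpec("Representation Fusion", "Builds the representation",
                     {"Scene Descriptors", "Spatial Attitude"},
                     {"Basic Environment Representation"}),
    ],
)

print(ess.unresolved_inputs())  # {} when every module input has a provider
```

A check of this kind is one way a proposed modification to a subsystem's topology could be tested for consistency: adding a module that needs data no other element provides shows up as a non-empty result.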

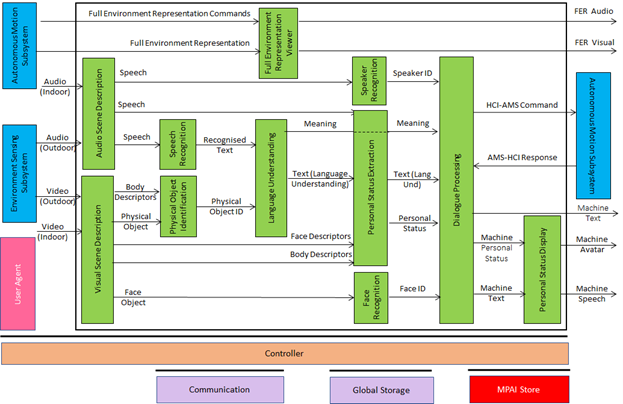

The architecture of the HCI Subsystem is given in Figure 4.

Figure 4 – Human-CAV Interaction Reference Model

The HCI comprises two AIMs that capture the audio and the visual scene. The audio components (speech) are recognised, and the visual components are separated into objects and descriptors of the human’s face and body. This information is used to 1) understand what the speaking human is saying, 2) extract the so-called Personal Status, a combination of emotion, cognitive state, and social attitude, and 3) identify the human via speech and face. The HCI subsystem now has sufficient information to respond to the human and does so by manifesting itself as a speaking avatar expressing Personal Status. The HCI may also act as the intermediary between the human and the Autonomous Motion Subsystem, e.g., by passing on the human’s intended destination. A CAV passenger may also want to peek in and navigate the CAV’s Environment Representation.

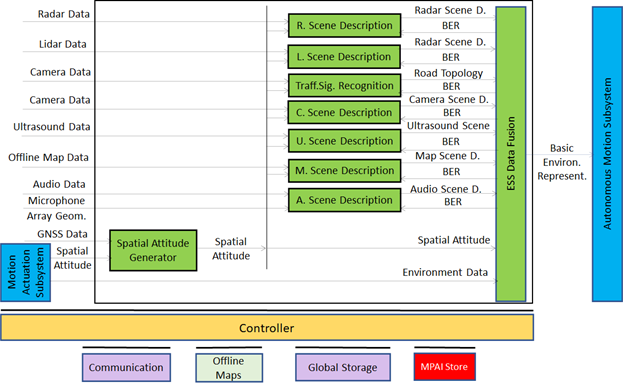

The goal of the Environment Sensing Subsystem (ESS), depicted in Figure 5, is to achieve the best possible understanding of the external environment, called the Basic Environment Representation, using the CAV sensors, and to pass it to the Autonomous Motion Subsystem.

Figure 5 – Environment Sensing Subsystem Reference Model

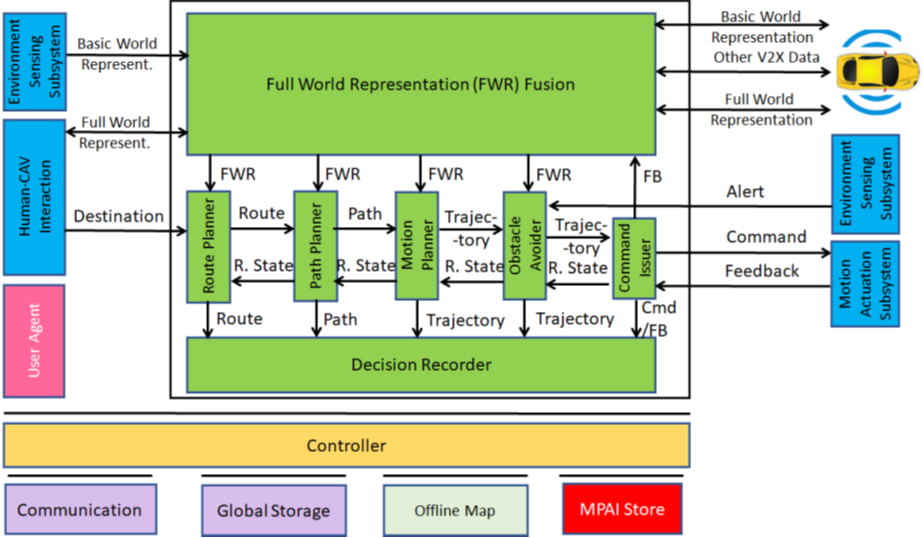

The Autonomous Motion Subsystem, depicted in Figure 6, is the place where information is collected and acted upon. Specifically, the AMS acts upon the instructions given by the human via the HCI subsystem, produces the Full Environment Representation by improving the Basic Environment Representation with information exchanged with CAVs in range, and issues commands to the Motion Actuation Subsystem to reach the intended destination.

Figure 6 – Autonomous Motion Subsystem Reference Model

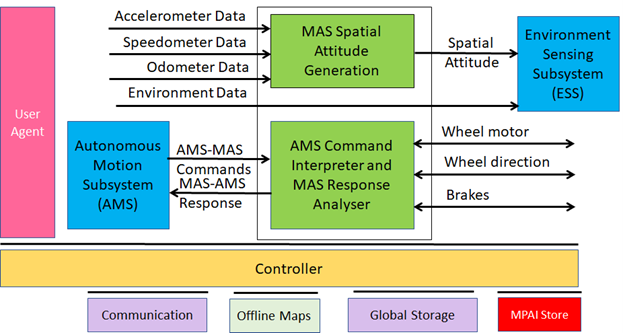

The Motion Actuation Subsystem, depicted in Figure 7, provides spatial and other environment information to the ESS, implements the commands received from the AMS, and sends the AMS information about their execution.

Figure 7 – Motion Actuation Subsystem Reference Model

Finally, the CAV-to-Everything (V2X) component allows the CAV Subsystems to communicate with entities external to the Ego CAV. For instance, the HCI of a CAV may send/request information to/from the HCI of another CAV (e.g., about road conditions that affect the journey), or an AMS may send/request the Basic Environment Representation to/from the AMS of another CAV.

The Call for Technologies specifically requests comments on, modifications of, and additions to the MPAI-CAV Reference Model (see Figure 2), the Terminology (extending over 6 pages), the functions of the subsystems, the input and output data of the subsystems, the functions of the AI Modules, and the input and output data of the AI Modules.

The deadline for submissions, to be sent to secretariat@mpai.community, is 15 August 2023.

Meetings in the coming July-August meeting cycle

Non-MPAI members may join the meetings given in italics in the table below. If interested, please contact the MPAI secretariat.