
1. Functions

The Context Capture (PGM-CXC) AIM is the A-User’s active perceptual interface to the spatial environment. It collects, fuses, and structures multimodal contextual information (audio, visual, and spatial) and supports runtime reorientation under explicit Human or A-User Control commands.

Context Capture provides initial Audio Scene Descriptors and Visual Scene Descriptors, which include object localisation, User gaze/gesture alignment, and spatial layout information.

The Human may direct Context Capture through natural commands (e.g., “look at that corner”, “zoom there”, “follow that object”). A-User Control sends these commands to the appropriate AIMs, which translate them into perceptual redirection operations. These operations adjust Context Capture’s capture configuration before any semantic interpretation takes place.

Specific functionalities

Multimodal Context Acquisition: The Context Capture AIM continuously captures audio, visual, and spatial information from the surrounding environment within a given Time Expression.

Audio and Visual Scene Descriptor Generation: The Context Capture AIM generates Audio Scene Descriptors and Visual Scene Descriptors that describe object localisation, spatial layout, User gaze/gesture alignment, and other perceptual features of the environment.

Human‑Driven Perceptual Redirection: The Context Capture AIM supports perceptual redirection when instructed by the Human through A-User Control (e.g., reorienting viewpoint, changing focus, zooming, following a referenced object or region).

Runtime Capture Reconfiguration: The Context Capture AIM dynamically reconfigures its capture parameters (e.g., direction, focus, zoom, sampling region) in response to perceptual redirection commands issued by A-User Control.

Perceptual Grounding for Goal Acquisition: The Context Capture AIM provides perceptual descriptors that enable spatial grounding of Human expressions involving referenced objects or regions (e.g., “that corner”, “this object”, “over there”).
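The redirection and reconfiguration functions above can be pictured as a pure update of a capture configuration driven by commands from A-User Control. The following is a minimal Python sketch under stated assumptions: `CaptureConfig`, `RedirectionCommand`, `reconfigure`, the command kinds, the field names and units, and the zoom range [1, 10] are all hypothetical and are not taken from the PGM-CXC JSON schema.

```python
from dataclasses import dataclass, replace
from typing import Optional, Tuple


@dataclass(frozen=True)
class CaptureConfig:
    """Hypothetical capture parameters (illustrative names, not from the MPAI schema)."""
    direction_deg: Tuple[float, float] = (0.0, 0.0)  # (azimuth, elevation) in degrees
    focus_m: float = 2.0                             # focus distance in metres
    zoom: float = 1.0                                # zoom factor
    sampling_region: Optional[Tuple[float, ...]] = None  # (x, y, w, h), normalised
    tracked_object: Optional[str] = None             # identifier of a followed object


@dataclass(frozen=True)
class RedirectionCommand:
    """Hypothetical perceptual redirection operation issued by A-User Control."""
    kind: str            # "reorient", "focus", "zoom", "follow" or "region"
    value: object = None


def reconfigure(cfg: CaptureConfig, cmd: RedirectionCommand) -> CaptureConfig:
    """Return a new configuration; the input configuration is left unchanged."""
    if cmd.kind == "reorient":
        return replace(cfg, direction_deg=tuple(cmd.value))
    if cmd.kind == "focus":
        return replace(cfg, focus_m=float(cmd.value))
    if cmd.kind == "zoom":
        # Clamp to the assumed supported zoom range [1, 10].
        return replace(cfg, zoom=min(max(float(cmd.value), 1.0), 10.0))
    if cmd.kind == "follow":
        return replace(cfg, tracked_object=str(cmd.value))
    if cmd.kind == "region":
        return replace(cfg, sampling_region=tuple(cmd.value))
    raise ValueError(f"unsupported redirection: {cmd.kind}")


# Usage: "look at that corner" followed by "zoom there", already translated
# into hypothetical redirection operations.
cfg = CaptureConfig()
cfg = reconfigure(cfg, RedirectionCommand("reorient", (45.0, -10.0)))
cfg = reconfigure(cfg, RedirectionCommand("zoom", 3.0))
print(cfg.direction_deg, cfg.zoom)  # (45.0, -10.0) 3.0
```

Returning a new frozen configuration instead of mutating shared state is one way to keep each redirection step traceable, since the capture configuration is adjusted before any semantic interpretation happens.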

2. Reference Model

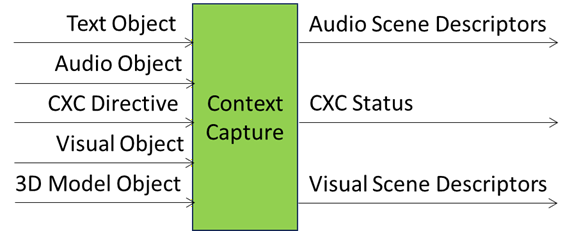

Figure 1 gives the Context Capture (PGM-CXC) Reference Model.

Figure 1 – The Reference Model of the Context Capture (PGM-CXC) AIM

3. Input/Output Data

Table 1 – Context Capture (PGM-CXC) AIM

| Input | Description |
|---|---|
| Text Object | User input expressed in structured text form, including written or transcribed utterances. |
| Audio Object | Captured audio signals from the scene, covering speech, environmental sounds, and paralinguistic cues. |
| 3D Model Object | Geometric and spatial data describing the environment, including structures, surfaces, and volumetric features. |
| Visual Object | Visual signals from the scene, encompassing gestures, facial expressions, and environmental imagery. |
| CCX Directive | Control instructions specifying modality prioritisation, acquisition parameters, or framing rules to guide the perceptual processing of an M-Location. |

| Output | Description |
|---|---|
| Audio Scene Descriptors | Initial Audio Scene Descriptors (no Enhancement). |
| Visual Scene Descriptors | Initial Visual Scene Descriptors (no Enhancement). |
| CCX Status | Scene-level metadata describing User presence, environmental conditions, and confidence measures for contextual framing. |

4. SubAIMs

4.1 Reference Model

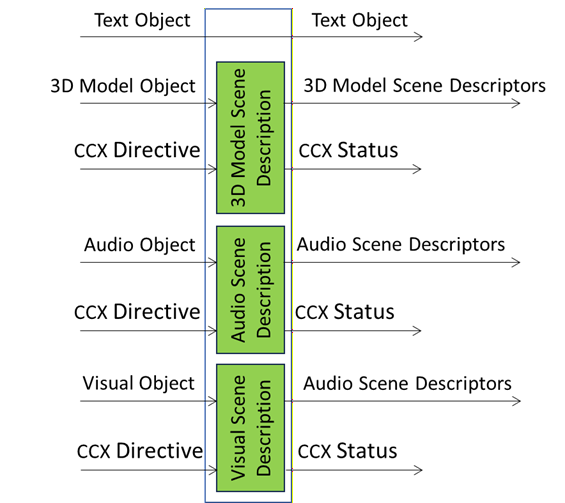

Figure 2 depicts the Reference Architecture of the Context Capture (PGM-CXC) AIM.

Figure 2 – Reference Architecture of the Context Capture (PGM-CXC) AIM

4.2 Operation

The Context Capture (PGM-CXC) AIM is activated by a CCX Directive from A-User Control and reports on its execution by means of a CCX Status. Of the four input types, Text is passed through unchanged, while 3D Model, Audio, and Visual are processed by SubAIMs.
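The operation described above (one activation per CCX Directive, Text passed through, the other modalities routed to SubAIMs, execution reported in a CCX Status) can be sketched as a simple dispatcher. This is a hypothetical Python illustration, not the reference software: the input keys, the `modalities` directive field, the status fields, and the choice to fold the 3D Model Scene Description output into the Visual Scene Descriptors (Table 1 lists no separate 3D output) are all assumptions.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

# A SubAIM is modelled as a function from a raw input object to a descriptor dict.
SubAim = Callable[[Any], Dict[str, Any]]

# Hypothetical routing table: input key -> SubAIM identifier.
ROUTES = {"audio": "OSD-ASD", "visual": "OSD-VSD", "model3d": "OSD-3SD"}


@dataclass
class CcxResult:
    text: str = ""  # Text Object, passed through unchanged
    audio_scene_descriptors: Dict[str, Any] = field(default_factory=dict)
    visual_scene_descriptors: Dict[str, Any] = field(default_factory=dict)
    status: Dict[str, Any] = field(default_factory=dict)  # stand-in for CCX Status


def context_capture(directive: Dict[str, Any], inputs: Dict[str, Any],
                    subaims: Dict[str, SubAim]) -> CcxResult:
    """Run one capture cycle in response to a (hypothetical) CCX Directive."""
    result = CcxResult(text=str(inputs.get("text", "")))
    # Assumed directive field: which modalities to process (default: all).
    enabled = directive.get("modalities", list(ROUTES))
    ran: List[str] = []
    for key, aim_id in ROUTES.items():
        if key not in inputs or key not in enabled:
            continue
        descriptors = subaims[aim_id](inputs[key])
        ran.append(aim_id)
        if key == "audio":
            result.audio_scene_descriptors.update(descriptors)
        else:
            # Assumption: 3D Model and Visual descriptors both feed the
            # Visual Scene Descriptors output.
            result.visual_scene_descriptors.update(descriptors)
    result.status = {"executed": ran, "text_passed_through": "text" in inputs}
    return result


# Usage with stub SubAIMs that just label their input.
stubs = {aim: (lambda x, a=aim: {a: f"descriptors of {x}"}) for aim in ROUTES.values()}
r = context_capture({"modalities": ["audio", "visual"]},
                    {"text": "look at that corner", "audio": "mic0",
                     "visual": "cam0", "model3d": "mesh0"},
                    stubs)
print(r.status)  # {'executed': ['OSD-ASD', 'OSD-VSD'], 'text_passed_through': True}
```

In this sketch the directive only selects modalities; real CCX Directives may also carry acquisition parameters and framing rules, as Table 1 indicates.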

4.3 Functions of AI Modules

The functions of the SubAIMs are those specified for the 3D Model Scene Description, Audio Scene Description, and Visual Scene Description AIMs.

4.4 I/O Data of AI Modules

Table 3 specifies the Input and Output Data of the AI Modules.

Table 3 – Input and Output Data of the AI Modules

4.5 AIMs and JSON Metadata

Table 4 provides the links to the AIW and AIM specifications and to the JSON schemas. The AIMs1 column contains Composite AIMs and the AIMs2 column contains their SubAIMs.

Table 4 – AIMs and JSON Metadata

| AIMs1 | AIMs2 | Name | JSON |
|---|---|---|---|
| PGM-CXC | | Context Capture | X |
| | OSD-3SD | 3D Model Scene Description | X |
| | OSD-ASD | Audio Scene Description | X |
| | OSD-VSD | Visual Scene Description | X |

5. JSON Metadata

https://schemas.mpai.community/PGM1/V1.0/AIMs/ContextCapture.json

6. Profiles

No Profiles.