Visiting MPAI standards – Connected Autonomous Vehicles (MPAI-CAV) – Architecture

MPAI-36 (September 2023) approved the publication of five standards. Two are extensions of standards that were already published and adopted by IEEE without modifications, and three are brand new. This is an overview of the main content of one of the new standards, Technical Specification: Connected Autonomous Vehicles – Architecture (MPAI-CAV).

MPAI works on CAV standards because replacing current vehicles with CAVs is desirable from many viewpoints. CAVs are expected to offer a safer drive by replacing human errors with machine errors, which are expected to be less frequent. They should also allow more time for rewarding activities, offer opportunities for better use of vehicles and road infrastructure, enable more sophisticated traffic management, reduce congestion and pollution, and help elderly and disabled people have a better life. On the other hand, CAVs are available today more for experimental than regular use. One reason is that, unlike the current highly componentised automotive industry, CAV companies are monolithic: they develop internally and assemble all the components they need to make their CAVs. Another is that the level of reliability would be insufficient if CAVs were deployed in massive numbers.

A CAV standard would help the CAV industry mature faster. But which standard? First, the standard MPAI is targeting would relate not to the “hardware” side but to the “software” side of a CAV. Second, the standard should not address a CAV in its entirety but adopt a component approach, not unlike what the automotive industry does for the “hardware” side.

A component approach is useful for the two expected stages of the process. The first stage applies now: research can concentrate on a specific component, defined by its function and interface, and optimise the performance of that component, possibly proposing revised functions and interfaces. The second stage applies once CAVs have reached a sufficient level of performance and component-based mass production of CAVs becomes attractive. An open market of CAV components can then form naturally, where competing providers offer components with standard functions and interfaces but with performance that differs from what is available on the market at that time.

The MPAI-CAV – Architecture standard

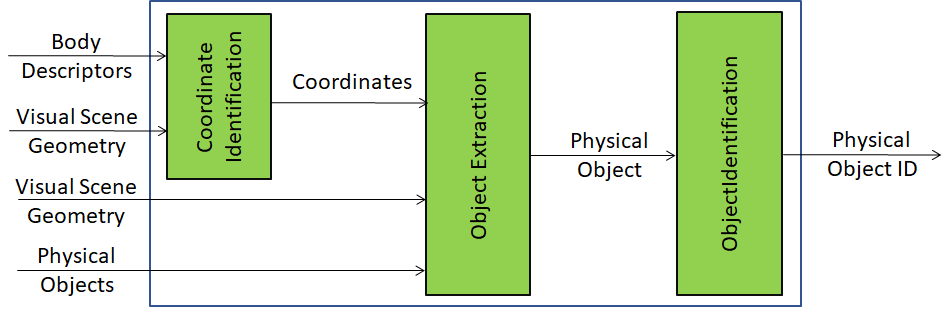

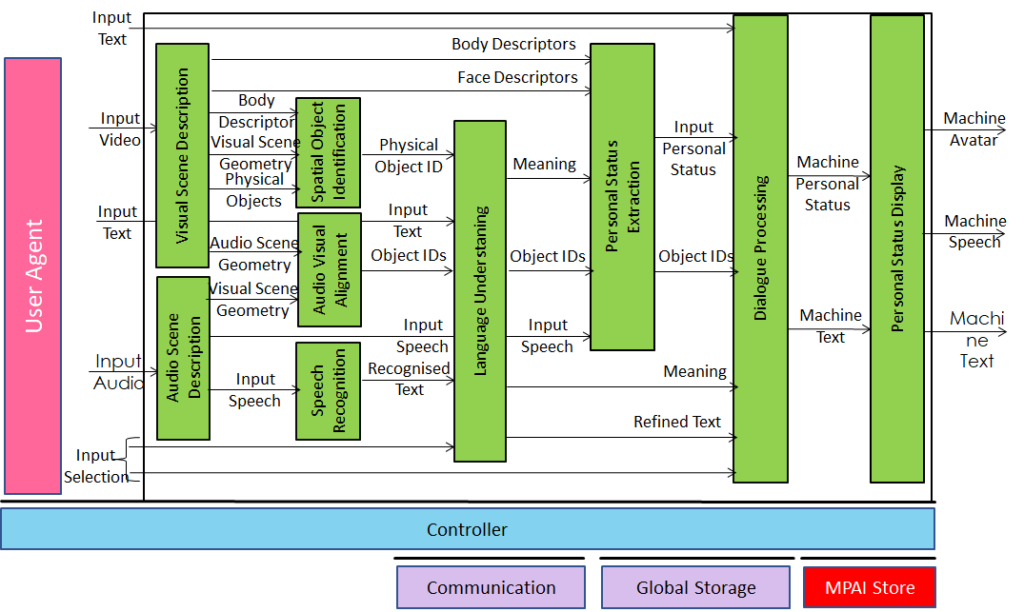

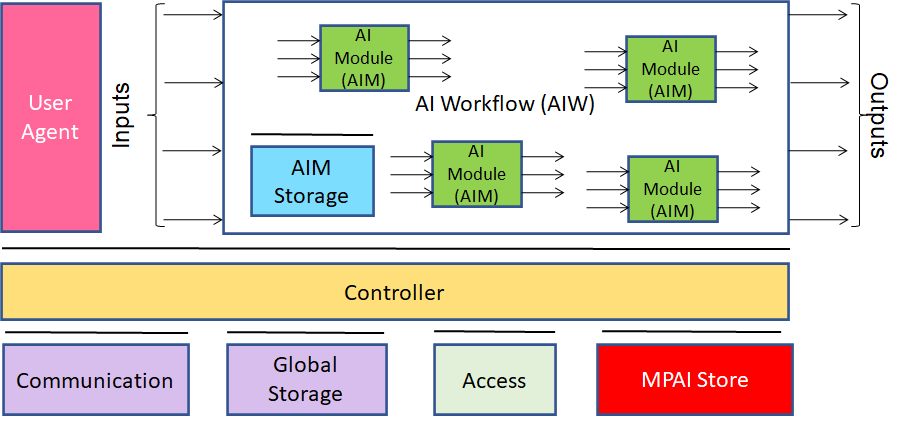

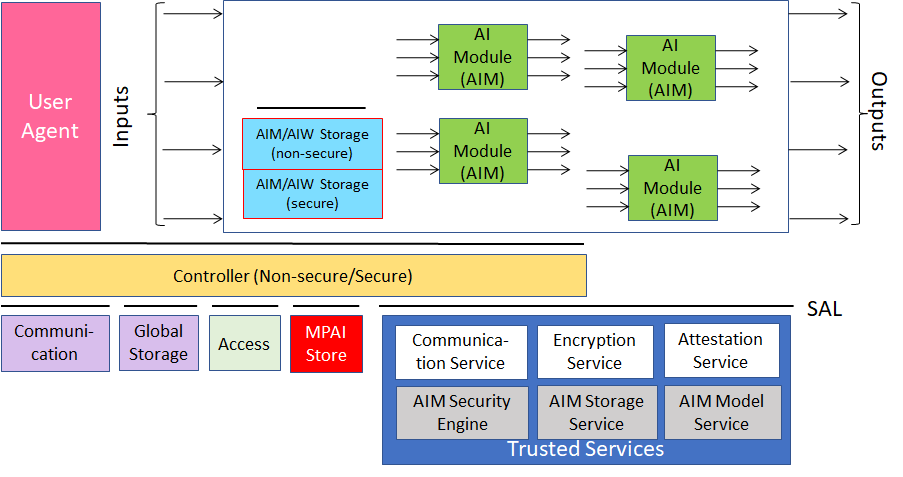

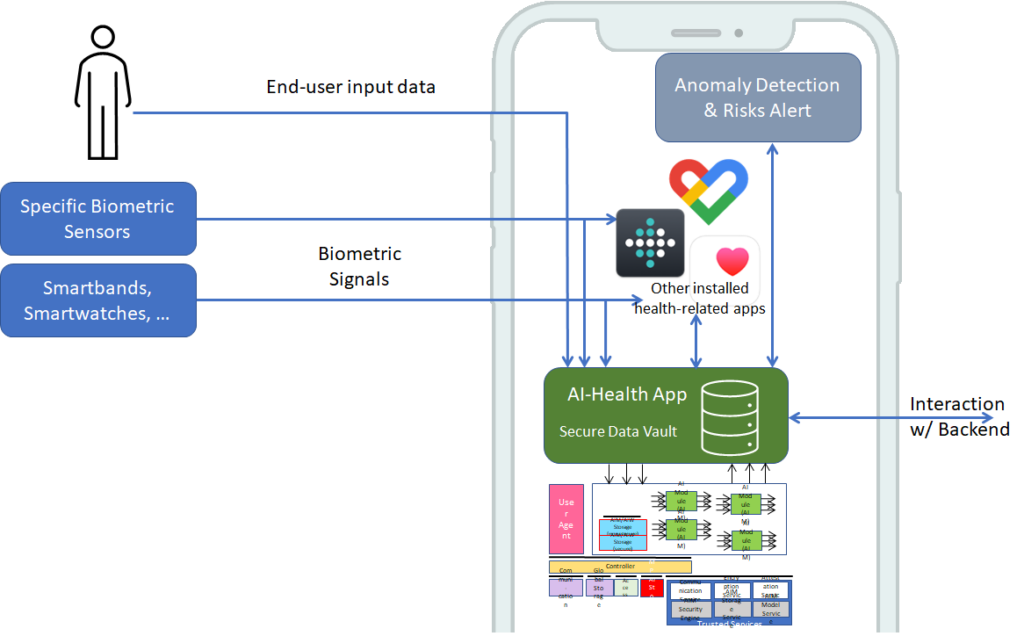

MPAI-CAV – Architecture is a standard implementing the first step of this strategy. It defines a CAV Reference Model composed of four subsystems, each composed of interconnected components. Technical Specification: AI Framework (MPAI-AIF) is the natural choice for implementing this Reference Model. The AI Framework (AIF) specified by that standard is the environment executing AI Workflows (AIW) that correspond to the subsystems of the Reference Model. Each subsystem is composed of components called AI Modules (AIM).
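
The containment described above (an AIF runs AIWs, and each AIW is made of interconnected AIMs) can be sketched as plain data structures. This is only an illustration under stated assumptions: the class names, fields, and the two example modules are hypothetical and are not taken from the MPAI-AIF or MPAI-CAV specifications.

```python
from dataclasses import dataclass, field


@dataclass
class AIModule:
    """A component (AIM): a named function with declared inputs and outputs."""
    name: str
    inputs: list[str]
    outputs: list[str]


@dataclass
class AIWorkflow:
    """A subsystem (AIW): a set of interconnected AIMs."""
    name: str
    modules: list[AIModule] = field(default_factory=list)

    def connections(self) -> list[tuple[str, str, str]]:
        """Return (producer, consumer, data) for each output another AIM consumes."""
        links = []
        for producer in self.modules:
            for consumer in self.modules:
                if producer is consumer:
                    continue
                for data in producer.outputs:
                    if data in consumer.inputs:
                        links.append((producer.name, consumer.name, data))
        return links


# Hypothetical two-module workflow; module and data names are illustrative only.
ess = AIWorkflow(
    name="Environment Sensing Subsystem",
    modules=[
        AIModule("LidarSceneUnderstanding", ["LidarData"], ["LidarSceneDescriptors"]),
        AIModule("BERFusion", ["LidarSceneDescriptors"], ["BasicEnvironmentRepresentation"]),
    ],
)
links = ess.connections()
print(links)
```

Because each module is described only by its name and its input/output data, a module from one provider could in principle be swapped for another with the same interface, which is the point of the component approach.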

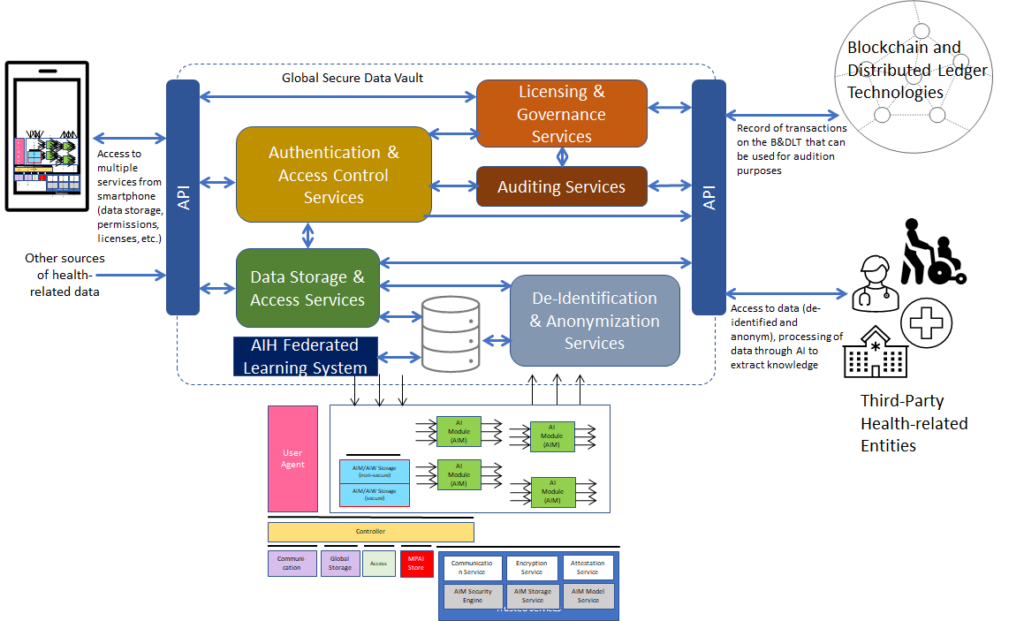

The subsystem-level Reference Model is represented in Figure 1.

Figure 1 – The MPAI-CAV – Architecture Reference Model

There are four subsystem-level reference models, each specified in terms of:

- The functions the subsystem performs.

- The AIF-based Reference Model.

- The input/output data exchanged by the CAV subsystem with other subsystems and the environment.

- The functions of each subsystem's components, to be implemented as AI Modules.

- The input/output data exchanged by the component with other components.

The functions and reference models of the MPAI-CAV – Architecture Subsystems will be presented next.

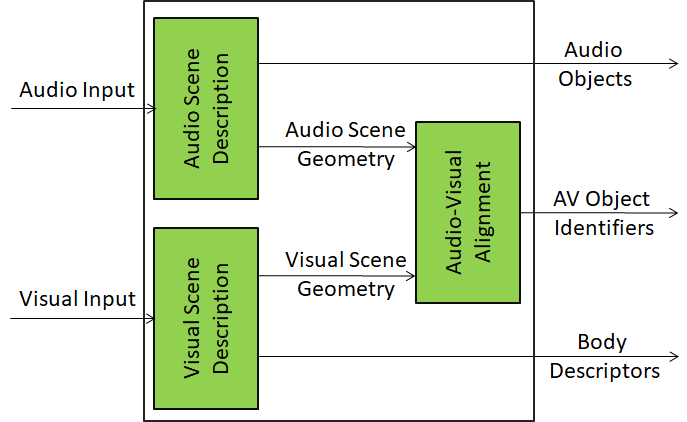

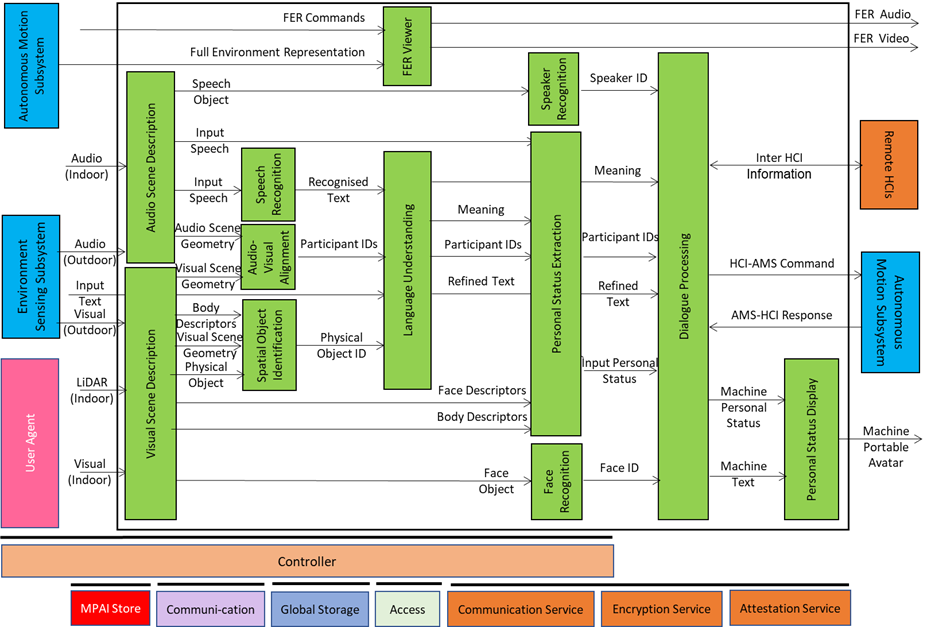

Human-CAV Interaction (HCI)

The HCI functions are:

- To authenticate humans, e.g., to let them into the CAV.

- To converse with humans by interpreting their utterances, e.g., a request to go to a destination or an utterance made during a conversation.

- To converse with the Autonomous Motion Subsystem to implement and execute human commands.

- To enable passengers to navigate the Full Environment Representation (FER), i.e., the best representation of the external environment achieved by the CAV.

- To appear as a speaking avatar showing a Personal Status, i.e., a simulated internal status of the machine represented according to the criteria used by humans (see https://mpai.community/standards/mpai-mmc/about-mpai-mmc/).

The HCI Reference Model is depicted in Figure 2.

Figure 2 – Human CAV Interaction Reference Model

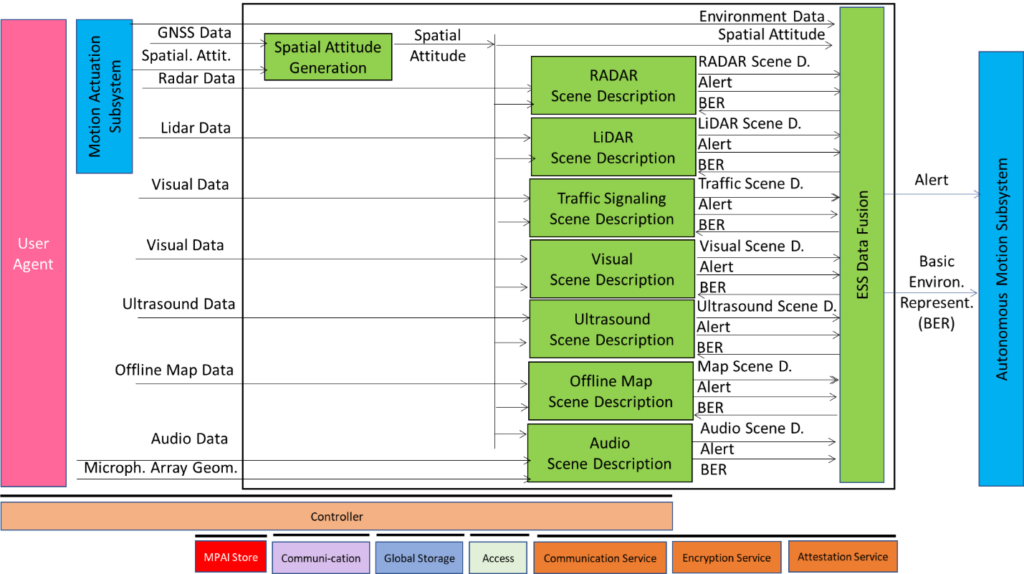

Environment Sensing Subsystem (ESS)

The ESS functions are:

- To acquire Environment information using Subsystem’s RADAR, LiDAR, Cameras, Ultrasound, Offline Map, Audio, GNSS, …

- To receive the Ego CAV’s position, orientation, and environment data (temperature, humidity, etc.) from the Motion Actuation Subsystem.

- To produce Scene Descriptors for each sensor technology in a common format.

- To produce the Basic Environment Representation (BER) by integrating the sensor-specific Scene Descriptors produced during the travel.

- To hand over the BERs, including Alerts, to the Autonomous Motion Subsystem.
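
The integration step in the list above can be sketched as follows. The standard does not prescribe a fusion method here, so this sketch assumes a deliberately simple one: positions reported by different sensors for the same object id are averaged, and Alerts are collected without duplicates. The `SceneDescriptors` layout, the object ids, and `build_ber` are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SceneDescriptors:
    """Per-sensor descriptors in one common format (layout assumed for this sketch)."""
    sensor: str
    objects: dict[str, tuple[float, float]]  # object id -> (x, y) in metres
    alerts: list[str] = field(default_factory=list)


@dataclass
class BasicEnvironmentRepresentation:
    objects: dict[str, tuple[float, float]]
    alerts: list[str]


def build_ber(descriptors: list[SceneDescriptors]) -> BasicEnvironmentRepresentation:
    """Average the positions reported for the same object id and merge Alerts."""
    sums: dict[str, list[float]] = {}
    alerts: list[str] = []
    for sd in descriptors:
        for obj_id, (x, y) in sd.objects.items():
            acc = sums.setdefault(obj_id, [0.0, 0.0, 0.0])
            acc[0] += x
            acc[1] += y
            acc[2] += 1.0  # number of sensors that reported this object
        for alert in sd.alerts:
            if alert not in alerts:
                alerts.append(alert)
    objects = {k: (sx / n, sy / n) for k, (sx, sy, n) in sums.items()}
    return BasicEnvironmentRepresentation(objects, alerts)


# Illustrative input: two sensors that partly see the same objects.
lidar = SceneDescriptors("LiDAR", {"car-1": (10.0, 2.0)})
radar = SceneDescriptors(
    "RADAR",
    {"car-1": (12.0, 2.0), "ped-1": (3.0, -1.0)},
    alerts=["pedestrian close"],
)
ber = build_ber([lidar, radar])
print(ber)
```

The common format is what makes this step possible: once every sensor technology emits descriptors with the same layout, the integration code does not need to know which sensor produced them.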

The ESS Reference Model is depicted in Figure 3.

Figure 3 – Environment Sensing Subsystem Reference Model

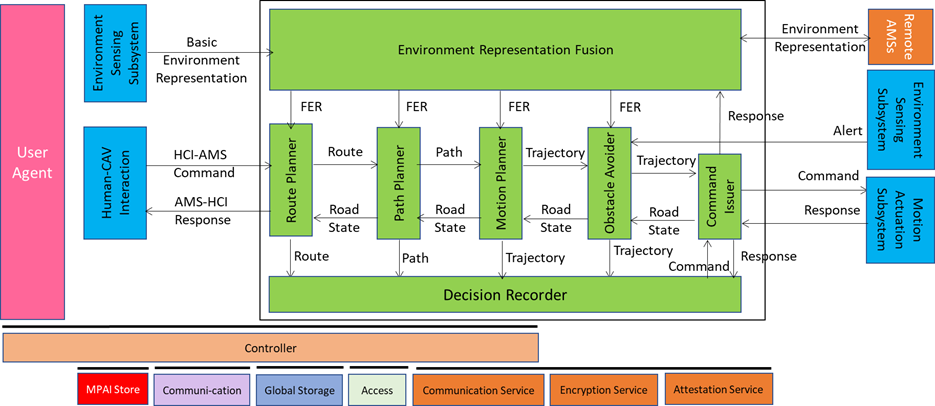

Autonomous Motion Subsystem (AMS)

The AMS functions are:

- To compute and execute the human-requested Route(s).

- To receive the current BER from the Environment Sensing Subsystem.

- To communicate with other CAVs’ AMSs (e.g., to exchange subsets of BER and other data).

- To produce the Full Environment Representation by fusing its own BER with information from other CAVs in range.

- To send Commands to the Motion Actuation Subsystem to take the CAV to the next Pose.

- To receive and analyse responses from the MAS.
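
One pass through the AMS functions listed above might look like the sketch below. It rests on several assumptions that are not part of the standard: the Ego CAV's own BER takes precedence over data from other CAVs, a Command is a small dictionary, and a fixed clearance radius decides whether the next Pose is free. All function names and the dictionary layout are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Optional

Position = tuple[float, float]  # (x, y) in metres, in a shared frame (assumed)


@dataclass
class Pose:
    x: float
    y: float
    heading_deg: float


def fuse_fer(own_ber: dict[str, Position],
             remote_subsets: list[dict[str, Position]]) -> dict[str, Position]:
    """Build the FER: remote data adds unseen objects, own BER wins on conflicts."""
    fer: dict[str, Position] = {}
    for subset in remote_subsets:
        fer.update(subset)
    fer.update(own_ber)
    return fer


def command_for(target: Pose, fer: dict[str, Position],
                clearance_m: float = 2.0) -> dict:
    """Command a move to the next Pose, or a stop if any FER object is too close to it."""
    for x, y in fer.values():
        if math.hypot(x - target.x, y - target.y) < clearance_m:
            return {"type": "stop"}
    return {"type": "move", "pose": target}


def handle_response(response: dict, command: dict) -> Optional[dict]:
    """Return the Command to re-issue if the MAS did not execute it, else None."""
    return None if response.get("executed") else command


# Illustrative data: one object seen by both CAVs, one seen only remotely.
own = {"car-1": (11.0, 2.0)}
others = [{"car-1": (11.5, 2.1), "truck-7": (40.0, 0.0)}]
fer = fuse_fer(own, others)
free = command_for(Pose(20.0, 0.0, 0.0), fer)
blocked = command_for(Pose(40.5, 0.0, 0.0), fer)
print(fer, free["type"], blocked["type"])
```

In this example the truck is known only through another CAV's data, yet it is what causes the second Command to be a stop, which illustrates why the FER is richer than the BER alone.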

The AMS Reference Model is depicted in Figure 4.

Figure 4 – Autonomous Motion Subsystem Reference Model

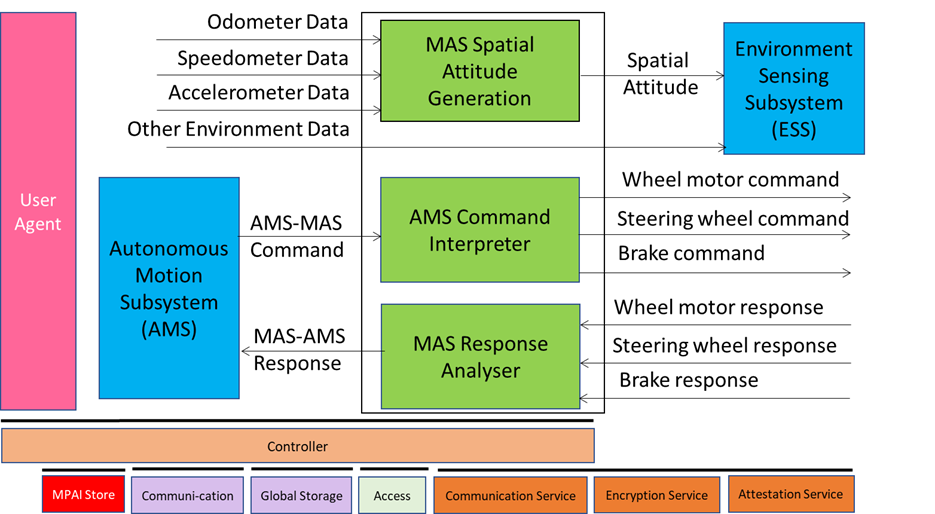

Motion Actuation Subsystem (MAS)

The MAS functions are:

- To transmit spatial/environmental information from sensors/mechanical subsystems to the Environment Sensing Subsystem.

- To receive Autonomous Motion Subsystem Commands.

- To translate Commands into specific Commands to its own mechanical subsystems, e.g., steering, brakes, wheel directions, and wheel motors.

- To receive and analyse Responses from its mechanical subsystems.

- To send Responses to the Autonomous Motion Subsystem about the execution of Commands.
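
The translation and response steps above can be sketched as two small functions. The Command fields, the actuator names, and the rule that a speed deficit goes to the wheel motors while a speed excess goes to the brakes are assumptions made for this example, not definitions from the standard.

```python
from dataclasses import dataclass


@dataclass
class AMSCommand:
    """A Command received from the AMS (fields assumed for this sketch)."""
    target_speed_mps: float
    steering_angle_deg: float


def translate(cmd: AMSCommand, current_speed_mps: float) -> dict[str, float]:
    """Map one AMS Command to set-points for each mechanical subsystem."""
    delta = cmd.target_speed_mps - current_speed_mps
    return {
        "steering": cmd.steering_angle_deg,
        "wheel_motors": max(delta, 0.0),  # accelerate only when below target speed
        "brakes": max(-delta, 0.0),       # brake only when above target speed
    }


def summarise(responses: dict[str, bool]) -> dict:
    """Collapse per-subsystem Responses into one Response for the AMS."""
    failed = sorted(name for name, ok in responses.items() if not ok)
    return {"executed": not failed, "failed": failed}


# Illustrative case: the CAV travels at 14 m/s and is asked to slow to 10 m/s.
setpoints = translate(AMSCommand(target_speed_mps=10.0, steering_angle_deg=5.0), 14.0)
report = summarise({"steering": True, "wheel_motors": True, "brakes": False})
print(setpoints, report)
```

Keeping the translation inside the MAS means the AMS can issue the same Commands regardless of how a particular vehicle's mechanical subsystems are built.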

The MAS Reference Model is depicted in Figure 5.

Figure 5 – Motion Actuation Subsystem Reference Model

The MPAI-CAV – Architecture standard is the starting point for the next steps of the MPAI-CAV roadmap, which address the functional requirements of the data exchanged by subsystems and components.