1 Version

V2.1

2 Functions

Emotion-Enhanced Speech (EES):

- Enables a user to indicate a model utterance or an Emotion to obtain an emotionally charged version of a given utterance.

- Converts an individual emotionless speech segment to a segment that has a specified emotion. Both input and output speech segments are contained in files. The desired emotion is either expressed as a tag belonging to a standard list of emotions or derived by extracting features from a model utterance.

3 Reference Model

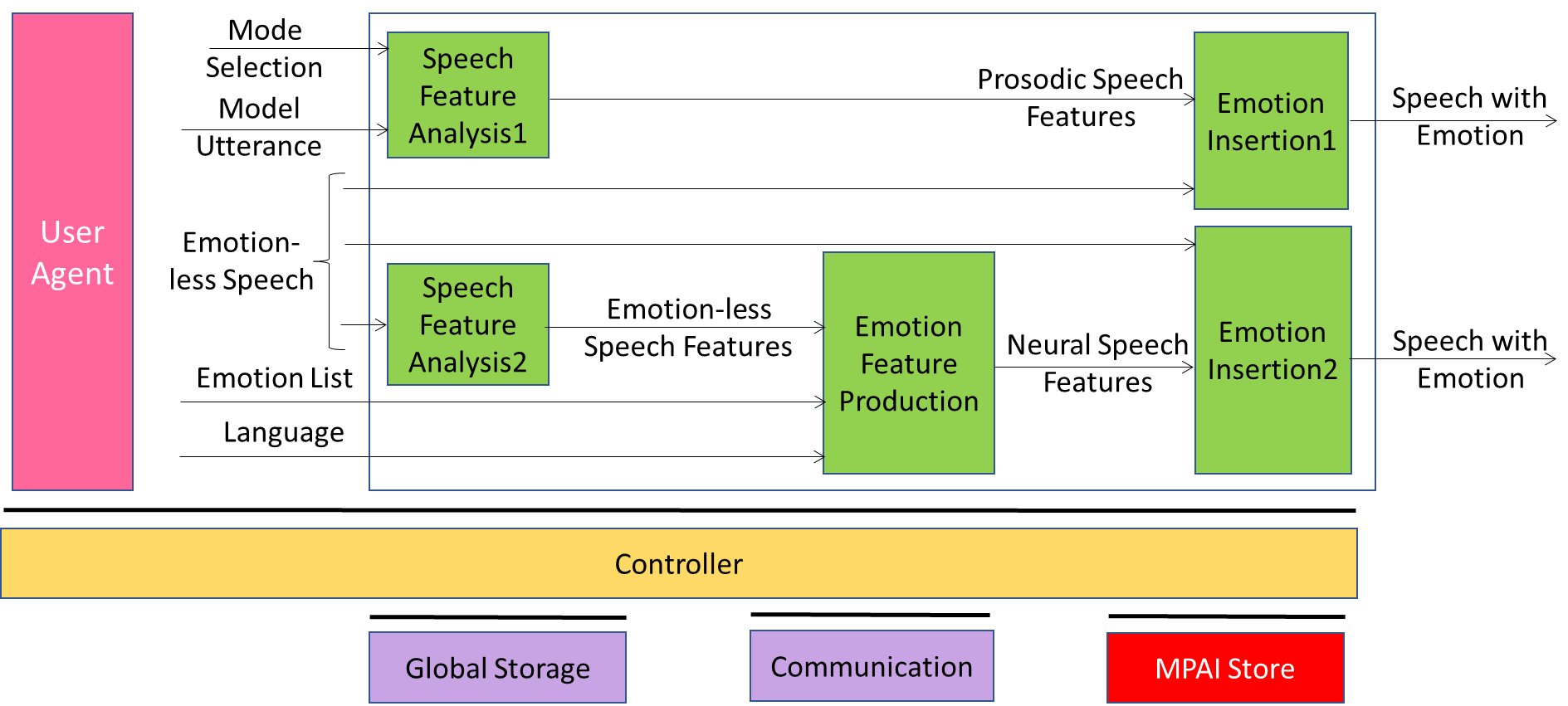

Figure 1 – Emotion-Enhanced Speech (CAE-EES) AIW

The Emotion-Enhanced Speech Reference Model depicted in Figure 1 supports two Modes, or pathways, for adding emotional charge to an emotionless (neutral) input utterance (Emotionless Speech).

- Along Pathway 1 (upper and middle left), a Model Utterance is input together with the neutral Emotionless Speech utterance, so that features of the former can be captured and transferred to the latter.

- Alternatively, along Pathway 2 (middle and lower left), the neutral Emotionless Speech utterance is input along with a specification of the desired Emotion. Speech Feature Analysis 2 extracts Emotionless Speech Features, which describe the initial state of Emotionless Speech. These are sent to Emotion Feature Production, which produces (emotional) Speech Features 2 that can add the desired emotional charge to Emotionless Speech. These Speech Features 2 are sent to Emotion Insertion 2, which combines them with Emotionless Speech to produce Speech with Emotion as output.
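The data flow along Pathway 2 can be sketched as three chained stages. This is only an illustrative sketch under stated assumptions: the function names mirror the module names above, but their bodies, the `EMOTION_GAIN` table, the `"energy"` and `"gain"` feature keys, and the representation of audio as a list of floats are all hypothetical placeholders, not the processing defined by the specification.

```python
from typing import Dict, List

Samples = List[float]        # assumption: audio held as a plain list of samples
Features = Dict[str, float]  # assumption: a feature set held as a name -> value map

# Hypothetical mapping from an Emotion tag to a gain; not part of the specification.
EMOTION_GAIN = {"happy": 1.5, "sad": 0.5}


def speech_feature_analysis_2(emotionless_speech: Samples) -> Features:
    """Extract Emotionless Speech Features (placeholder: mean absolute amplitude)."""
    n = len(emotionless_speech)
    energy = sum(abs(s) for s in emotionless_speech) / n if n else 0.0
    return {"energy": energy}


def emotion_feature_production(emotionless_features: Features, emotion: str) -> Features:
    """Produce (emotional) Speech Features for the desired Emotion (placeholder)."""
    gain = EMOTION_GAIN[emotion]
    return {"energy": emotionless_features["energy"] * gain, "gain": gain}


def emotion_insertion_2(emotionless_speech: Samples, speech_features: Features) -> Samples:
    """Combine Emotionless Speech with the Speech Features (placeholder: scaling)."""
    return [s * speech_features["gain"] for s in emotionless_speech]


def pathway_2(emotionless_speech: Samples, emotion: str) -> Samples:
    """Chain the three stages in the order described for Pathway 2."""
    emotionless_features = speech_feature_analysis_2(emotionless_speech)
    speech_features = emotion_feature_production(emotionless_features, emotion)
    return emotion_insertion_2(emotionless_speech, speech_features)
```

The point of the sketch is the ordering of the stages: Emotion Insertion 2 receives both the original Emotionless Speech and the produced features, while Emotion Feature Production sees only the extracted features and the Emotion tag.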

4 Input/Output Data

| Input | Comments |
| --- | --- |
| Emotionless Speech | An Audio File containing speech without music and other sounds, and in which little or no identifiable emotion is perceptible by native listeners. |
| Emotion | One of the human Emotions. |
| Model Utterance | An Audio Segment used as a model or demonstration of the Emotion to be added to Emotionless Speech in order to produce Speech with Emotion. |

| Output data | Comments |
| --- | --- |
| Speech with Emotion | Audio containing speech with emotional features. |
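As a rough illustration of how a caller might package these inputs and decide which pathway applies, the sketch below uses a small data class. The names `EESInput` and `select_pathway`, the use of file paths as strings, and the rule that exactly one of Model Utterance or Emotion must be supplied are assumptions made for this example; the specification only states that the desired emotion comes from one source or the other.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EESInput:
    """Hypothetical container for the EES inputs listed above."""
    emotionless_speech: str                # path to the Emotionless Speech audio file
    emotion: Optional[str] = None          # Emotion tag (Pathway 2)
    model_utterance: Optional[str] = None  # path to the Model Utterance (Pathway 1)


def select_pathway(inputs: EESInput) -> int:
    """Return 1 if a Model Utterance is given, 2 if an Emotion tag is given.

    Assumption: supplying both or neither is treated as an error here.
    """
    has_model = inputs.model_utterance is not None
    has_emotion = inputs.emotion is not None
    if has_model == has_emotion:
        raise ValueError("provide exactly one of model_utterance or emotion")
    return 1 if has_model else 2
```

For example, `select_pathway(EESInput("neutral.wav", emotion="happy"))` selects Pathway 2, while passing `model_utterance="model.wav"` instead selects Pathway 1.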

5 SubAIMs

| AIMs | Name | JSON |
| --- | --- | --- |
| CAE-SF1 | Speech Feature Analysis 1 | X |
| CAE-SF2 | Speech Feature Analysis 2 | X |
| CAE-EFP | Emotion Feature Production | X |
| CAE-EI1 | Emotion Insertion 1 | X |
| CAE-EI2 | Emotion Insertion 2 | X |

6 JSON Metadata

https://schemas.mpai.community/CAE/V2.1/AIWs/EmotionEnhancedSpeech.json