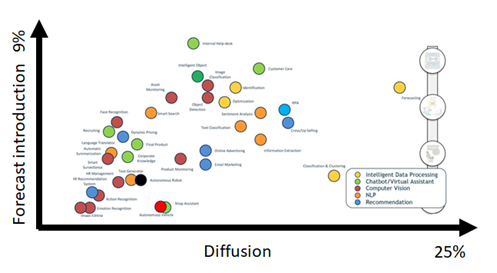

Some may not have realised it, but many AI applications already permeate everyday life, and AI has the potential to transform practically all aspects of our lives and of the economy in general. This is shown in Figure 2, and some of the fields indicated there are analysed below.

- Health: analysing large amounts of medical data to discover matches and patterns that improve diagnosis and prevention; developing programs that respond to emergency calls by recognising a cardiac arrest faster than a human operator; developing multilingual text search tools that make it easier to find the most relevant medical information available.

- Transport: improving the safety, speed, and efficiency of rail traffic, and the initial use of AI for autonomous driving.

- Industry: using robots to bring factories back; sales channel planning and predictive maintenance; collaborative and augmented reality systems to increase worker satisfaction in smart factories.

- Agriculture and food supply chain: building sustainable food systems through forms of analytics and monitoring to minimise fertiliser, pesticide, and irrigation use, helping productivity, and reducing environmental impact; monitoring movement, temperature and feeding of livestock.

- Public administration and services: improving the efficiency of natural disaster alert systems to enable prevention, preparedness, and resilience.

Figure 2 – AI application maturity (source: osservatori.net)

| 3.1 | Application of AI to traditional IT |

| 3.2 | AI as a business opportunity |

| 3.3 | AI: a very wide field |

| 3.4 | Dealing with AI: high level of expertise |

| 3.5 | Defining standards for AI use |

| 3.6 | The right to know it is AI |

| 3.7 | Is AI a new speculative bubble? |

3.1 Application of AI to traditional IT

AI was born in the 1950s, but only today have advances in computing power, data availability, and the ability of data analysis to solve complex problems triggered the creation and dissemination of AI applications. The basic technologies are mature and, through APIs and cloud services, available at an affordable cost. However, a design approach is needed to introduce AI into processes. Whereas up to 10 years ago the barriers to introducing AI in companies were missing tools or inadequate analytical skills, most issues today are not technological but cultural, together with a lack of specific skills. According to experts, today 70% of the effort in an AI project goes to process redesign, 10% to algorithm development and only 10% to technology.

AI and ML are therefore lightening the workloads of help desk, cybersecurity, and other typical IT tasks. In 12 out of 13 major vertical industries, the segment that makes the most use of AI is IT, with more than 46% of IT teams at large companies integrating AI into their applications. AI technologies thus present significant opportunities for IT professionals: the ability to implement AI technology and integrate it with other tools and services to achieve maximum business value opens new career paths. Even at the most basic level, AI frees IT professionals from repetitive tasks, allowing them to focus on work of higher value.

But what are the distinctive capabilities of an AI system compared to traditional data processing (DP)? A traditional system basically performs two functions, data storage and processing, and the processing is done by preset programs that apply more or less complex deterministic algorithms and formulas to structured databases. An AI system, in contrast to this traditional concept of programming, can process both structured and unstructured data to formulate hypotheses based on the knowledge domain on which it has been trained. From the point of view of intellectual abilities, the functioning of an AI system is expressed mainly through four functional levels:

- Understanding: by simulating the cognitive capacity to correlate data and events, AI can recognise texts, images, tables, videos and voice, and extrapolate information from them.

- Reasoning: through logic, the systems can connect data collected from multiple sources automatically, using mathematical algorithms.

- Learning: systems with specific features for analysing input data and returning the “correct” output. This is the classic example of ML systems.

- Interaction: the way AI works in relation to humans, e.g., Natural Language Processing (NLP), a set of technologies that allow humans to interact with machines using natural language and that enable virtual assistants and chatbots.
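The difference between a preset deterministic program and a system trained on a knowledge domain can be illustrated with a deliberately tiny sketch. It is not taken from the text: the help desk tickets, the labels and the function names `preset_rule`, `train` and `predict` are all hypothetical, and the word-counting "model" is far simpler than any real ML system.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple


def preset_rule(ticket: str) -> str:
    """Traditional approach: a programmer hard-codes the decision logic."""
    return "network" if "wifi" in ticket.lower() else "other"


def train(examples: List[Tuple[str, str]]) -> Dict[str, Counter]:
    """Learning approach: count which words appear under each label."""
    word_counts: Dict[str, Counter] = defaultdict(Counter)
    for text, label in examples:
        word_counts[label].update(text.lower().split())
    return word_counts


def predict(word_counts: Dict[str, Counter], ticket: str) -> str:
    """Pick the label whose training vocabulary overlaps most with the ticket."""
    words = ticket.lower().split()
    return max(
        word_counts,
        key=lambda label: sum(word_counts[label][w] for w in words),
    )


# Hypothetical labelled help desk tickets (unstructured text plus a label).
examples = [
    ("wifi keeps dropping", "network"),
    ("router connection lost", "network"),
    ("cannot reset password", "account"),
    ("account locked after login", "account"),
]
model = train(examples)

ticket = "connection lost again"
rule_answer = preset_rule(ticket)        # the fixed rule only knows "wifi"
learned_answer = predict(model, ticket)  # the trained model generalises from examples
```

In this sketch the hard-coded rule misses a ticket that does not contain the expected keyword, while the trained model assigns it to the network category because of the examples it has seen. Changing the behaviour of the second system means changing its training data rather than its code.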

3.2 AI as a business opportunity

The introduction of AI as a tool for business development is a relatively recent topic for small and medium enterprises, although it has been on the agenda for quite a few years. Apart from some companies that make this subject their core business (e.g., those operating in high-tech or in specific sectors, such as producers of diagnostic machinery), most companies find themselves considering the adoption of AI because they (correctly) sense the great potential of the technology, while failing to identify its contours and, above all, its potential benefits.

Talking to entrepreneurs shows that there are two main questions: first, how AI can help their business and, second, what they need in order to implement it, correctly assessing time, costs, and benefits in the long run. As already stated, the topic of AI and its impact on society and on economic and industrial systems is lively and current, and AI is without a doubt one of the most promising technological enablers of the digitalisation process. In the current phase of the industrial revolution, a process of digital transformation is taking place whose objective is to provide companies with organisational models able to rapidly and continuously introduce new technologies to support process innovation across entire sectors.

Manufacturing, assembly, and distribution systems have always produced enormous amounts of data. Today, thanks to intelligent processing and analysis systems, these data can be used productively along the entire value chain, taking on an increasingly decisive guiding role in corporate decision-making. The introduction of AI systems in a business process can be compared with the impact of the introduction of mechanisation in industry in the mid-eighteenth century: a decisive step in many productive sectors that improved the conditions of workers then engaged in repetitive or exhausting work, replacing human or animal force with mechanical force generated by combustion in an engine, and that set off a whole series of economic and social changes.

AI therefore has the objective, or rather the ambition, of emulating the cognitive abilities of human beings and increasing their effectiveness. A traditional computer system is used to perform complex operations and to record and store huge amounts of data, doing both at levels of speed and accuracy that no human mind can approach. Today, AI can express extraordinary new abilities (such as emulating reasoning, analysing unstructured data, interpreting language, reading images and text, and reasoning in probabilistic terms) that can give the individual, as worker and citizen, new capabilities and enormous advantages in work and personal life.

AI will change the relationship between humans and machines for the better, in a perspective of “collaborative intelligence”, and this will offer many opportunities. As information technology and the processing capabilities of computers and information analysis systems continue to progress, it is easy to imagine a world in which machines replace some of the activities now performed by humans.

For a long time, economists have been wondering which tools to activate to prevent society from evolving towards an economy that needs ever less human labour, an evolution now accelerated by AI, with a resulting impoverishment of the population. Alongside these social issues, there are ethical questions about the development and evolution of AI and new technologies. The fears might seem excessive, but underestimating the impact of AI could be the number one risk. In fact, despite the rapid ongoing evolution, machines and expert systems will continue to be at the side of humans as assistants in the tasks to be performed, whether in a factory or integrated into our daily lives.

3.3 AI: a very wide field

Today, there are noteworthy use cases in many fields and industries, such as healthcare (Intelligent Health), finance (Financial Trading) and automotive (Connected Cars, Autonomous Vehicles), as well as scenarios that are now commonplace, such as security and emergency services (Image Processing, Computer Vision, Object Detection and Recognition) and marketing personalisation (Natural Language Processing for Sentiment Analysis).

Turning to AI in industry, automation has for some time brought robots that perform complex jobs at very high efficiency on assembly lines, but these robots are often built to perform a single task, and the cost of reprogramming them is very high, if it is possible at all. This is automation, not AI. However, the first adaptive and collaborative robots are already being designed: robots equipped with AI that can learn different tasks through learning by demonstration, with hardware better suited to task reconfiguration. Adaptive manufacturing is also strategic for industry and requires AI solutions that can adapt to different human scenarios, together with flexible IT infrastructures that can adapt to the people accordingly.

In manufacturing plants, ML is increasingly being used for anomaly management and predictive maintenance. The next step is production systems that can intervene autonomously based on experience: systems that are not limited to acting when thresholds are exceeded, according to pre-established rules, but that learn from previous analyses and build a representation of the production process from “non-programmed” variables. This type of intelligence is required for the interaction of complex, integrated automated systems, for example to ensure the operation of a highly automated extended supply chain, and it is one of the main areas of investment for many companies.
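To make the distinction between a pre-established threshold and a rule derived from experience concrete, the following minimal sketch compares the two on a single sensor reading. Everything in it is an assumption made for illustration: the vibration values, the limit `FIXED_LIMIT`, the factor `k` and the function names do not come from the text, and a real predictive maintenance system would use far richer models than a mean and a standard deviation.

```python
import statistics
from typing import List

FIXED_LIMIT = 10.0  # hypothetical pre-established vibration limit (mm/s)


def fixed_rule(reading: float) -> bool:
    """Pre-established rule: alert only when a hard-coded threshold is exceeded."""
    return reading > FIXED_LIMIT


def learned_rule(history: List[float], reading: float, k: float = 3.0) -> bool:
    """Rule derived from past data: alert when the reading deviates from the
    machine's own normal behaviour by more than k standard deviations."""
    mean = statistics.mean(history)
    spread = statistics.stdev(history)
    return abs(reading - mean) > k * spread


# Hypothetical history of normal readings for one machine.
history = [4.0, 4.2, 3.9, 4.1, 4.0, 3.8, 4.0]
reading = 6.5

fixed_alert = fixed_rule(reading)               # still below the global limit
learned_alert = learned_rule(history, reading)  # unusual for this machine
```

Here the reading stays under the fixed limit, so the preset rule is silent, while the rule built from the machine's own history flags it as abnormal. The baseline moves with the data, which is the sense in which the system "learns from previous analyses".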

Indeed, there are use cases and industry scenarios where AI brings tangible and quantifiable benefits, for example workforce support (skills rotation), process automation (working capital), customer management (customer loyalty) and product innovation (servicing of products). Over the next few years, it will be fundamental to clarify and regulate the mission and areas of use of AI, especially from an ethical perspective.

Trust in AI will be earned over time, as it is in human relationships. It will need to be demonstrated, through actual accomplishments, that this is not a “humans vs. machines” struggle, moving beyond fears and clarifying doubts about adoption. AI can complement the work of humans, enhancing judgment, analysis and skills, and allowing resources to be focused so as to significantly expand ingenuity, creative effort and experience, and to improve speed, scope and efficiency.

The link with AI becomes clear if we start from six technological areas widely reported in the literature:

- Automation: in this context, the term indicates any solution that relieves humans of repetitive actions, even complex ones, that are easy for a computer or mechanical system to carry out. We often speak of “industrial automation” to refer to technologies that automate part or all of a production process. The term also applies in non-industrial contexts, such as service delivery processes, logistics and asset tracking. The introduction of AI opens new frontiers in automation, making it possible to address processes that include a complex decision-making component.

- Computerisation: the introduction of software applications and necessary infrastructure, computers, servers, and networks within a company to automate or make processes more efficient. Computerisation is a basic requirement for exploiting AI potential, because it leads to producing and collecting structured and unstructured data from which AI can extract useful information to improve processes or define new business models.

- Dematerialisation: the replacement of paper with digital documents, not only as a consequence of computerisation, but as a driver to revisit business processes and make them more efficient, effective, and secure. Tools for tracking digital documents and protecting access rights to them, also in relation to the European General Data Protection Regulation (GDPR), are now essential.

- Virtualisation: in general, the set of technologies that makes the most of the processing capabilities of a hardware system. More technically, it is the ability to abstract hardware resources (CPU, RAM, and storage) and make them available to software in virtual form. With virtualisation technologies, a single physical server can run multiple virtual servers that work simultaneously, using the hardware more efficiently.

- Cloud Computing & Big Data: by Cloud Computing we mean the ability to use hardware and software resources with a “pay-per-use” logic. The potential of cloud computing goes beyond mere economic efficiency, because it can cope with sudden increases in processing needs, offers mechanisms to increase the availability of services (Disaster Recovery and Business Continuity) and provides guaranteed levels of support for the application context in question. Big Data is the set of technologies and methodologies able to process huge amounts of structured and unstructured data and to extract useful information for business decision-making processes.

- Mobile: one of the most disruptive changes of recent years is the spread of mobile devices in daily life and business. The evolution of wireless telecommunications networks and the upcoming introduction of 5G in mobile telephony represent some of the most important factors in the digital transformation processes of companies. The impact on the business world extends to all areas, e.g., smart working and the Internet of Things. AI is already a reality here, e.g., in the virtual assistants now found on all smartphones and in the facial recognition techniques that protect access to devices.

3.4 Dealing with AI: high level of expertise

Introducing AI in a company does not necessarily mean using technology accessible only to a few experts, or burdening the existing infrastructure (which typically manages the day-to-day business) with onerous changes, but rather relying on existing data and infrastructure to maximise their value. One of the most common AI initiatives is the simple virtual assistant or chatbot, which can be used in the company without IT department support. Complete predictive maintenance systems, by contrast, collect real-time data on the operation of machines and can predict, for example, production breaks or irregularities, thus enabling timely action across the entire chain of materials, spare parts, movements, etc. It is therefore necessary to understand which features to introduce and how to manage this new way of governing business processes over time.

AI is an interdisciplinary phenomenon in which, alongside technical experts in specific disciplines such as data science, cross-disciplinary figures such as psychologists, anthropologists, sociologists, linguists, and humanists play fundamental roles. They should be able to improve the interaction between AI systems and their users, which will become increasingly complex as these systems handle language and read emotions from faces, gestures, and the body.

The world of work is already affected and will be more so in the future by a profound transformation and, in the short term, we will see the emergence of new professions, while the existing ones will be extensively modified by the introduction of new processes and methodologies. Above all, manufacturing companies are called upon to develop technical skills to allow work on appropriate manufacturing processes and products by implementing pilot initiatives, in a short time and with limited resources. In addition, each worker needs to develop attitudes related to their ability to work in a context where people and machines are connected, and continuous learning throughout their working life is a priority.

This is accompanied by the ability to experiment, search, and learn independently in order to carry out one’s own activities and experiments, both within a team and alone, since one of the particularities of AI is precisely that it democratises access to technology for all individuals. Among the new professions that will be created by the increasing adoption of AI within production processes, we can identify three: Trainers, Explainers and Sustainers [1].

- Trainers: correct and steer AI-based services when they interact with humans in complex and frustrating situations, to add understanding and empathy to the conversation.

- Explainers: fill the gap between technologists and business leaders in the understanding of highly complex systems considered as “black boxes” because they hide the logic with which they suggest actions. The ability to analyse the rationale that led to a potentially harmful suggestion will be required.

- Sustainers: ensure that the systems behave according to the specifications on which they were designed and trained, taking immediate corrective action in case of abnormal activity.

3.5 Defining standards for AI use

To enable a single digital market, standards for AI uses that are ethical, democratic, and inclusive are required. These standards should include rules for a safe and reliable AI where obligations, requirements and parameters are defined and universally shared. The goal is to arrive at uses that are as transparent as possible and validated in advance for market access according to requirements. Guidelines with mandatory steps must assess AI systems against the following parameters:

- Risk assessment and mitigation systems.

- High quality data sets to train the AI system.

- Recording of AI systems activities to ensure traceability of results.

- Detailed documentation, providing all necessary information about the system and its purpose, so that authorities can assess its compliance and provide information to users that is clear and to the point.

- Adequate human oversight to minimise risk.
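As an illustration of what recording the activities of an AI system for traceability might look like in practice, the sketch below wraps a model so that every decision leaves a log entry. It is only one possible reading of the requirement: the record fields, the `traced` helper, the version string and the stand-in `credit_model` are hypothetical and are not prescribed by any standard mentioned in the text.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List


def traced(
    model: Callable[[Dict[str, Any]], Any],
    model_version: str,
    log: List[str],
) -> Callable[[Dict[str, Any]], Any]:
    """Wrap a model so that each call appends one JSON record to the log."""

    def wrapper(features: Dict[str, Any]) -> Any:
        output = model(features)
        # Hash the input instead of storing it, so the log can show which
        # input produced a result without duplicating personal data.
        payload = json.dumps(features, sort_keys=True)
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "input_sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
            "output": output,  # assumed to be JSON-serialisable
        }
        log.append(json.dumps(record))
        return output

    return wrapper


def credit_model(features: Dict[str, Any]) -> bool:
    """Hypothetical stand-in for a real decision model."""
    return features["income"] >= 30000


audit_log: List[str] = []
scored = traced(credit_model, "demo-0.1", audit_log)
decision = scored({"income": 42000})
```

Each entry ties a result to a point in time, a model version and a fingerprint of the input, which is the minimum an auditor would need to reconstruct how a given outcome was produced. In a real deployment the list would be replaced by durable, access-controlled storage.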

3.6 The right to know it is AI

The development of AI and automated decision-making does present challenges to consumer trust and well-being: when interacting with these systems, consumers should be properly informed so that they can decide about their use. Relying on AI carries risks, especially when it has the power to make decisions without human supervision. ML is based on training that relies on specific data sets. However, the data sets can reflect social biases, and in that case, AI incorporates those same biases into its own decision-making process. AI is increasingly being used in the design of decision-making algorithms. Decisions made by algorithms can have a significant impact on people’s lives: from granting credit, getting a job or medical care to influencing the outcome of court judgments.

In some cases, automated decision-making processes risk perpetuating social gaps. Some algorithms, for example, have been shown to discriminate against women: AI systems used in the human resources departments of some companies have given priority to male over female employees, for example for promotion, because of historical biases in the data they use to decide.

Therefore, authorities of many countries have acted, providing legislative guidelines aimed at ensuring that:

- Consumers are protected from unfair and/or discriminatory business practices, or risks arising from commercial AI services.

- AI-based decision-making processes are transparent.

- Only non-discriminatory, high-quality data are used in automated decision-making systems.
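One simple way to look for the kind of historical bias described in this section is to compare how often each group receives a favourable decision. The sketch below does this on invented data; the group labels, the decisions and the function names `selection_rates` and `rate_ratio` are assumptions made for illustration, and the text does not state what ratio should count as acceptable.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def selection_rates(decisions: List[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of favourable decisions per group."""
    totals: Dict[str, int] = defaultdict(int)
    selected: Dict[str, int] = defaultdict(int)
    for group, chosen in decisions:
        totals[group] += 1
        selected[group] += int(chosen)
    return {group: selected[group] / totals[group] for group in totals}


def rate_ratio(rates: Dict[str, float]) -> float:
    """Lowest selection rate divided by the highest (1.0 means equal rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group was favoured
    return min(rates.values()) / highest


# Hypothetical promotion decisions: (group, promoted?)
decisions = [
    ("men", True), ("men", True), ("men", True), ("men", False),
    ("women", True), ("women", False), ("women", False), ("women", False),
]
rates = selection_rates(decisions)
ratio = rate_ratio(rates)
```

With these invented figures, men are promoted three times as often as women, so the ratio is about one third. A check of this kind can be run both on the historical data used for training and on the decisions the system produces, although a balanced ratio alone does not prove that a system is fair.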

3.7 Is AI a new speculative bubble?

When a phenomenon like AI grows so much, there is always fear of a “new winter” with the accompanying bursting of the bubble, i.e., a period in which funds and interest in the sector vanish.

Since the 1950s, research in the sector has followed a regular pattern: moments of enthusiasm followed by periods of mistrust. The term “AI winter” appeared for the first time in 1984 and was used to explain the 1970s decline in funding. A few years later, in fact, the industry began to collapse, making the 1980s a long “winter”.

Today, however, conditions have changed completely and a decline in the sector seems highly unlikely. There are those who maintain that all the promises made so far are in fact illusions, alongside those who reel off a series of data proving the contrary. If we gather the opinions of top experts in the field, though, this winter seems far from coming. This does not mean that researchers around the world ignore the current debate, but rather that they look at it in the light of data. The World Economic Forum (WEF) lists AI specialist and ML specialist among the leading jobs of the next five years. The WEF further estimates that public funding from now until 2025 in the United States will be over six billion USD, while in China it already exceeds ten billion USD in annual investment. All of this bodes well for the future and should remove fears of another AI winter, where the only real risk is underestimating