Personality Alignment: The Style Engine of A-User

Personality Alignment is where an A-User interacting with a User embedded in a metaverse environment stops being a generic bot and starts acting like a character with intent, tone, and flair. It’s not just a matter of what it utters – it’s about how those words land, how the avatar moves, and how the whole interaction feels.

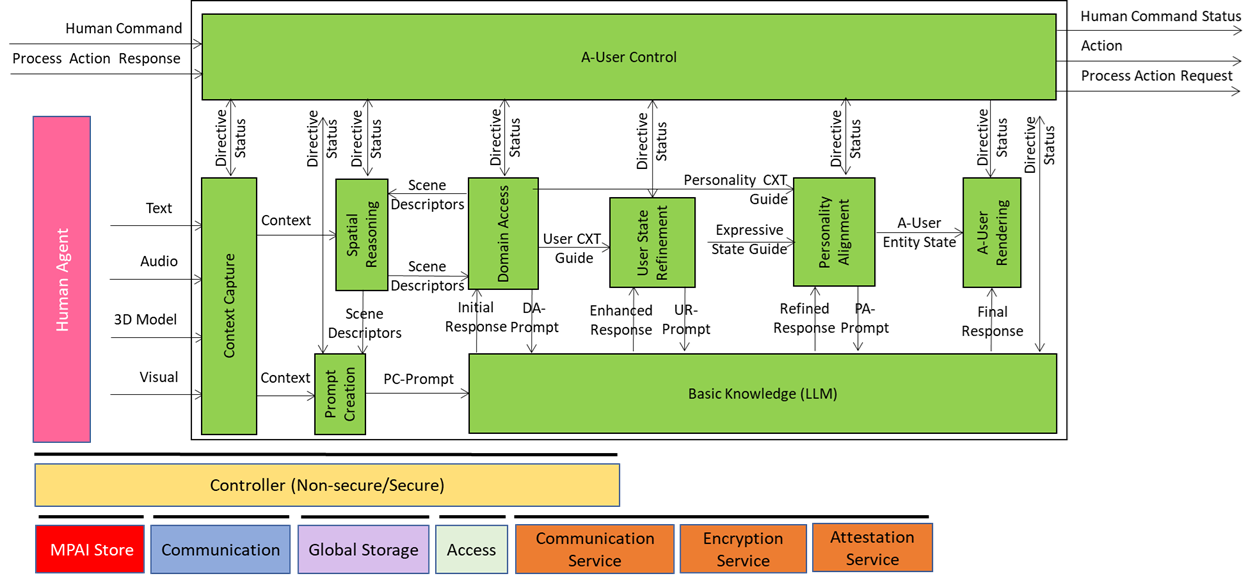

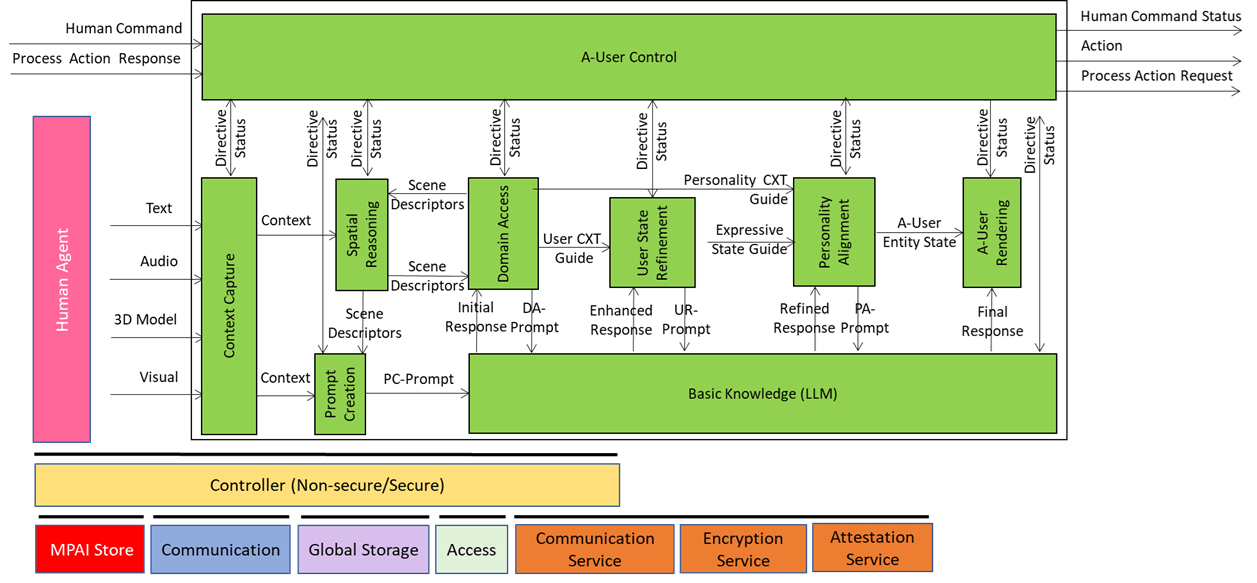

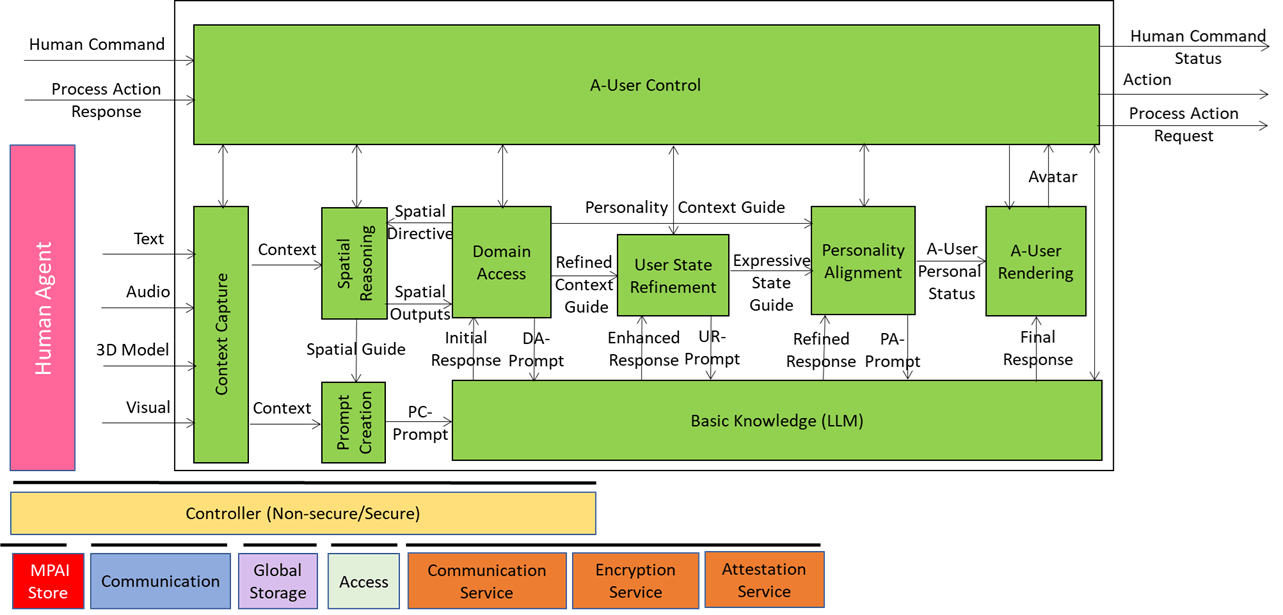

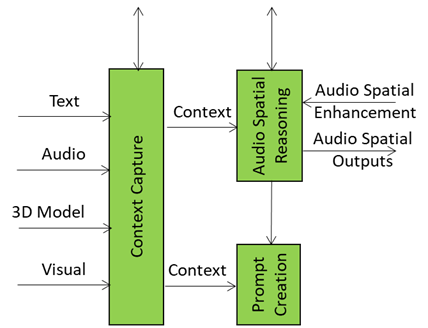

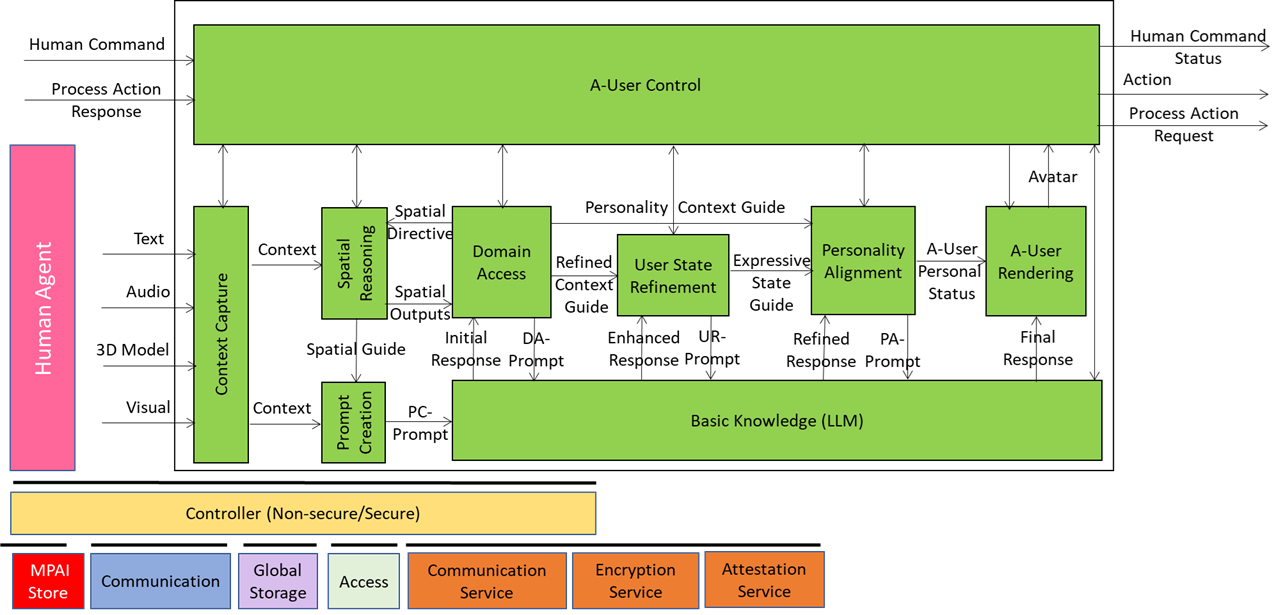

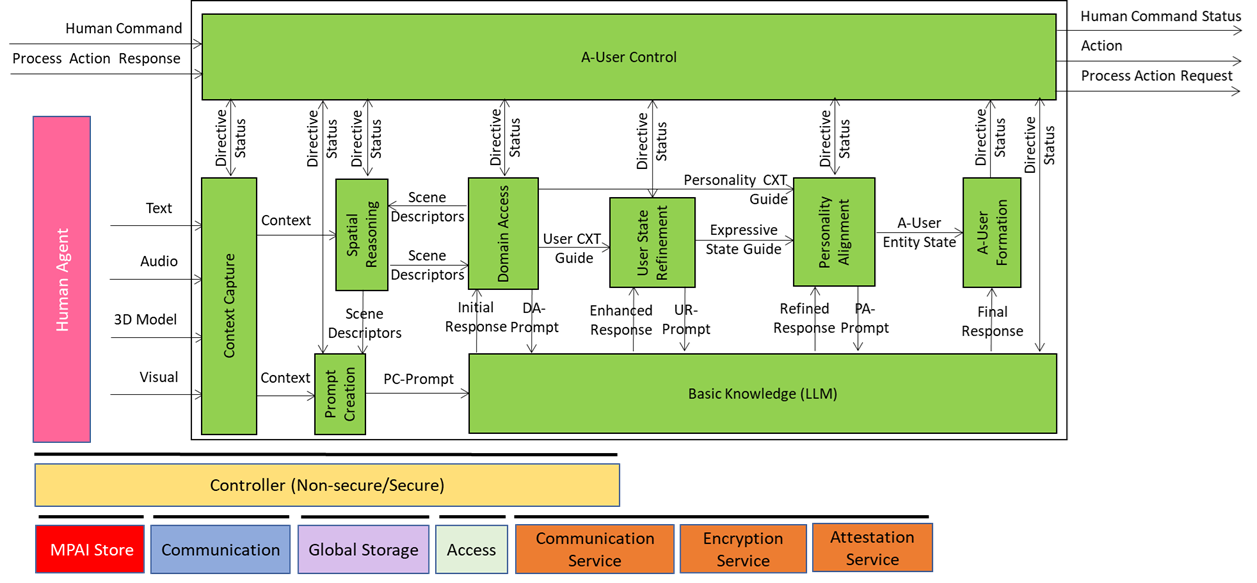

We have already presented the system diagram of the Autonomous User (A-User), an autonomous agent able to move and interact (walk, converse, do things, etc.) with another User in a metaverse. That other User may itself be an A-User, or it may be under the direct control of a human, in which case it is called a Human-User (H-User). The A-User acts as a “conversation partner in a metaverse interaction” with the User.

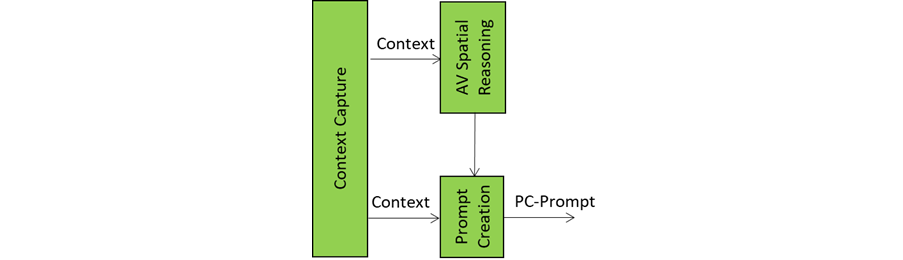

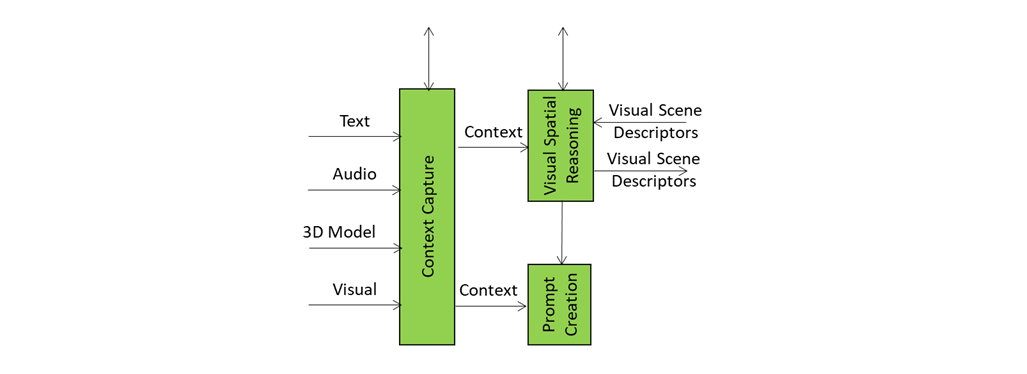

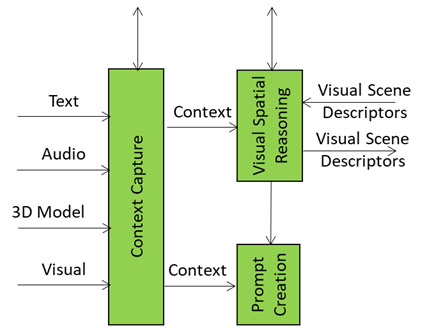

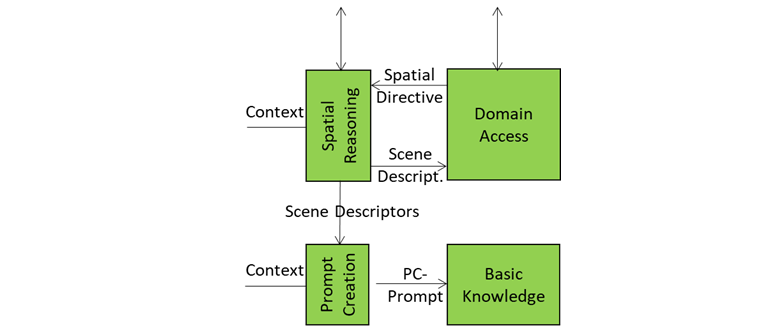

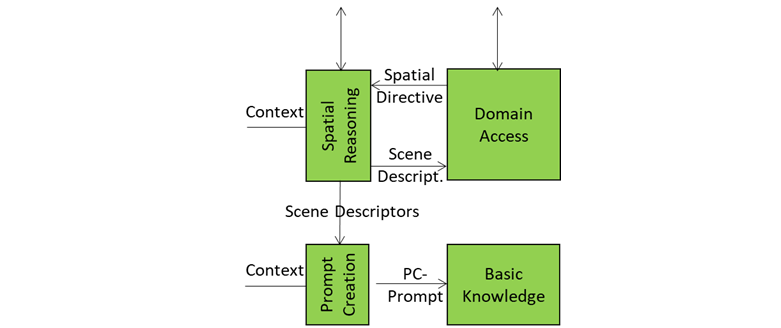

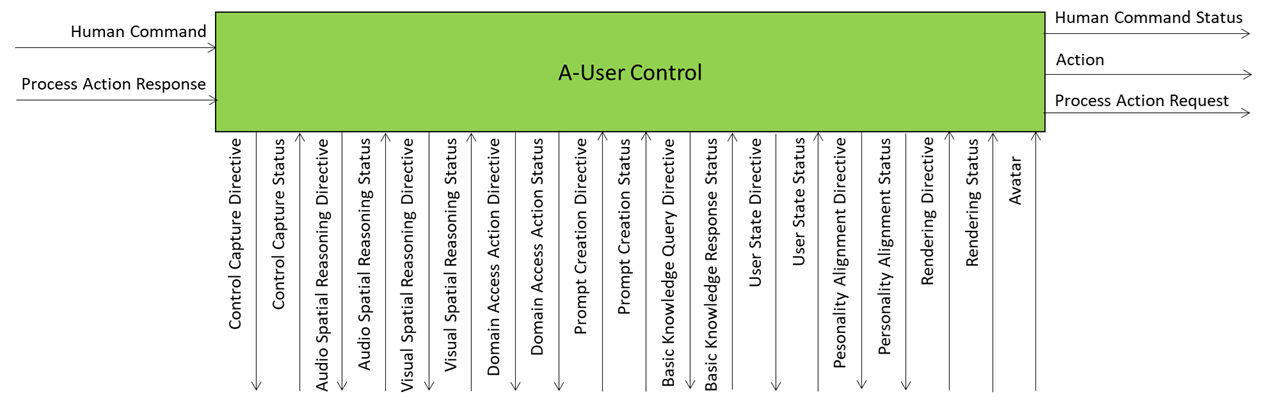

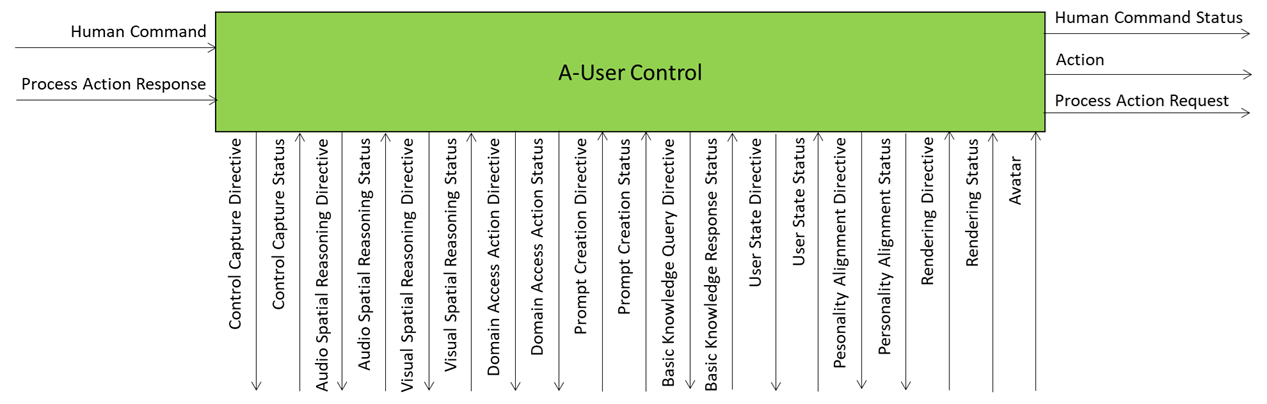

This is the ninth in a sequence of posts that illustrate the architecture of an A-User in more depth and provide an easy entry point for those who wish to respond to the MPAI Call for Technology on Autonomous User Architecture. The first eight dealt with 1) the Control performed by the A-User Control AI Module on the other components of the A-User; 2) how the A-User captures the external metaverse environment using the Context Capture AI Module; 3) how it listens to, localises, and interprets sound not just as data, but as data having a spatially anchored meaning; 4) how it makes sense of what the Autonomous User sees by understanding objects’ geometry, relationships, and salience; 5) how it takes raw sensory input and the User State and turns them into a well‑formed prompt that Basic Knowledge can actually understand and respond to; 6) how it taps into domain-specific intelligence for a deeper understanding of user utterances and operational context; 7) the core language model of the Autonomous User – the “knows-a-bit-of-everything” brain, the first responder to a prompt in a sequence of four; and 8) how a “blurry photo” of the User in the environment, taken at the onset of the process, is converted into a focused picture.

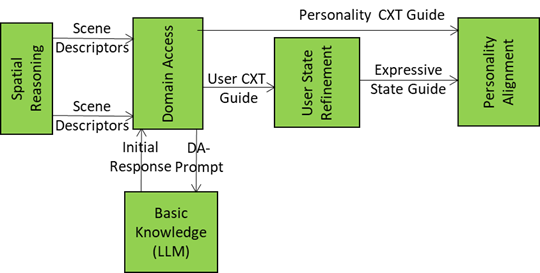

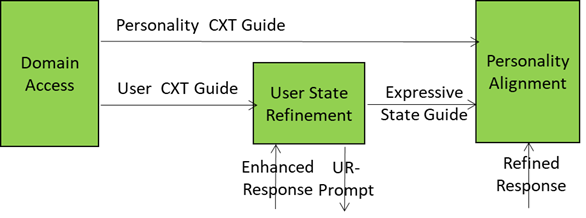

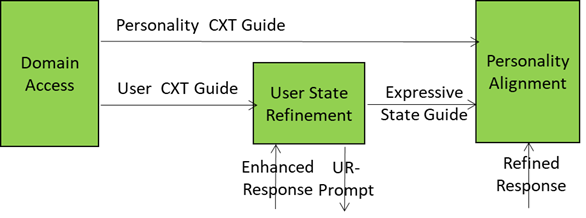

The figure is an extract from the A-User Architecture Reference Model. It shows Domain Access generating two streams of data related to the User and its environment, and the two AI Modules that receive them: User State Refinement and Personality Alignment.

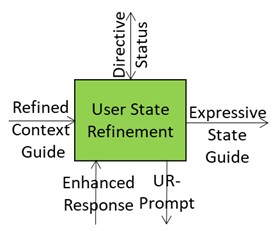

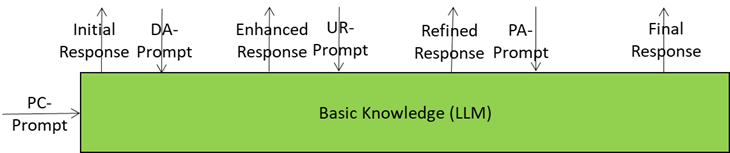

Personality Alignment can do this because it receives the inputs it needs to align the A-User’s personality with the User’s refined Entity State:

- Personality Context Guide: Domain-specific hints from Domain Access (e.g., “medical setting → professional tone”).

- Expressive State Guide: Emotional and attentional posture of the User (e.g., stressed → calming personality).

- Refined Response: Text from Basic Knowledge in response to the User State Refinement prompt.

- Personality Alignment Directive: Commands to tweak or override the personality profile (e.g., “switch to negotiator mode”) from the A-User Control AI Module (AIM).

A smart integration of these inputs enables the A-User to deliver the following outputs:

- A-User Entity State: The complete internal state of the A-User’s synthetic personality (tone, gestures, behavioural traits).

- PA-Prompt: New prompt formulation including the final A-User personality (so the words sound right).

- Personality Alignment Status: A structured report of personality and expressive alignment to the A-User Control AIM.
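
To make the data flow concrete, here is a minimal Python sketch of the four inputs and three outputs listed above. It is not the MPAI data format: the class names, field names, lookup tables, and the `align` function are all hypothetical, and the precedence rule (directive first, then the User's expressive state, then the domain context) is an assumption made for illustration. Only the directive's ability to override is taken from the text.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PAInputs:
    """Hypothetical container for the four inputs listed above."""
    personality_context_guide: str             # e.g. "medical setting"
    expressive_state_guide: str                # e.g. "stressed"
    refined_response: str                      # text from Basic Knowledge
    alignment_directive: Optional[str] = None  # e.g. "negotiator", from A-User Control


@dataclass
class PAOutputs:
    """Hypothetical container for the three outputs listed above."""
    entity_state: dict      # tone, gestures, behavioural traits
    pa_prompt: str          # prompt carrying the chosen personality
    alignment_status: dict  # report sent back to A-User Control


# Illustrative lookup tables; the entries are invented for this sketch.
TONE_BY_CONTEXT = {"medical setting": "professional"}
TONE_BY_USER_STATE = {"stressed": "calming"}


def align(inputs: PAInputs) -> PAOutputs:
    # Assumed precedence: directive > User's expressive state > domain context.
    if inputs.alignment_directive:
        tone, source = inputs.alignment_directive, "directive"
    elif inputs.expressive_state_guide in TONE_BY_USER_STATE:
        tone = TONE_BY_USER_STATE[inputs.expressive_state_guide]
        source = "expressive_state"
    else:
        tone = TONE_BY_CONTEXT.get(inputs.personality_context_guide, "neutral")
        source = "context"

    entity_state = {"tone": tone}
    pa_prompt = f"Respond in a {tone} tone: {inputs.refined_response}"
    status = {"tone": tone, "decided_by": source}
    return PAOutputs(entity_state, pa_prompt, status)
```

Calling `align(PAInputs("medical setting", "stressed", "Take the tablet twice a day."))` in this sketch selects the "calming" tone, because the User's expressive state outranks the domain hint under the assumed precedence. A real module would have to resolve such conflicts with far richer logic.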

Here are some examples of personality profiles that Personality Alignment could use or blend:

- Mentor Mode: Calm tone, structured answers, moderate gestures, empathy cues.

- Entertainer Mode: Upbeat tone, humour, wide gestures, animated expressions.

- Negotiator Mode: Firm tone, controlled gestures, strategic phrasing.

- Assistant Mode: Neutral tone, minimal gestures, clarity-first responses.
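
One way to picture "blending" the profiles above is as a weighted mix of their traits. The sketch below is purely illustrative: the `Profile` class, the numeric `gesture_amplitude` scale, the values assigned to each mode, and the `blend` rule (weighted average for numeric traits, dominant profile for the tone) are all assumptions, not part of the A-User specification.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Profile:
    name: str
    tone: str
    gesture_amplitude: float  # invented scale: 0.0 = minimal, 1.0 = wide


# Numeric values are guesses that mirror the qualitative descriptions above.
PROFILES = {
    "mentor": Profile("mentor", "calm", 0.5),
    "entertainer": Profile("entertainer", "upbeat", 1.0),
    "negotiator": Profile("negotiator", "firm", 0.3),
    "assistant": Profile("assistant", "neutral", 0.1),
}


def blend(weights: Dict[str, float]) -> Profile:
    """Blend named profiles according to non-negative weights."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    # Numeric trait: weighted average across the selected profiles.
    amplitude = sum(
        PROFILES[name].gesture_amplitude * w for name, w in weights.items()
    ) / total
    # Categorical trait: taken from the highest-weighted profile.
    dominant = max(weights, key=weights.get)
    blended_name = "+".join(sorted(weights))
    return Profile(blended_name, PROFILES[dominant].tone, round(amplitude, 3))
```

For example, `blend({"mentor": 0.75, "entertainer": 0.25})` keeps the Mentor's calm tone while making the gestures somewhat wider than a pure Mentor would use.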

Key Points to Take Away about Personality Alignment

- Purpose: Makes the A-User’s delivery context-aware and emotionally tuned.

- Inputs: Domain context, user emotional state, refined semantic response, and directives.

- Outputs: Personality blueprint (A-User Entity State), PA-Prompt for expressive rendering, and Personality Alignment Status.

- Profiles: For example, Mentor, Entertainer, Negotiator, Assistant – each with tone, gesture style, and behavioural traits.

- Goal: Coherent, adaptive interaction that feels natural and persuasive in the metaverse.