1 Version

V2.1

2 Functions

This Use Case addresses the case of a human holding a conversation with a Machine:

- The human converses with the Machine, using Speech, Face, and Gesture to indicate the object in the Environment that s/he wishes to talk or ask questions about.

- The Machine:

  - Sees and hears an Environment containing a speaking human and some scattered objects.

  - Recognises the human’s Speech and obtains the human’s Personal Status by capturing Speech, Face, and Gesture.

  - Understands which object the human is referring to and generates an avatar that:

    - Utters Speech conveying a synthetic Personal Status that is relevant to the human’s Personal Status as shown by his/her Speech, Face, and Gesture, and

    - Displays a face conveying a Personal Status that is relevant to the human’s Personal Status and to the response the Machine intends to make.

  - Renders the Scene that it perceives from a human-selected Point of View. The objects in the scene are labelled with the Machine’s understanding of their semantics so that the human can understand how the Machine sees the Environment.
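The Machine's steps listed above can be read as a simple data flow. The following is a minimal Python sketch of that flow and is not part of the MPAI specification: the class names `Perception` and `MachineResponse`, the function `converse`, the `toy_policy` stand-in, and the use of plain strings for Personal Status and object labels are all hypothetical simplifications chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Perception:
    """What the Machine extracts from the Environment (hypothetical structure)."""
    recognised_text: str              # text recognised from the human's Speech
    human_status: str                 # Personal Status from Speech, Face, and Gesture
    object_labels: List[str]          # the Machine's labels for objects in the Scene
    referenced_object: Optional[str]  # object the human refers to, if identified


@dataclass
class MachineResponse:
    """What the Machine produces for the human (hypothetical structure)."""
    avatar_speech: str         # text uttered by the avatar
    avatar_status: str         # synthetic Personal Status conveyed by speech and face
    point_of_view: str         # human-selected Point of View used for rendering
    labelled_scene: List[str]  # object labels shown in the rendered Scene


# A dialogue policy maps (text, human status, referenced object) to
# (reply text, synthetic Personal Status). Its internals are out of scope here.
DialoguePolicy = Callable[[str, str, Optional[str]], Tuple[str, str]]


def converse(perception: Perception, point_of_view: str,
             policy: DialoguePolicy) -> MachineResponse:
    """Chain the steps: understand the request, respond, and render the Scene."""
    reply, status = policy(perception.recognised_text,
                           perception.human_status,
                           perception.referenced_object)
    return MachineResponse(
        avatar_speech=reply,
        avatar_status=status,
        point_of_view=point_of_view,
        labelled_scene=list(perception.object_labels),
    )


def toy_policy(text: str, status: str, obj: Optional[str]) -> Tuple[str, str]:
    # Toy stand-in (assumption): name the referenced object and mirror the
    # human's status. A real Machine would choose a status that is merely
    # "relevant to" the human's, as the text above puts it.
    target = obj if obj is not None else "that"
    return f"You asked about {target}.", status


response = converse(
    Perception("What is this?", "curious", ["cup", "book"], "cup"),
    point_of_view="front",
    policy=toy_policy,
)
print(response.avatar_speech)
```

Passing the policy in as a callable keeps the sketch neutral about how the response and its Personal Status are actually generated.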

3 Reference Architecture

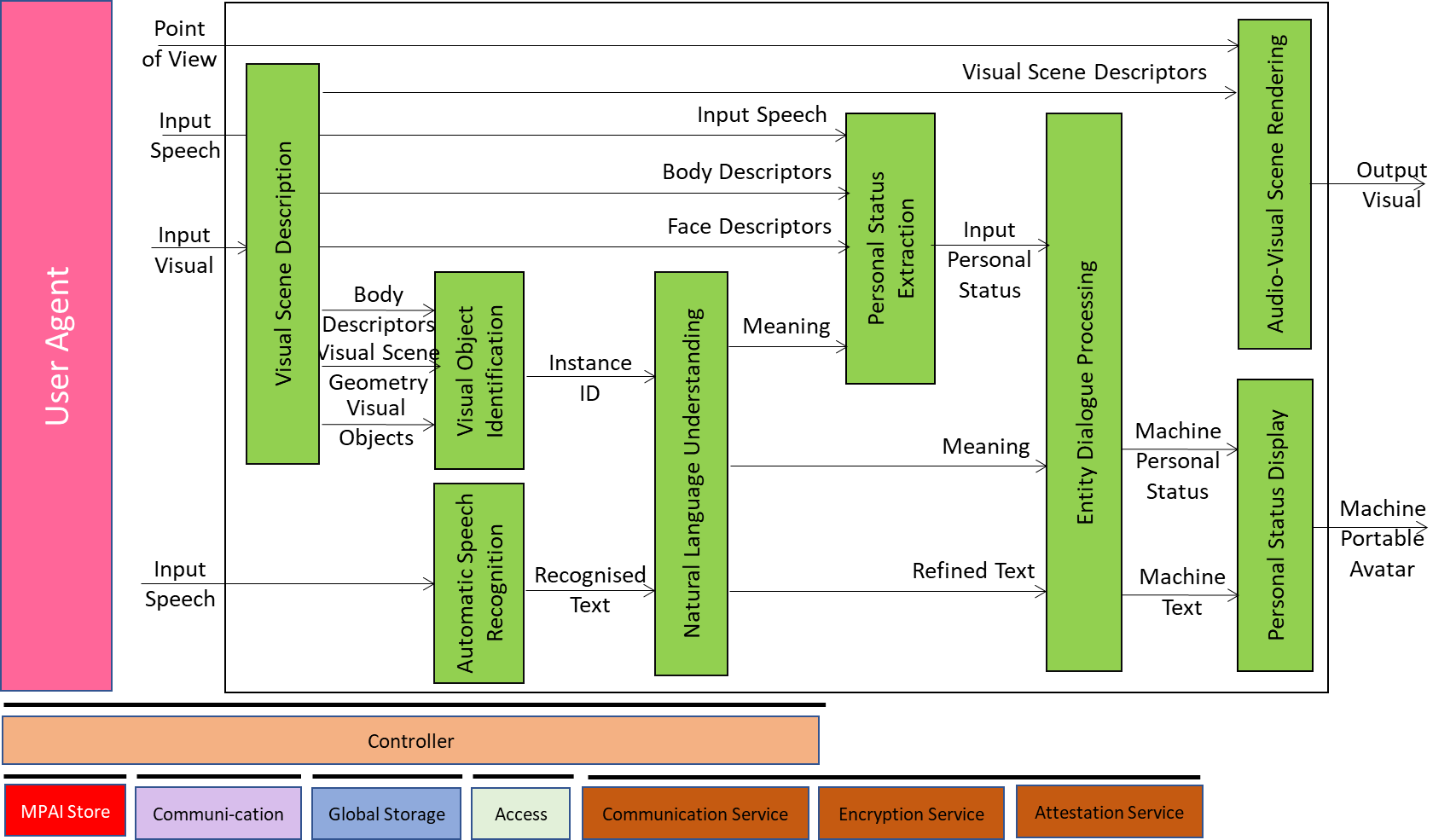

Figure 1 depicts the MMC-CAS Reference Architecture.

Figure 1 – The Conversation About a Scene (MMC-CAS) AIW

4 I/O Data

Table 1 gives the input/output data of Conversation About a Scene.

Table 1 – I/O data of Conversation About a Scene

| Input data | From | Description |
| --- | --- | --- |
| Input Visual | Camera | Visual input from a camera pointing at the human and the scene. |
| Input Speech | Microphone | Speech of the human. |
| Point of View | Human | The point of view of the scene displayed by Scene Presentation. |

| Output data | To | Description |
| --- | --- | --- |
| Output Visual | Human | Rendering of the Scene containing labelled objects as perceived by the Machine and seen from the Point of View. |
| Machine Portable Avatar | Human | Portable Avatar produced by the Machine. |
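Table 1 can also be written down as data, which makes it easy to check that a caller supplies every input the AIW expects. The sketch below is illustrative only: the dictionary names `INPUTS` and `OUTPUTS` and the helper `missing_inputs` are hypothetical, and the specification does not prescribe any particular in-memory representation.

```python
from typing import Dict, List

# Data names and their sources/destinations, copied from Table 1.
INPUTS: Dict[str, str] = {
    "Input Visual": "Camera",
    "Input Speech": "Microphone",
    "Point of View": "Human",
}
OUTPUTS: Dict[str, str] = {
    "Output Visual": "Human",
    "Machine Portable Avatar": "Human",
}


def missing_inputs(provided: Dict[str, object]) -> List[str]:
    """Return the Table 1 input names absent from `provided`, in table order."""
    return [name for name in INPUTS if name not in provided]


# Example: only speech has been supplied so far.
print(missing_inputs({"Input Speech": b""}))
```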

5 SubAIMs

6 JSON Metadata

https://schemas.mpai.community/MMC/V2.1/AIWs/ConversationAboutAScene.json
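As a small illustration, the metadata URL can be split into its path components, for example to confirm that it matches the version given in the Version section. This sketch assumes the path layout is standard/version/kind/file, which is inferred from this single URL and not confirmed by the specification; the function name `parse_schema_url` and the returned keys are hypothetical.

```python
from urllib.parse import urlparse

SCHEMA_URL = (
    "https://schemas.mpai.community/MMC/V2.1/AIWs/ConversationAboutAScene.json"
)


def parse_schema_url(url: str) -> dict:
    """Split a schema URL into components (assumed layout, see lead-in)."""
    parts = urlparse(url).path.strip("/").split("/")
    if len(parts) != 4 or not parts[3].endswith(".json"):
        raise ValueError(f"unexpected schema URL layout: {url}")
    standard, version, kind, filename = parts
    return {
        "standard": standard,
        "version": version,
        "kind": kind,
        "name": filename[: -len(".json")],
    }


info = parse_schema_url(SCHEMA_URL)
print(info["version"])
```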