
This Chapter introduces the assumptions that are considered important to facilitate the common understanding and execution of the Metaverse standardisation process.


1 Process

The process of Metaverse standardisation includes the following steps:

- Identification of Use Cases, either existing or possible (Chapter 4).

- Identification of External Services that a Metaverse can use (Chapter 5).

- Identification of Functionalities from Use Cases and External Services (Chapter 6). The identification of a Functionality does not mean that a Metaverse Instance shall support that Functionality, only that the Functionality is a candidate for inclusion in one of the Metaverse Profiles.

- Analysis of the state and characteristics of the Technologies required to support the Functionalities (Chapter 7).

- Analysis of the issues regarding the management of the Metaverse Ecosystem and legal issues (Chapter 8).

- Analysis of the issues and the process enabling the eventual definition of Profiles (Chapter 9).

2 Metaverse Specifications

Eventually, the Metaverse Industry will have access to a collection of Interoperability specifications called Common Metaverse Specifications (CMS). The CMS is expected to be developed from an agreed Functionality master plan using contributions made by different Standards Developing Organisations (SDOs). The CMS should adopt the “one Functionality-one Tool” principle followed by successful standards. It may nevertheless be unavoidable, although not welcome for the eventual success of Metaverse Interoperability, that more than one Tool be specified for the same Functionality.

This document is a first attempt at generating such a Functionality master plan. It contains a first set of Functionalities but makes no claim that all have been identified. Further, it makes no attempt to identify the standard technologies required to build Interoperable Metaverse Instances.

3 Profiling

MPAI assumes that the Metaverse notion will be variously implemented as independent Metaverse Instances by selecting specific Metaverse Interoperability levels called Profiles. The identification of entities developing Profiles based on the Common Metaverse Specifications (CMS) is an open issue.

A Metaverse Instance may implement:

- The full set of CMS tools. In this case, the Metaverse Instance will be able to interoperate with any other Metaverse Instance. Metaverse Instances that do not support the full CMS tool set will, however, not be able to access all Functionalities of the first Instance.

- A subset of the Common Metaverse Specifications. This will be possible in three different modalities:

  - Without adding technologies. In this case, the Metaverse Instance will be able to interoperate with other Metaverse Instances for the functionalities implemented according to the Common Metaverse Specifications.

  - Replacing functionalities supported by the Common Metaverse Specifications with proprietary technologies. In this case, the Metaverse Instance will interoperate with other Metaverse Instances for the Functionalities implemented according to the Common Metaverse Specifications but will not interoperate with other Metaverse Instances for the remaining Functionalities.

  - Adding new Functionalities supported by proprietary technologies. In this case, the Metaverse Instance will not interoperate with other Metaverse Instances for such proprietary functionalities.

- No functionality specified by the Common Metaverse Specifications. In this case, the Metaverse Instance will not be able to interoperate with any other Metaverse Instance.
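The cases above can be illustrated with a minimal sketch in which the level of Interoperability between two Metaverse Instances is taken to be the set of Functionalities that both implement according to the CMS. The class, the function, and the Functionality names are hypothetical and chosen only for illustration; they are not defined by the CMS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetaverseInstance:
    """Hypothetical model of a Metaverse Instance, for illustration only."""
    name: str
    # Functionalities implemented according to the CMS.
    cms_functionalities: frozenset = frozenset()
    # Functionalities implemented with proprietary technologies.
    proprietary_functionalities: frozenset = frozenset()


def interoperable_functionalities(a: MetaverseInstance, b: MetaverseInstance) -> frozenset:
    """Return the Functionalities that both Instances implement according to the CMS."""
    return a.cms_functionalities & b.cms_functionalities


# Placeholder Functionality names (assumptions, not taken from the CMS).
FULL_CMS = frozenset({"avatar", "payment", "scene", "speech"})

full = MetaverseInstance("Full", FULL_CMS)
subset = MetaverseInstance("Subset", frozenset({"avatar", "scene"}))
replaced = MetaverseInstance("Replaced", frozenset({"avatar"}), frozenset({"scene"}))
closed = MetaverseInstance("Closed", frozenset(), frozenset({"avatar", "scene"}))

print(sorted(interoperable_functionalities(full, subset)))      # ['avatar', 'scene']
print(sorted(interoperable_functionalities(subset, replaced)))  # ['avatar']
print(sorted(interoperable_functionalities(full, closed)))      # []
```

In this simplified view, a proprietary Functionality never contributes to Interoperability, even when two Instances happen to give it the same name.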

| Note1 | A recognised set of Profiles will help Users understand the level of Interoperability existing between any two Metaverse Instances. |

| Note2 | The authority delegated by the jurisdiction under which the Metaverse operates may: 1. Adopt a “laissez-faire” attitude. 2. Prescribe a minimum level of interoperability, hopefully based on a recognised Profile. |

| Note3 | Adoption of the “Common Metaverse Specifications” need not be subject to legal obligations. |

| Note4 | Developers and deployers of Metaverse Instances should have the freedom to select which Functionalities of their Metaverse Instances should conform with the Common Metaverse Specifications and which will be autonomously defined. Adoption of an Interoperability level should solely be based on business and other public considerations, such as public service features that laws and regulations of different jurisdictions may impose. |

4 Metaverse definition

Because MMM assumes that Metaverse Instances will be developed and deployed based on a Profile selected by the Metaverse Manager, MPAI adopts the following minimal definition of Metaverse:

A Metaverse Instance is a collection of digital environments that are implementations of Common Metaverse Specification Profiles; it is populated by Digital Objects that are representations of either real Objects – called Digitised – or computer-generated Objects – called Virtual – or both.

5 Interoperability

Interoperability is defined as the ability of a Metaverse Instance to exchange and make use of Data from another Metaverse Instance. Interoperability is often a fall-back solution when Metaverse Instances do not share the same Data Formats.

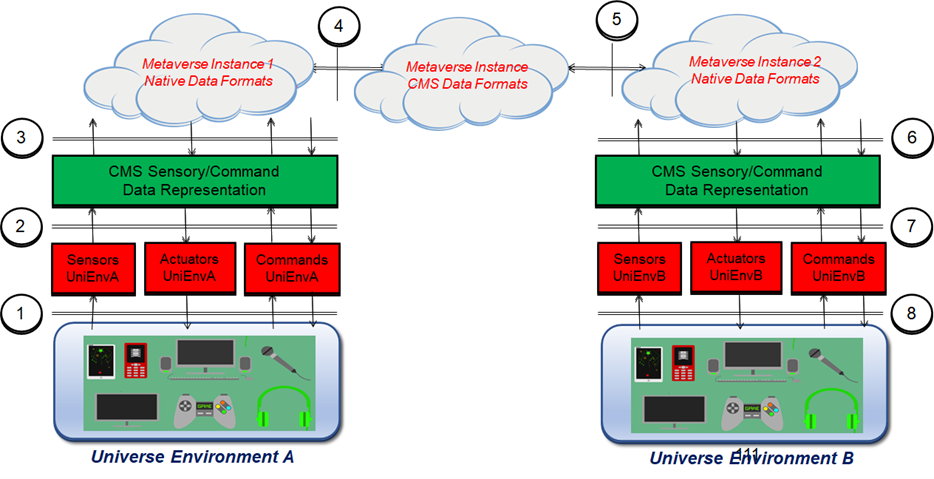

This document adapts the MPEG-V Media Context and Control standard [36] to the current Metaverse context. Although the development of MPEG-V started in the first half of the 2000s, the interoperability points it identified are still relevant. Figure 1 shows the 8 interoperability points affecting Sensors, Actuators, Commands, and Metaverses.

Figure 1 – Sensor-Actuators-Commands-Metaverse interoperability (MPEG-V)


Table 4 describes the interoperability points where Data in a Format moves from an element to another element having a different Data Format.

Table 4 – MPEG-V Interoperability points

| # | Information moves from | To |
|---|---|---|
| 1 | Universe Environment 1 | Proprietary Sensors and their Commands 1 |
| 2 | Proprietary Format Sensors and their Commands (A) | CMS Sensors and their Commands |
| 3 | CMS Sensors and their Commands | Proprietary Format Metaverse Environment (A) |
| 4 | Proprietary Format Metaverse Environment (A) | CMS Metaverse Environment |
| 5 | CMS Metaverse Environment | Proprietary Format Metaverse Environment (B) |
| 6 | Proprietary Format Metaverse Environment (B) | CMS Sensors and their Commands |
| 7 | CMS Sensors and their Commands | Proprietary Sensors and their Commands 2 |
| 8 | Proprietary Sensors and their Commands 2 | Universe Environment 2 |

The workflow of Figure 1 operates as follows:

1. Metaverse Environment 1 internally represents Data based on proprietary Formats 1 using Sensing/Actuation Data and Commands in the CMS Format obtained by converting Sensing/Actuation Data and Commands based on Format 1 from Universe Environment 1. Note that there can be a mismatch between:

   a. The Sensing Data and Commands received from Universe 1 and Metaverse Instance 1 because the Profile it implements may not be able to handle all the Sensing and Command Data types received from the Sensors of Universe Environment A.

   b. The Actuators of Universe Environment A and the Actuation Data and Commands generated by Metaverse Instance 1 because of their inability to handle the Data types received.

2. Metaverse Environment 2 of Metaverse Instance B internally represents Data based on proprietary Formats 2. However, by converting its Data from Data Format 2 to the CMS Data Format, Universe Environment A can send Sensing Data to and receive Actuation Data from Metaverse Environment 2 for use.

3. Metaverse Environment 1 can serve Universe Environment B within the constraints corresponding to sub-points a. and b. of point 1, using the process outlined in point 1 above.

Figure 1 also provides a first general identification of the Interoperability points that are potential targets of CMS standardisation.
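The conversion workflow described above can be sketched as a pair of converters that use the CMS Format as the common intermediate representation. This is only an illustration under stated assumptions: the field names of the two proprietary Formats, the shape of the CMS Format, and the Data types are all hypothetical. The sketch also shows the mismatch case, where the receiving side does not handle a Data type.

```python
# All field names, Data types, and functions below are hypothetical.

def format1_to_cms(sample: dict) -> dict:
    """Convert Sensing Data from proprietary Format 1 to the (assumed) CMS Format."""
    return {"type": sample["kind"], "value": sample["val"]}


def cms_to_format2(sample: dict) -> dict:
    """Convert CMS-Format Data to proprietary Format 2."""
    return {"t": sample["type"], "v": sample["value"]}


def deliver(samples: list, supported_types: set) -> tuple:
    """Pass Format 1 samples through the CMS Format to a Format 2 Environment.

    Returns the converted samples and the CMS samples that were dropped
    because the receiving Profile does not handle their Data type.
    """
    accepted, dropped = [], []
    for sample in samples:
        cms = format1_to_cms(sample)
        if cms["type"] in supported_types:
            accepted.append(cms_to_format2(cms))
        else:
            dropped.append(cms)
    return accepted, dropped


sensed = [
    {"kind": "position", "val": [1.0, 2.0, 0.0]},
    {"kind": "temperature", "val": 21.5},
]
accepted, dropped = deliver(sensed, supported_types={"position"})
print(accepted)  # [{'t': 'position', 'v': [1.0, 2.0, 0.0]}]
print(dropped)   # [{'type': 'temperature', 'value': 21.5}]
```

With a common intermediate Format, each proprietary Format needs only one converter to and one from the CMS Format, rather than one converter per pair of proprietary Formats.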

6 The Metaverse and AI

Artificial Intelligence (AI) includes a range of technologies that are likely to permeate every corner of the Metaverse. MPAI (Moving Picture, Audio, and Data Coding by Artificial Intelligence) is an international, unaffiliated, non-profit organisation that develops standards for AI-based data coding with clear Intellectual Property Rights licensing frameworks. Its strategy for developing AI-based Data Coding standards assumes that applications are designed as systems composed of AI Modules (AIM) organised in AI Workflows (AIW) executed in a standard AI Framework (AIF).

The capabilities and features of the AIF, AIWs, and AIMs are described by standard JSON metadata. The AIF components expose standard APIs, and the AIWs and AIMs expose interfaces whose input and output Data have a standard Format, while the AIM internals are not specified by MPAI. MPAI has already developed several standards in the AI-based Data Coding space [37,38,39,40,41]. MPAI claims that, in general, improved AIM functionalities can be obtained by improved processing technologies exposing standard input/output Data Format interfaces rather than by using proprietary Data Formats.

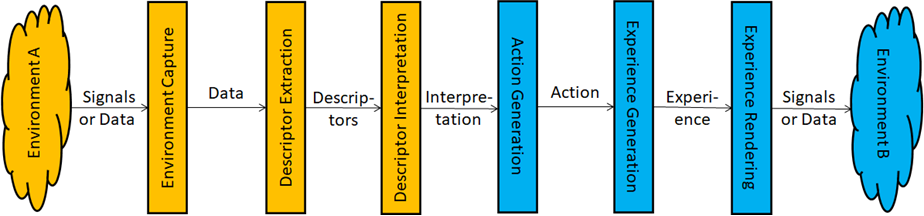

The CMS will include more Data Coding Formats than those identified in Figure 1. Hints at these are offered by another model developed by MPAI (Figure 2), in which the Interactions between Users of Environment A and Environment B rely on the analysis performed on the Data exchanged. The two Environments can both be in the Universe, be different Metaverse Environments, or be one in the Universe and the other in a Metaverse Instance. Note that, for simplicity, only the processing chain from Environment A to Environment B is shown. The reverse chain, where Signals or Data of Environment B are captured to influence Environment A, is not shown.

Figure 2 – Environment-to-Environment Interaction Model

Table 5 defines the functions of the processing elements identified in Figure 2.

Table 5 – Functions of components in the Environment-to-Environment Interaction Model

| Component | Function |
|---|---|
| Environment Capture | Captures Environment as collections of signals and/or Data. |
| Descriptor Extraction | Analyses Data to extract Descriptors. |
| Descriptor Interpretation | Analyses Descriptors to yield Interpretations. |
| Action Generation | Analyses Interpretations to generate Actions. |
| Experience Generation | Analyses Actions to generate Environment. |
| Environment Delivery | Delivers Environment as collections of signals and/or Data. |

Note that Figure 2 assumes that an Action causes the Experience Generation Module to generate an Experience. This is an important case but not necessarily the only one. For instance, in the case of a Connected Autonomous Vehicle (CAV), both Environments are the same (in the Universe) and the Action is likely to be a command to actuate the CAV’s motion [42].
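The processing chain of Table 5 can be sketched as a sequence of stages, each consuming the output of the previous one. The stage names follow Table 5, but the toy logic inside each stage (a mean signal level, a threshold of 0.5, a "lower_volume" Action) is an assumption made purely for illustration and is not part of the MPAI model.

```python
from functools import reduce


def environment_capture(environment: dict) -> dict:
    """Capture the Environment as a collection of signals."""
    return {"data": environment["signals"]}


def descriptor_extraction(captured: dict) -> dict:
    """Analyse Data to extract a Descriptor (here: a toy mean level)."""
    data = captured["data"]
    return {"mean_level": sum(data) / len(data)}


def descriptor_interpretation(descriptors: dict) -> dict:
    """Analyse Descriptors to yield an Interpretation (toy threshold)."""
    return {"loud": descriptors["mean_level"] > 0.5}


def action_generation(interpretation: dict) -> dict:
    """Analyse the Interpretation to generate an Action."""
    return {"action": "lower_volume" if interpretation["loud"] else "none"}


def experience_generation(action: dict) -> dict:
    """Analyse the Action to generate the Environment to be delivered."""
    return {"experience": "apply:" + action["action"]}


def environment_delivery(experience: dict) -> dict:
    """Deliver the Environment as a collection of signals and/or Data."""
    return {"signals_out": experience["experience"]}


CHAIN = [
    environment_capture,
    descriptor_extraction,
    descriptor_interpretation,
    action_generation,
    experience_generation,
    environment_delivery,
]


def run_chain(environment: dict, stages=CHAIN) -> dict:
    """Feed Environment A through every stage in order."""
    return reduce(lambda value, stage: stage(value), stages, environment)


print(run_chain({"signals": [0.9, 0.7, 0.8]}))  # {'signals_out': 'apply:lower_volume'}
```

Because each stage only depends on the Format of its input and output, any stage could be replaced by a better implementation without touching the others, which is the point made above about standard interfaces. In the CAV case, the last two stages would be replaced by a stage that actuates the vehicle directly.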

7 Organisation

To identify Metaverse functionalities, it is useful to assume that a Metaverse Instance will have:

- A Metaverse Manager owning, operating, and maintaining the Metaverse Instance.

- Metaverse Operators running Metaverse Environments under Metaverse Manager licence.

- Metaverse Partners acting in Metaverse Environments under licence of a Metaverse Operator.

- End Users.

The actual organisation of a Metaverse Instance is likely to take many different shapes enabled by different technologies. For instance, a Metaverse Instance could have just one Metaverse Manager and many Users or just one User or have several Metaverse Operators each with a set of Metaverse Partners. The assumption of this Section is sufficiently general and articulated to be used to represent a significant number of use cases from which functionalities can be identified.
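As a rough sketch, the assumed organisation can be modelled as a small hierarchy in which Operators are licensed by the Manager and Partners by an Operator. The class and attribute names are hypothetical; the two examples correspond to the simple and the more articulated shapes mentioned above.

```python
from dataclasses import dataclass, field


@dataclass
class MetaverseOperator:
    """An Operator running Environments under the Manager's licence."""
    name: str
    partners: list = field(default_factory=list)  # Partners licensed by this Operator


@dataclass
class Organisation:
    """Hypothetical organisation of one Metaverse Instance."""
    manager: str
    operators: list = field(default_factory=list)
    end_users: list = field(default_factory=list)

    def licence_chain(self, partner: str) -> list:
        """Return [Manager, Operator, Partner] for a given Partner."""
        for operator in self.operators:
            if partner in operator.partners:
                return [self.manager, operator.name, partner]
        raise KeyError(f"{partner!r} is not a Partner of any Operator")


# One Manager and many Users, no Operators.
minimal = Organisation(manager="M1", end_users=["u1", "u2", "u3"])

# Several Operators, each with its own set of Partners.
articulated = Organisation(
    manager="M2",
    operators=[
        MetaverseOperator("OpA", ["P1", "P2"]),
        MetaverseOperator("OpB", ["P3"]),
    ],
    end_users=["u4"],
)

print(len(minimal.operators))           # 0
print(articulated.licence_chain("P3"))  # ['M2', 'OpB', 'P3']
```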

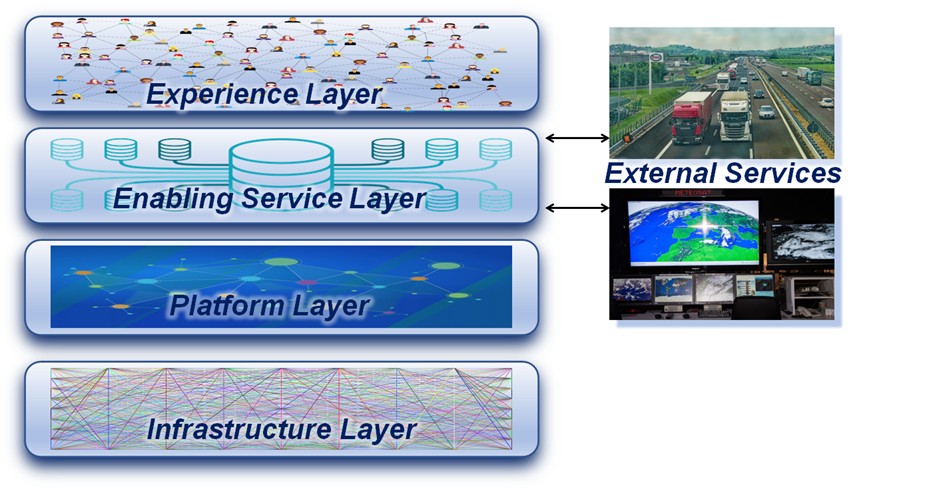

8 Layering

A Metaverse Instance will typically be implemented as a layered structure. Necessary layers are the infrastructure layer and a service layer. In most cases there is also an experience layer. This document assumes that a Metaverse Instance is composed of 4 layers:

- The Infrastructure Layer including the network, transport, storage, and processing (cloud, edge) services.

- The Platform Layer including Metaverse-specific services to create, discover, and access content.

- The Enabling Service Layer including a variety of subsidiary services such as payment, security, identity, and privacy.

- The Experience Layer enabling Experiences enjoyed through Devices.

A Metaverse Instance may also interact with a variety of application-specific services, e.g., traffic information services, weather service, etc. These should not be considered as part of the Enabling Service Layer but simply connected as External Services.

The assumed layered architecture is depicted in Figure 3.

Figure 3 – Metaverse Layer and External Services

In this context, it is worth mentioning that:

- The term Metaverse Instance will be used to include the full stack of 4 layers. If only one layer is intended, e.g., the Experience Layer, the expression “Metaverse Experience Layer” will be used.

- The layered architecture is introduced for the sole purpose of identifying requirements. The architecture selected for the design and implementation of a Metaverse Instance should be based on the Metaverse Manager’s decision.

- While it is conceivable that parts of the Infrastructure Layer, as defined above, will be a part of a future extended internet, it can be assumed that the other layers will be variously implemented and independently operated, and their technologies will not be part of a future internet. Therefore, we do not expect that there will be a “single Metaverse” made up only of fully interoperable Metaverse Instances all able to support the same features, simply because the functionalities indispensable in one Metaverse Instance may well not be needed in another. A governed Profile approach, however, can guarantee levels of Interoperability between independently developed and operated Metaverses, leaving it to entrepreneurs to decide which level of Interoperability is in their interest to offer.

- Instead of a probably unrealistic idea of fully interoperable Metaverses, this document assumes that there will only be Common Metaverse Specifications (CMS) and CMS Profiles.

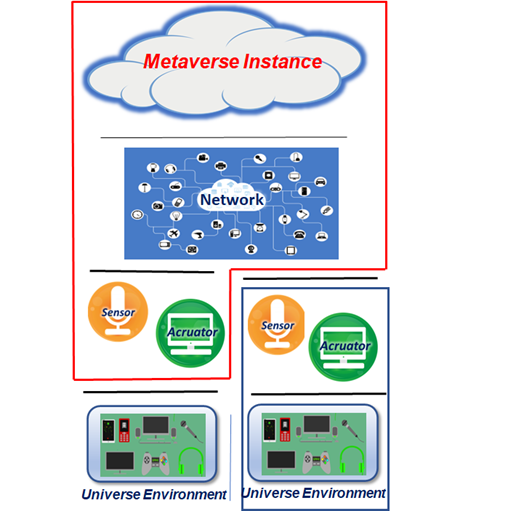

9 The extent of the Metaverse

A Universe Environment accesses a Metaverse Instance via Sensors and Actuators and so does a Metaverse Environment. Figure 4 contemplates two non-exclusive cases:

- The Universe Environment uses its own Sensors and Actuators to access or interact with the Metaverse Environment (blue line).

- The Metaverse Instance is connected to a Universe Environment via Sensors and Actuators that are integral with the Metaverse Instance (red line).

In both cases, Interoperability requires that the Sensors and Actuators have standard Interfaces.

Figure 4 – Connections between a Metaverse Instance and a Universe Environment

10 The Metaverse not just for humans

In its investigations, MPAI is encountering several use cases where the notion of Metaverse is applicable even though the Metaverse Environment does not necessarily include human Users. This is the case, e.g., of a Connected Autonomous Vehicle (CAV) producing or contributing Data to a Metaverse Instance that is intended to be an accurate Representation of the Universe Environment where the CAV happens to be [42]. In this case, the layered architecture of Section 3.8 may very well not include an Experience Layer.

11 The Metaverse not an asymptotic point

While there is consensus that some of the most emblematic functionalities of the Metaverse will become available only after several years of research and development, this does not mean that we should wait for and celebrate the magic moment in which the first Metaverse Instance will be “turned on”. Metaverse Instances exist today that offer significant subsets of the Functionalities identified in this document. While they are not implemented using the Common Metaverse Specifications, they should and will be considered by this document as Metaverse Instances.

12 The Metaverse is Digital

The MMM consistently uses the following adjectives with the meaning below:

- “Digitised” to refer to the data structure corresponding to the digital representation of an object.

- “Virtual” to refer to the data structure created by a computer, as opposed to a data structure entirely originated from an object.

- “Digital” to refer to both “Digitised” and “Virtual”.

13 What is a User

MPAI calls a Metaverse User either a Digitised Human driven by a human, or else a Virtual Human driven by a Process. A Metaverse User can be rendered and perceived as an avatar.
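The definitions of “Digitised”, “Virtual”, and Metaverse User can be captured in a small data structure. The sketch below is one possible reading, with hypothetical class names: a User is valid only if it is a Digitised Human driven by a human or a Virtual Human driven by a Process.

```python
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    DIGITISED = "digitised"  # digital representation of a real object
    VIRTUAL = "virtual"      # created by a computer


class Driver(Enum):
    HUMAN = "human"
    PROCESS = "process"


@dataclass(frozen=True)
class MetaverseUser:
    """Hypothetical model of a Metaverse User (a Digital Human)."""
    name: str
    origin: Origin
    driver: Driver

    def __post_init__(self):
        # Digitised Humans are driven by humans, Virtual Humans by Processes.
        expected = Driver.HUMAN if self.origin is Origin.DIGITISED else Driver.PROCESS
        if self.driver is not expected:
            raise ValueError(
                f"a {self.origin.value} Human must be driven by a {expected.value}"
            )


alice = MetaverseUser("alice", Origin.DIGITISED, Driver.HUMAN)
guide = MetaverseUser("guide", Origin.VIRTUAL, Driver.PROCESS)
print(alice.origin.value, guide.driver.value)  # digitised process
```

Both Users are “Digital” in the sense defined above, since that adjective covers both members of `Origin`.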

14 Representation and Presentation

The MMM is based on a clear distinction between the way information is digitally represented and the way information is rendered. For instance:

- A Digital Human is a Digital Object suitably represented as bits in a Metaverse Instance.

- An Avatar is a rendered Digital Human and is perceived as physical stimuli.

15 Scene and Object hierarchy

A Universe Environment is perceived as one or more scenes containing objects. When mapping it to a Metaverse Environment, a scene and its objects are digitally represented. It is desirable that the format of the Digital Scene Representation be shared by all Digital Scenes, whether they are Digitised or Virtual.

Objects have a digital representation composed of at least four types of data:

- Security Data: Data that guarantees the Identity of an Object.

- Private Data: Object-related Data accessible by specific Users.

- Public Data: Object-related Data accessible by all Users.

- Perceivable Presentation Data: Data used to render the object (e.g., a collection of avatar models that a User selects from as the current Persona).
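The four types of data listed above can be sketched as fields of a single record. The field names, the example values, and the `authorised_users` mechanism used to decide who may read Private Data are assumptions made for illustration; the document does not prescribe how access is granted.

```python
from dataclasses import dataclass, field


@dataclass
class DigitalObject:
    """Hypothetical digital representation of an Object."""
    security_data: str       # Data that guarantees the Identity of the Object
    private_data: dict       # Object-related Data accessible by specific Users
    public_data: dict        # Object-related Data accessible by all Users
    presentation_data: list  # Data used to render the Object
    authorised_users: set = field(default_factory=set)  # assumed access rule

    def readable_data(self, user: str) -> dict:
        """Return the Object-related Data that the given User may read."""
        data = dict(self.public_data)
        if user in self.authorised_users:
            data.update(self.private_data)
        return data


persona_set = DigitalObject(
    security_data="object-id-001",
    private_data={"owner_contact": "owner@example.org"},
    public_data={"label": "Persona collection"},
    presentation_data=["casual_avatar", "formal_avatar"],
    authorised_users={"alice"},
)

print(persona_set.readable_data("bob"))    # {'label': 'Persona collection'}
print(persona_set.readable_data("alice"))
```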

16 Regulation and Governance

The operation of a Metaverse Instance is typically regulated and governed depending on applicable law. This document assumes that the Functionalities identified are provided for use in conformity with applicable law. Section 8.2 identifies some of the regulatory and governance issues that will likely have to be addressed if Metaverse Instances offering seamless and rewarding Experiences to Users are to become possible.